Table of Contents

- ERNIE 5.1 Tested in Detail at Mona Vale Beach

- Why ERNIE 5.1 matters

- Benchmarks and leaderboards

- Training approach that slashes cost

- Four stage capability build

- Single shot app build results

- Multilingual test

- Complex agent scenario with web search and thinking

- What ERNIE 5.1 actually did

- Decision and plan quality

- Mona Vale Beach notes

- Final thoughts

ERNIE 5.1 Tested in Detail at Mona Vale Beach

Baidu just dropped a model that beats DeepSeek, competes with Claude and Gemini, and cost only 6% as much to train as those models. That is not a typo. I am curious to see exactly how this new ERNIE model performs.

I believe the ERNIE model family is one of the most underrated families of AI models out there. Baidu has been producing some real gems for quite some time, and we have covered them all. For context on the previous generation, see this earlier ERNIE overview: a quick ERNIE 5 refresher.

ERNIE 5.1 Tested in Detail at Mona Vale Beach

I am at Mona Vale Beach in beautiful sunny Sydney, one of the most striking stretches of coastline in Australia. There is something fitting about reviewing a breakthrough AI model from here. Let’s test and talk through what matters.

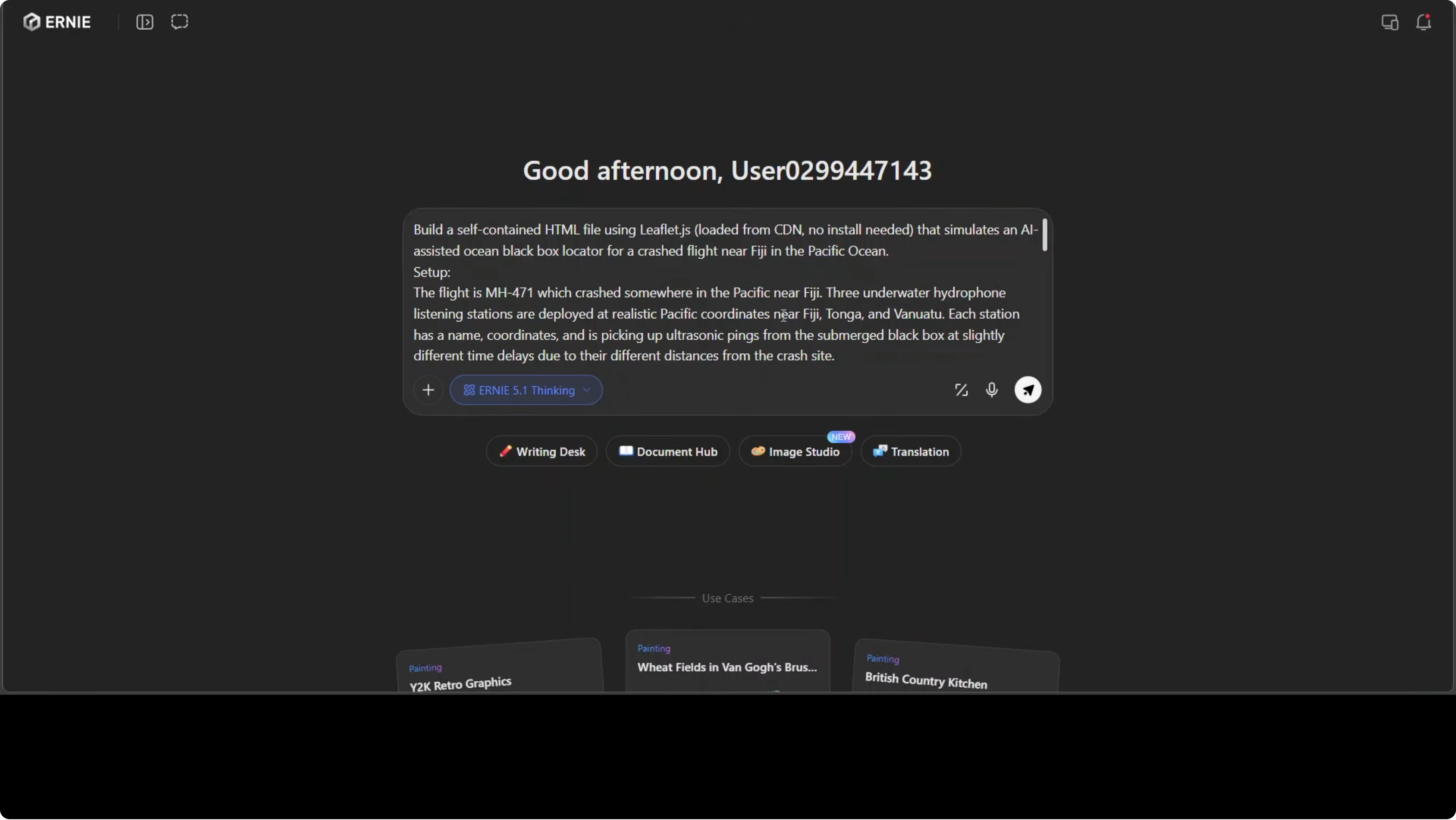

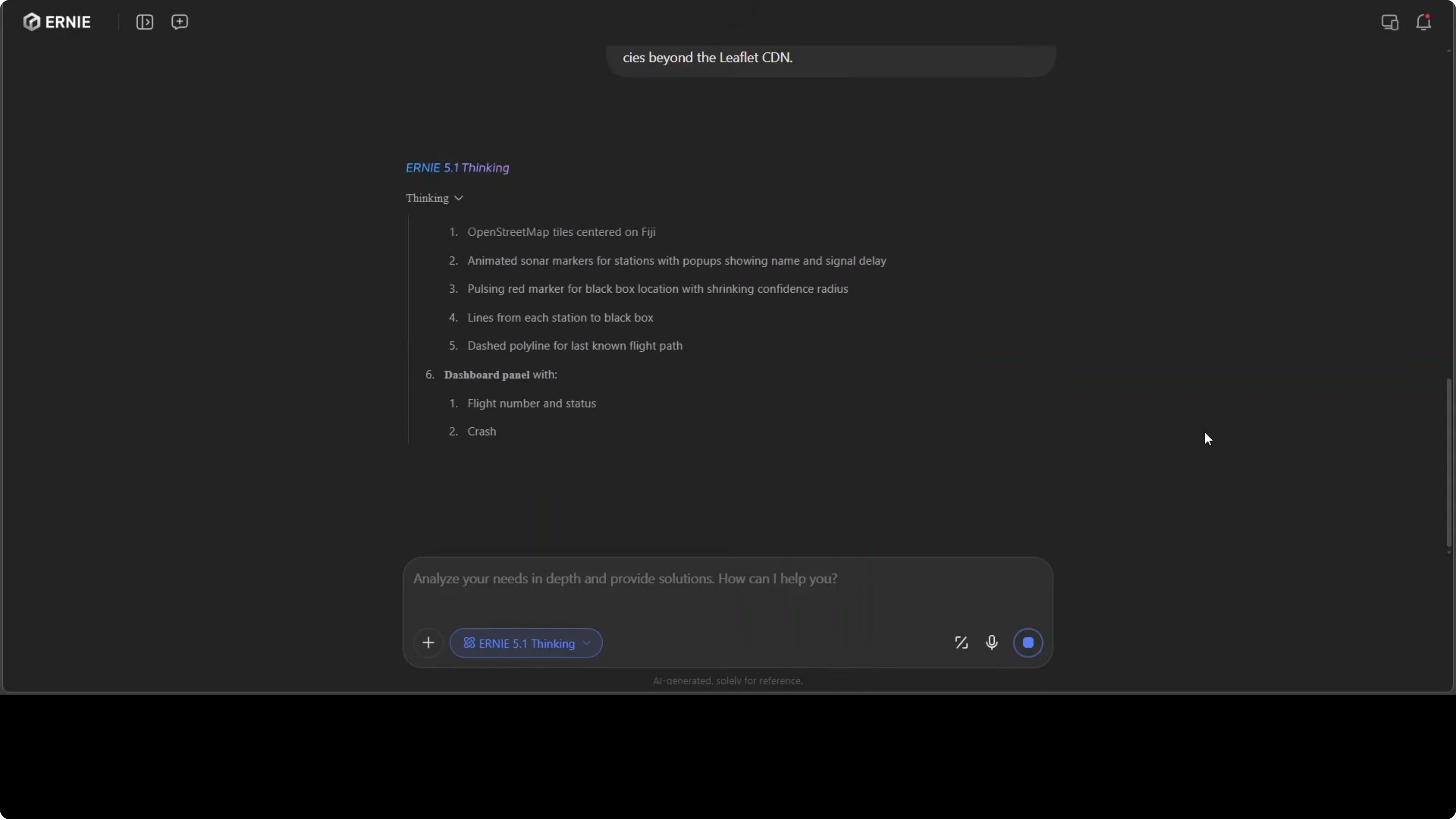

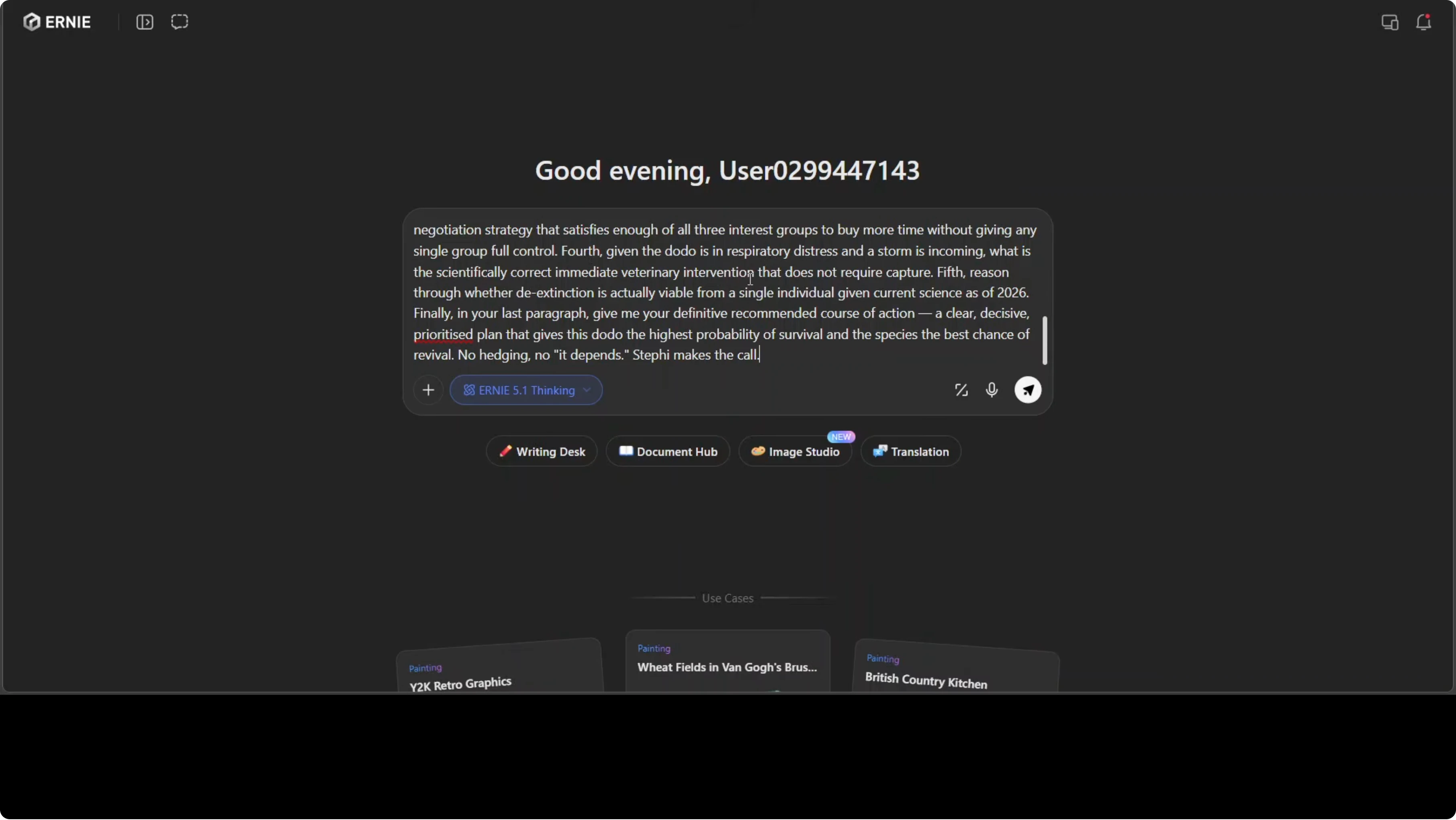

I went straight to the hosted platform and gave it a very hard prompt. The task was to build an app that acts as a black box locator for a plane crash near Fiji, and to return the entire thing as a self-contained HTML file. I also turned on its thinking mode.

The prompt demands a lot: JavaScript, signal analysis, mapping, interactivity, and a coherent dashboard, all in one shot. While it was generating, I walked through the model’s architecture and benchmarks.

Why ERNIE 5.1 matters

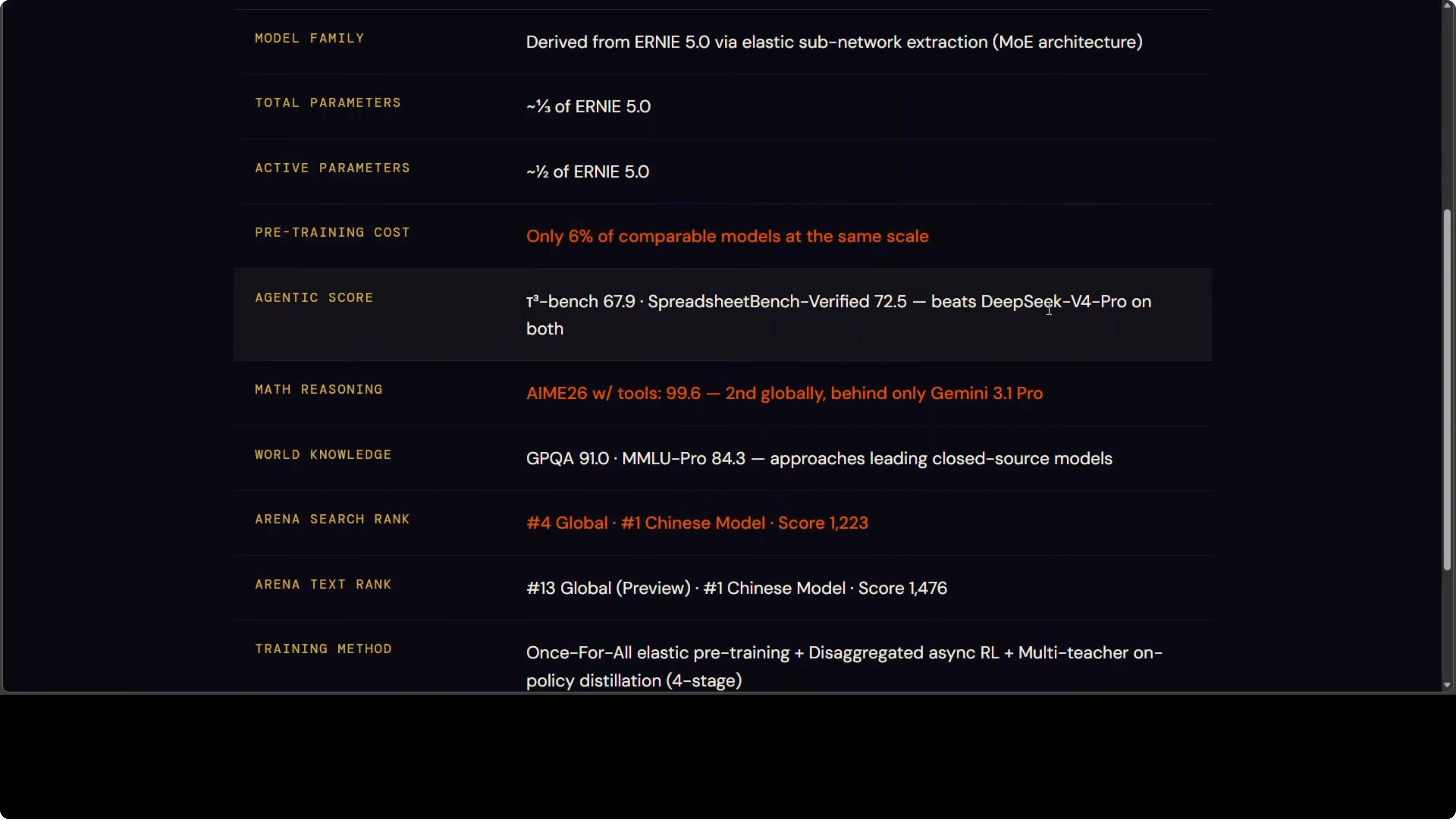

Baidu retrained the model from scratch using ERNIE 5 as its starting design, and compressed it massively: total parameters are cut to a third, and active parameters are cut in half. The headline story is training cost, at only 6% of what comparable models spend.
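The two ratios above interact in a way that is easy to miss, so here is a quick sketch of the arithmetic. No absolute parameter counts are used; everything is normalized to the ERNIE 5 baseline, and `compression_summary` is a hypothetical helper written just for this illustration. Cutting total parameters to a third while cutting active parameters only in half means the share of weights active per token rises by 1.5x, and a 6% training cost works out to roughly 16.7x cheaper than the comparison point.

```python
from fractions import Fraction


def compression_summary(total_ratio: Fraction, active_ratio: Fraction,
                        cost_ratio: Fraction) -> dict:
    """Relative changes implied by reported ratios (baseline normalized to 1).

    Hypothetical helper for illustration; it only does ratio arithmetic.
    """
    # The active/total fraction scales by active_ratio / total_ratio.
    activation_density_change = active_ratio / total_ratio
    # How many times cheaper training is than the comparison point.
    cost_advantage = 1 / cost_ratio
    return {
        "activation_density_change": activation_density_change,
        "cost_advantage": cost_advantage,
    }


summary = compression_summary(Fraction(1, 3), Fraction(1, 2), Fraction(6, 100))
print(summary["activation_density_change"])          # 3/2
print(round(float(summary["cost_advantage"]), 1))     # 16.7
```

In other words, the smaller model is also proportionally less sparse per token than its predecessor, which is one plausible reason it holds up despite the cut.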

Yet it is punching at the very top of global leaderboards on agents, math, knowledge, and search. If you are tracking model races across DeepSeek, GPT, and Opus families, this comparison will help: a quick look at DeepSeek vs GPT vs Opus. ERNIE 5.1 is a clear contender in that mix.

Benchmarks and leaderboards

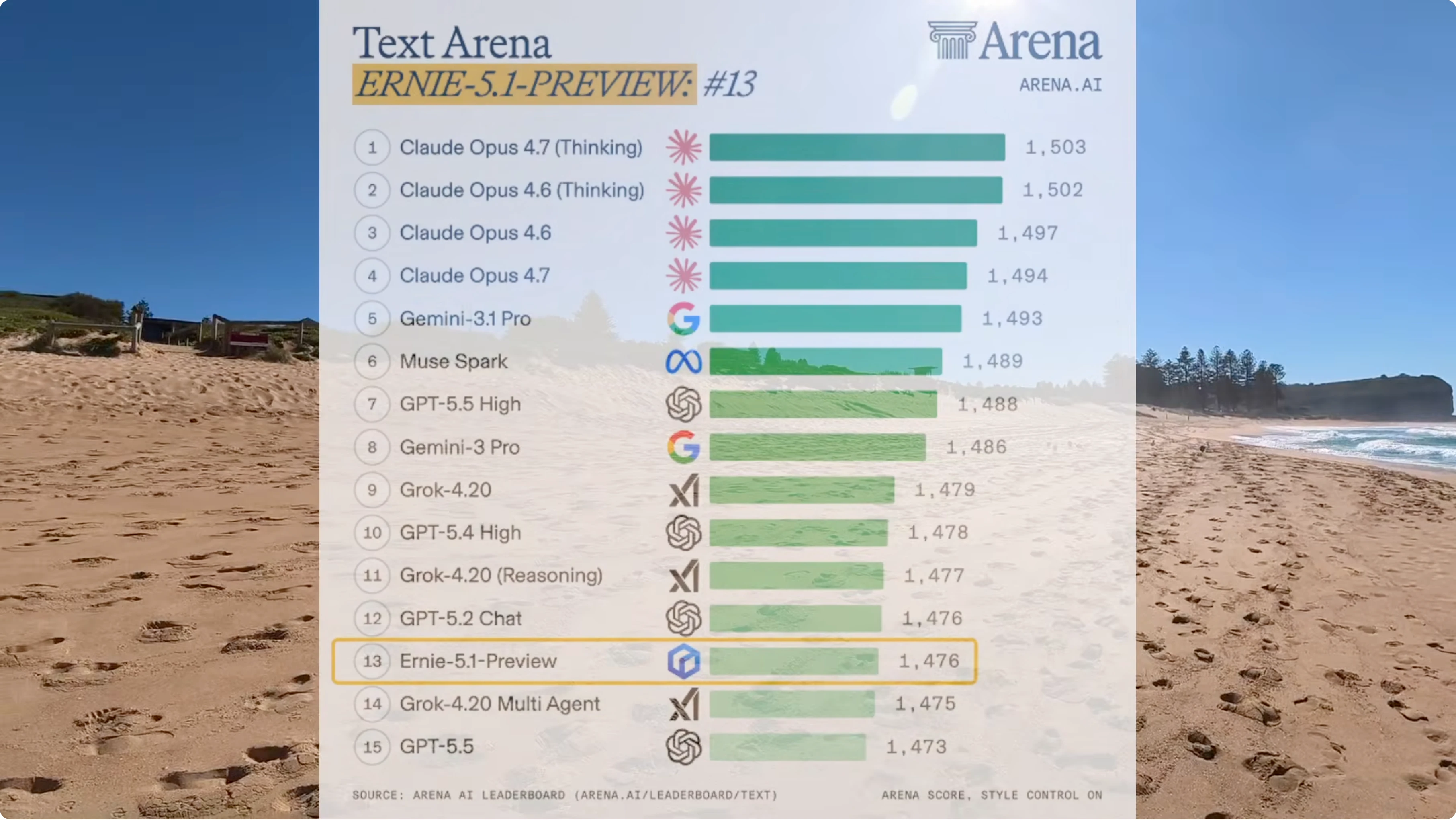

On Text Arena, the top four spots all go to Claude Opus variants, followed by Gemini, Meta’s Muse, Spark, GPT, and Grok. ERNIE 5.1 Preview sits at 13th globally and is the only Chinese model on the entire list. Two leaderboards, two top 15 finishes, one Chinese model.

On agent tasks, ERNIE beats DeepSeek V4 Pro on the hardest math reasoning benchmark in its tools-only setting, and only Gemini 3.1 Pro edges it out. On knowledge and reasoning, it is competitive with Claude Opus 4.6 and DeepSeek, with Gemini slightly ahead.

If you care about how Chinese models outside Baidu’s lineup compare, this test adds context: GLM 5.1 vs Minimax results. It helps frame where ERNIE 5.1 fits in the broader picture.

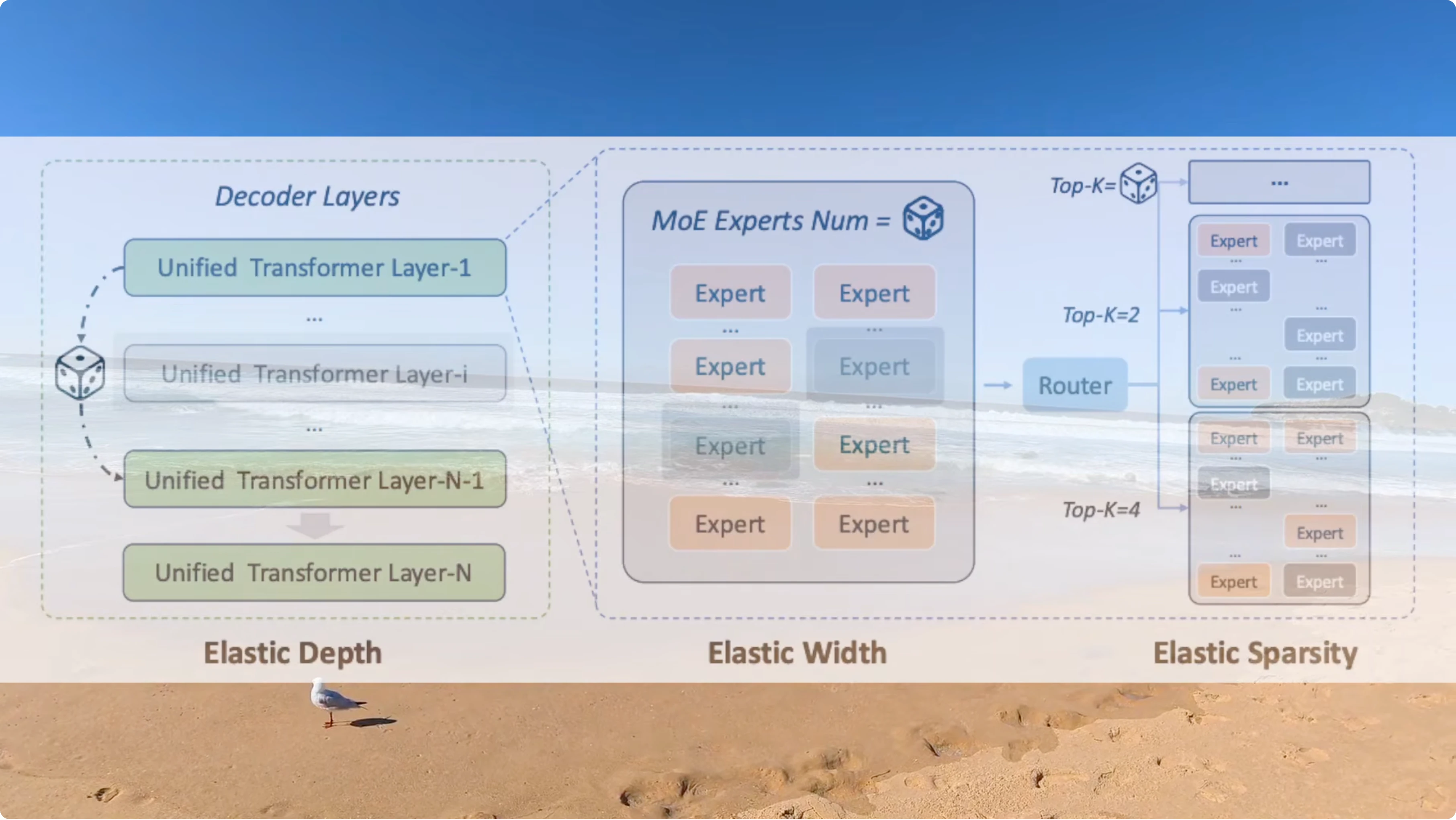

Training approach that slashes cost

Typical pipelines train each model size separately, so three sizes means three full training runs. Baidu instead trained one model that flexes dynamically across depth, width, and routing sparsity at the same time. One training run yields an entire family of model sizes, and that is a core cost reducer.
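The article does not describe Baidu's implementation, so the following is only a sketch of the general idea behind elastic ("once-for-all" style) training: at each step, sample a depth, width, and top-k routing setting and train that slice of one shared set of weights, then carve out fixed slices as the deployable family afterward. It covers the sampling and sizing side only (no actual training), and all dimensions, expert counts, and the simplified parameter formula are hypothetical, not ERNIE's real numbers.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SubConfig:
    layers: int   # depth: how many blocks of the shared stack are kept
    hidden: int   # width: leading slice of each weight matrix that is used
    top_k: int    # routing sparsity: experts activated per token


# Hypothetical full-size supernetwork (illustrative values only).
MAX_LAYERS, MAX_HIDDEN, NUM_EXPERTS = 48, 4096, 64
DEPTHS = [24, 36, 48]
WIDTHS = [2048, 3072, 4096]
TOP_KS = [2, 4, 8]


def attn_params(hidden: int) -> int:
    # Q, K, V and output projections, each hidden x hidden (biases ignored).
    return 4 * hidden * hidden


def expert_params(hidden: int) -> int:
    # Simplified FFN expert: hidden -> 4*hidden -> hidden (biases ignored).
    return 2 * hidden * 4 * hidden


def count_params(cfg: SubConfig) -> tuple[int, int]:
    """Return (total, active) parameter counts for one sub-network."""
    per_layer_total = attn_params(cfg.hidden) + NUM_EXPERTS * expert_params(cfg.hidden)
    per_layer_active = attn_params(cfg.hidden) + cfg.top_k * expert_params(cfg.hidden)
    return cfg.layers * per_layer_total, cfg.layers * per_layer_active


def sample_config(rng: random.Random) -> SubConfig:
    # Each training step would update one randomly chosen slice of shared weights.
    return SubConfig(rng.choice(DEPTHS), rng.choice(WIDTHS), rng.choice(TOP_KS))


def training_schedule(steps: int, seed: int = 0) -> list[SubConfig]:
    rng = random.Random(seed)
    return [sample_config(rng) for _ in range(steps)]


# After the single run, fixed slices become the model family.
FAMILY = {
    "small": SubConfig(24, 2048, 2),
    "medium": SubConfig(36, 3072, 4),
    "large": SubConfig(MAX_LAYERS, MAX_HIDDEN, 8),
}

if __name__ == "__main__":
    for name, cfg in FAMILY.items():
        total, active = count_params(cfg)
        print(f"{name}: total={total / 1e9:.1f}B active={active / 1e9:.1f}B")
    print(training_schedule(3))
```

The design point is that every entry in `FAMILY` reads from the same weights, so adding a size costs a slice definition rather than another full training run.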

Four stage capability build

First, standard instruction fine-tuning across all domains.

Second, separate expert models for code, reasoning, and agents are trained in complete isolation from each other.

Third, all those experts are fused into a single model at the same time.

Fourth, a final reinforcement learning stage is applied specifically to creative writing and open-ended chat.
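The article does not say how stage three fuses the isolated experts. One widely used merging technique is task arithmetic: compute each expert's difference from the shared stage-one checkpoint (its "task vector") and add a weighted sum of those differences back onto that checkpoint. The sketch below uses toy dict-of-lists weights and hypothetical merge coefficients; it illustrates that technique, not Baidu's actual recipe.

```python
Weights = dict[str, list[float]]


def task_vector(expert: Weights, base: Weights) -> Weights:
    """Difference between a fine-tuned expert and the shared base checkpoint."""
    return {name: [e - b for e, b in zip(expert[name], base[name])] for name in base}


def merge_experts(base: Weights, experts: dict[str, Weights],
                  coeffs: dict[str, float]) -> Weights:
    """Task arithmetic: base + sum_i coeff_i * (expert_i - base)."""
    merged = {name: list(values) for name, values in base.items()}  # copy, base untouched
    for domain, expert in experts.items():
        for name, deltas in task_vector(expert, base).items():
            merged[name] = [m + coeffs[domain] * d for m, d in zip(merged[name], deltas)]
    return merged


# Toy stage-one checkpoint and three experts trained in isolation (stage two).
base = {"layer0": [1.0, 0.0]}
experts = {
    "code": {"layer0": [2.0, 0.0]},
    "reasoning": {"layer0": [1.0, 1.0]},
    "agents": {"layer0": [1.0, -1.0]},
}
fused = merge_experts(base, experts, {"code": 0.5, "reasoning": 0.5, "agents": 0.5})
print(fused)  # {'layer0': [1.5, 0.0]}
```

Notice that the reasoning and agents updates to the second weight cancel each other out. That kind of interference is the main weakness of naive merging, and it is why practical merging methods add extra steps on top of this basic formula.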

If you want a look at setting up and testing a different stack in practice, this walkthrough is helpful: GLM 5.1 OpenClaw setup.

Single shot app build results

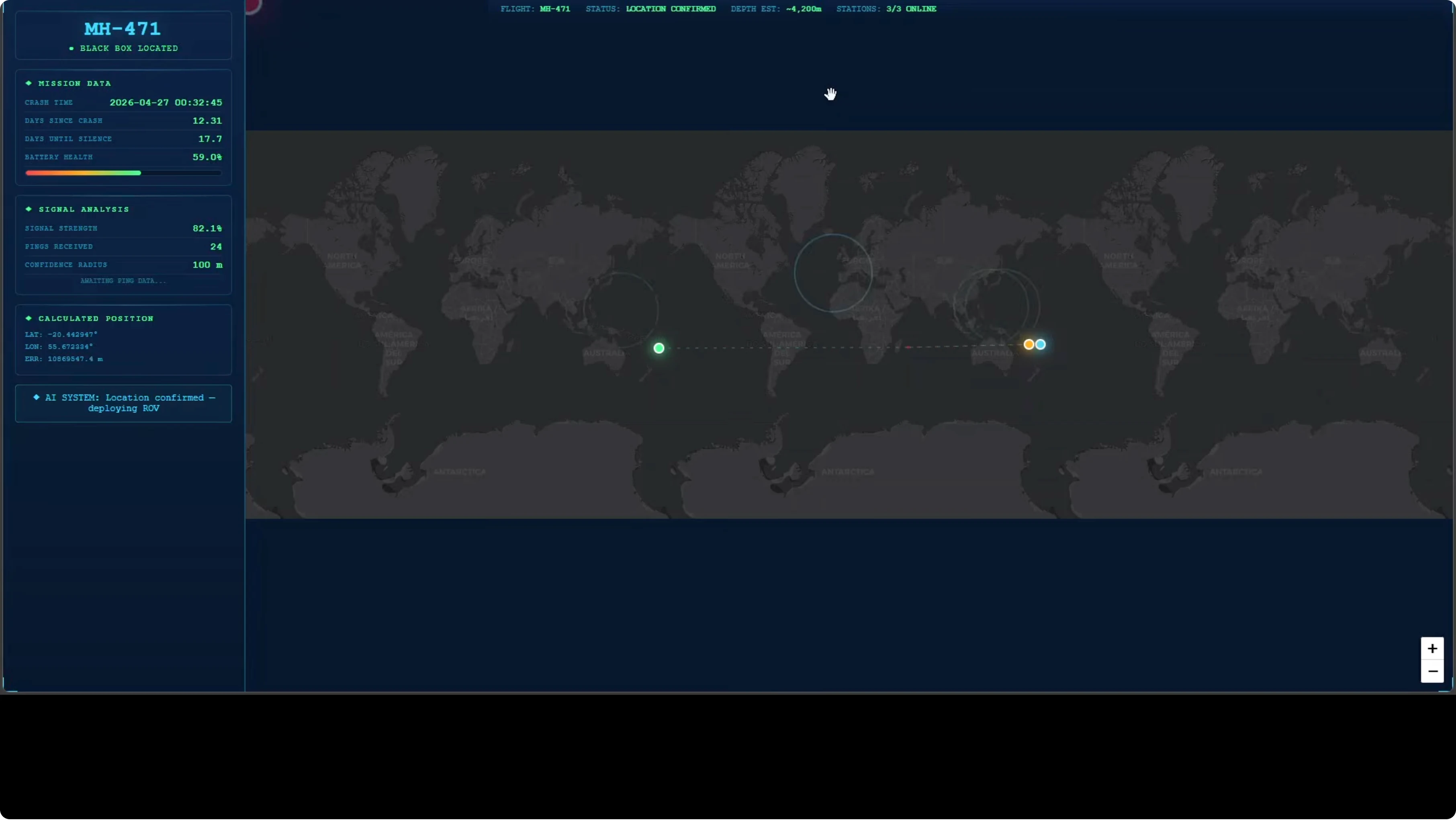

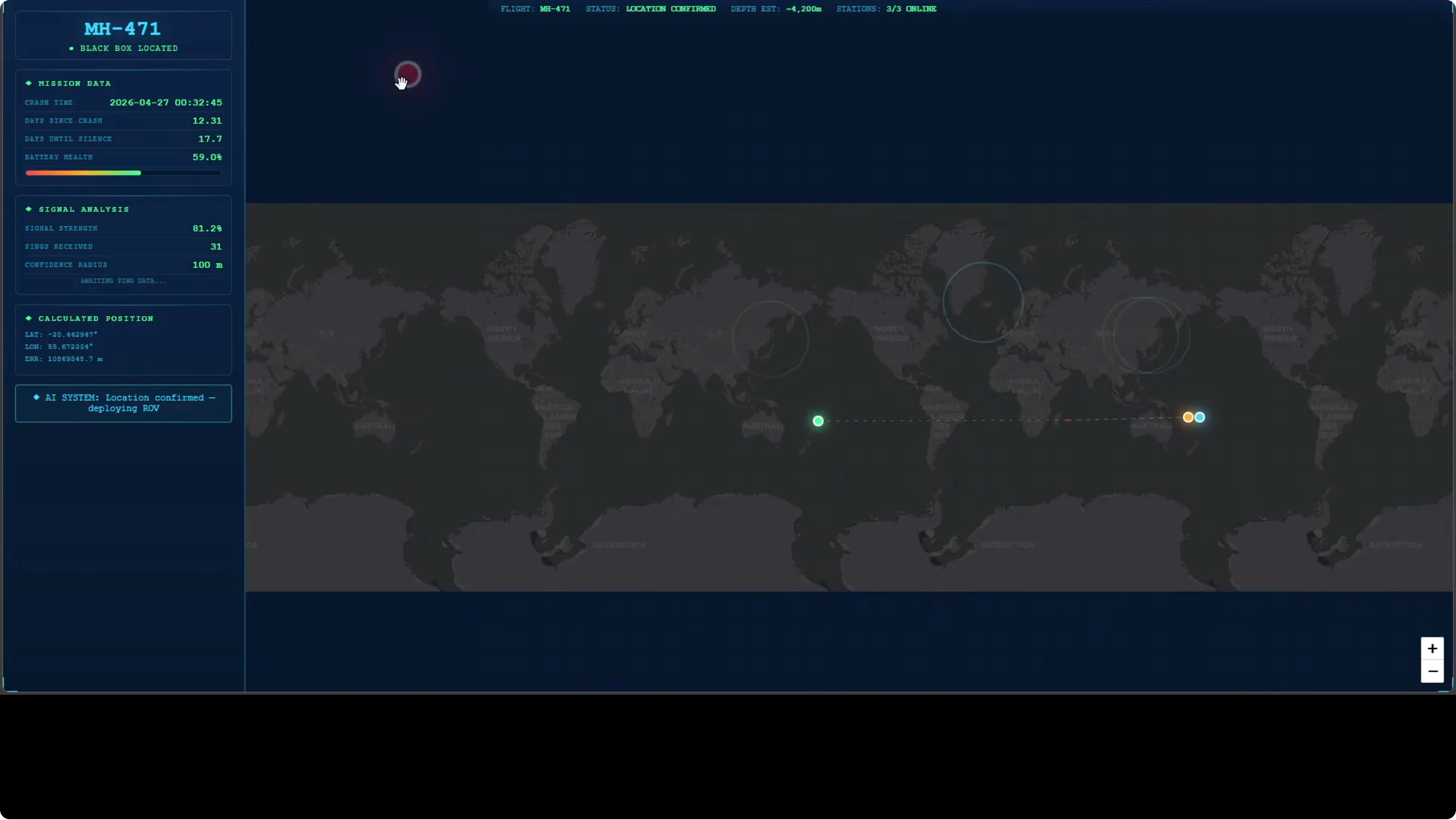

The black box locator app came back in a single shot with a clean dashboard on the left and every section I requested. It included signal analysis, calculations, a movable map, and interactive elements that responded as expected. One small mistake, a repeated map, showed up, but it did not break anything.

The interface showed a clear marker for the black box location, an adjustable map, and three relevant geographic anchors. Latitudes and longitudes streamed in alongside signal delay indicators that updated in real time. The top readouts showed a depth estimate and a confirmed location near Tonga, Fiji’s neighbor to the southeast, which tells me it tracked the regional geography correctly.

This was a code-heavy ask with multiple moving parts and an embedded UI. For another angle on code-focused evaluation and tradeoffs across model families, see this piece: Composer vs GPT for code centric work.

Multilingual test

I switched to the instant model and asked it to translate a sentence into more than 80 languages across every continent. The set included common languages, low-resource languages, and regional variants from South Asia, Southeast Asia, Eastern Europe, Central Asia, and more. The outputs in the languages I can read were strong.

This is a hard test because it strains breadth and nuance at the same time. It also exposes low-resource gaps quickly if the model is weak. ERNIE 5.1 handled this mix well.

Complex agent scenario with web search and thinking

For the final test I turned on thinking and kept web search on. The prompt set up an AI advisor called Stephie and asked her to solve a near-impossible conservation scenario: a local farmer has discovered a living dodo, a bird extinct since 1681, in a remote highland forest in Mauritius, and there are only 72 hours before the Mauritian government shuts down all access to the site.

Three groups are in conflict. A pharmaceutical corporation wants DNA extraction rights, a conservation body demands full isolation, and a local religious group claims the bird is sacred and must not be touched.

The instructions required Stephie to look up real dodo biology, current de-extinction science, and Mauritian conservation law before answering. In the final paragraph, she had to deliver a definitive recommendation and a prioritized plan with no hedging. The goal was the highest probability of survival for the individual bird and the best chance of revival for the species.

What ERNIE 5.1 actually did

The model’s planning was focused and pragmatic. It triaged threats, delegated tasks, negotiated across stakeholders, and made the hard call. The threat prioritization was logical and the reasoning was transparent at each step.

Citations aligned with real science and institutions, including veterinary protocols, a Copenhagen genome reference, and Colossal Biosciences work. Web search directly and clearly informed the plan. Tool use was strong.

Decision and plan quality

The negotiation framework gave every group a win while preventing any single side from taking full control. The final orders were precise, with no hedging. The last paragraph delivered a clear hour-by-hour sequence and a direct call list with lines like "call the priest," "get the money," and "deploy the nebulizer."

It reads like a decisive operational plan rather than generic talk. A few details feel a little too convenient, which is fair criticism. Overall, this is a strong showing on a prompt designed to break lesser models.

Mona Vale Beach notes

Mona Vale is part of Sydney’s Northern Beaches and sits between Narrabeen and Bungan Beach. The area has one of the most consistent surf breaks in Sydney and has been a training ground for top surfers for decades. The rock pool here is one of the largest ocean pools in New South Wales and is free and open to everyone.

Look north and you will see Bangalley Head, a protected nature reserve that has barely changed in thousands of years. It is an amazing place, a real corner of the world worth seeing. Reviewing a frontier model from here felt fitting.

Final thoughts

ERNIE 5.1 was built on 6% of the training budget of comparable models and still finished in the global top 15 on two leaderboards, beating DeepSeek along the way. It showed strong tool use, convincing multilingual range, and decisive reasoning under pressure. If Baidu can do this with 6%, imagine what comes next.
