Composer 1 vs GPT-5 Codex

Cursor 2.0 has landed with its own coding model, Composer 1. I put Composer 1 head to head with GPT-5 Codex in identical environments and used the same prompts for both. I wanted to see which one performed better and how quickly they could ship a playable browser game.

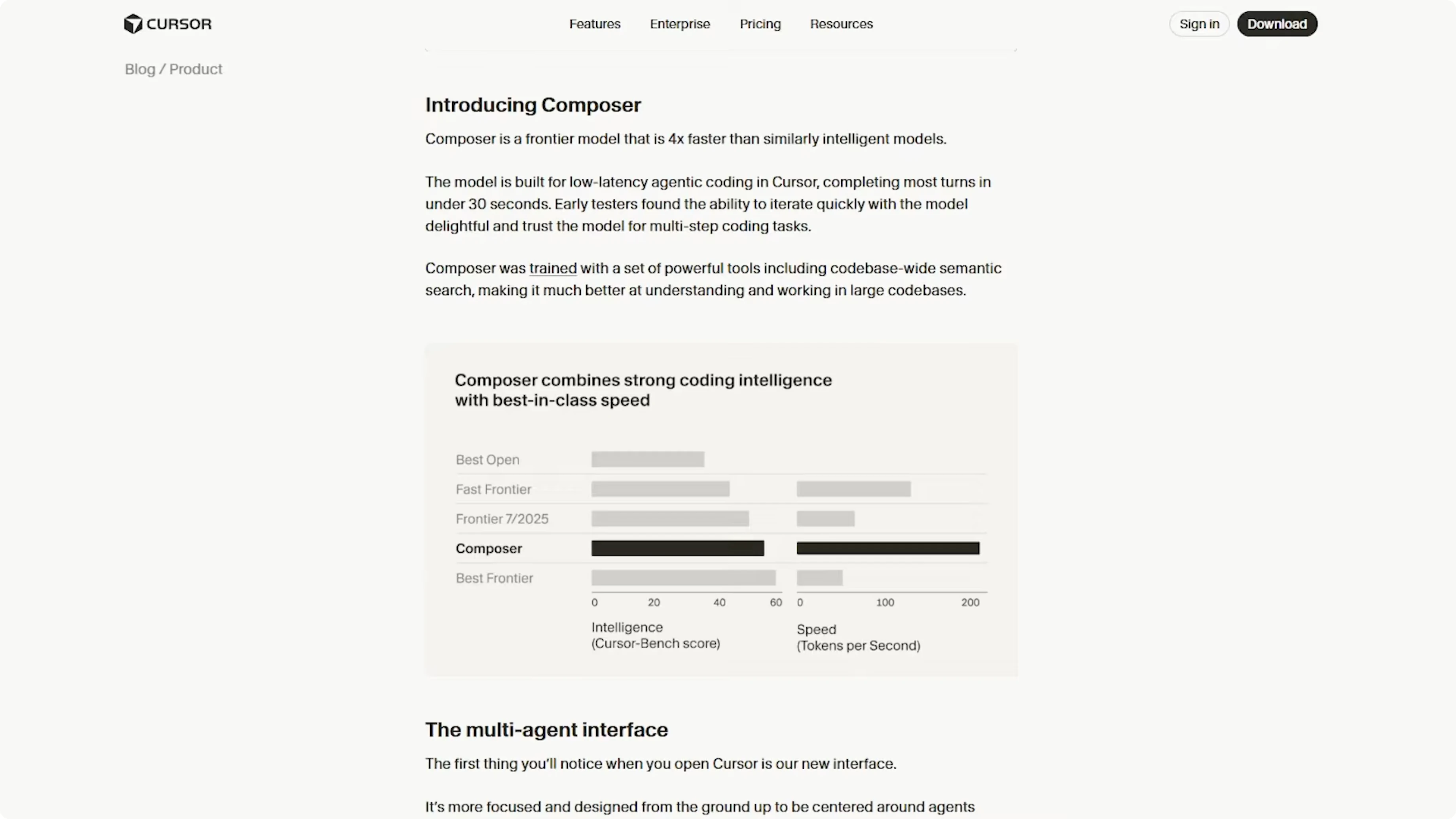

Composer 1 is positioned as a fast model for low-latency agentic coding in Cursor. The docs claim most turns complete in under 30 seconds and that the model iterates reliably on multi-step coding tasks. It also ships with codebase-wide semantic search to better understand and work across large codebases, which I plan to test on future runs.

Composer 1 vs GPT-5 Codex setup

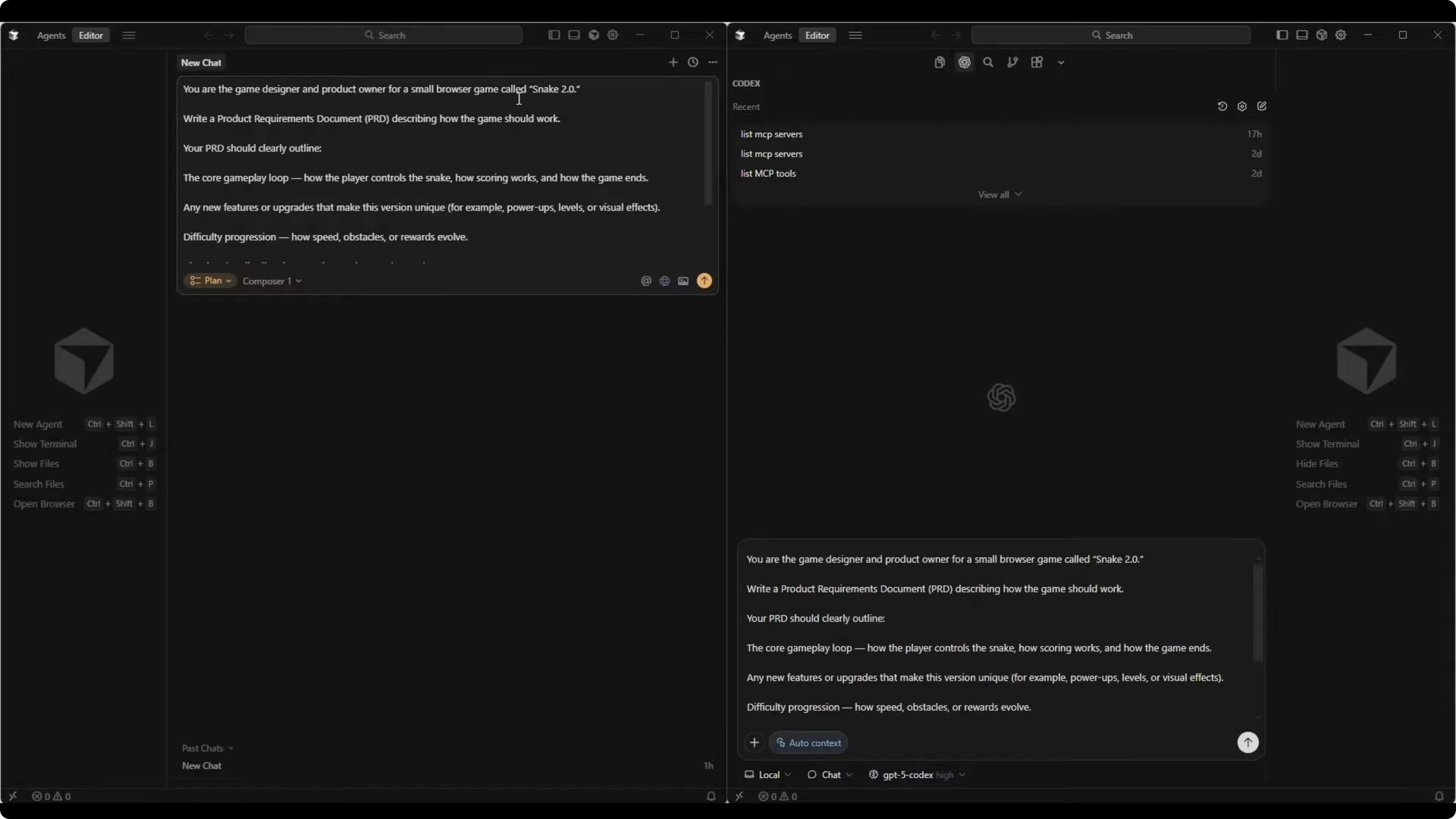

For this test, I gave each model its own empty directory. I asked each to first write a PRD for a small browser game called Snake 2.0, and then to build that game based on its own PRD. I used plan mode for both initially, then switched to agent mode for the build.

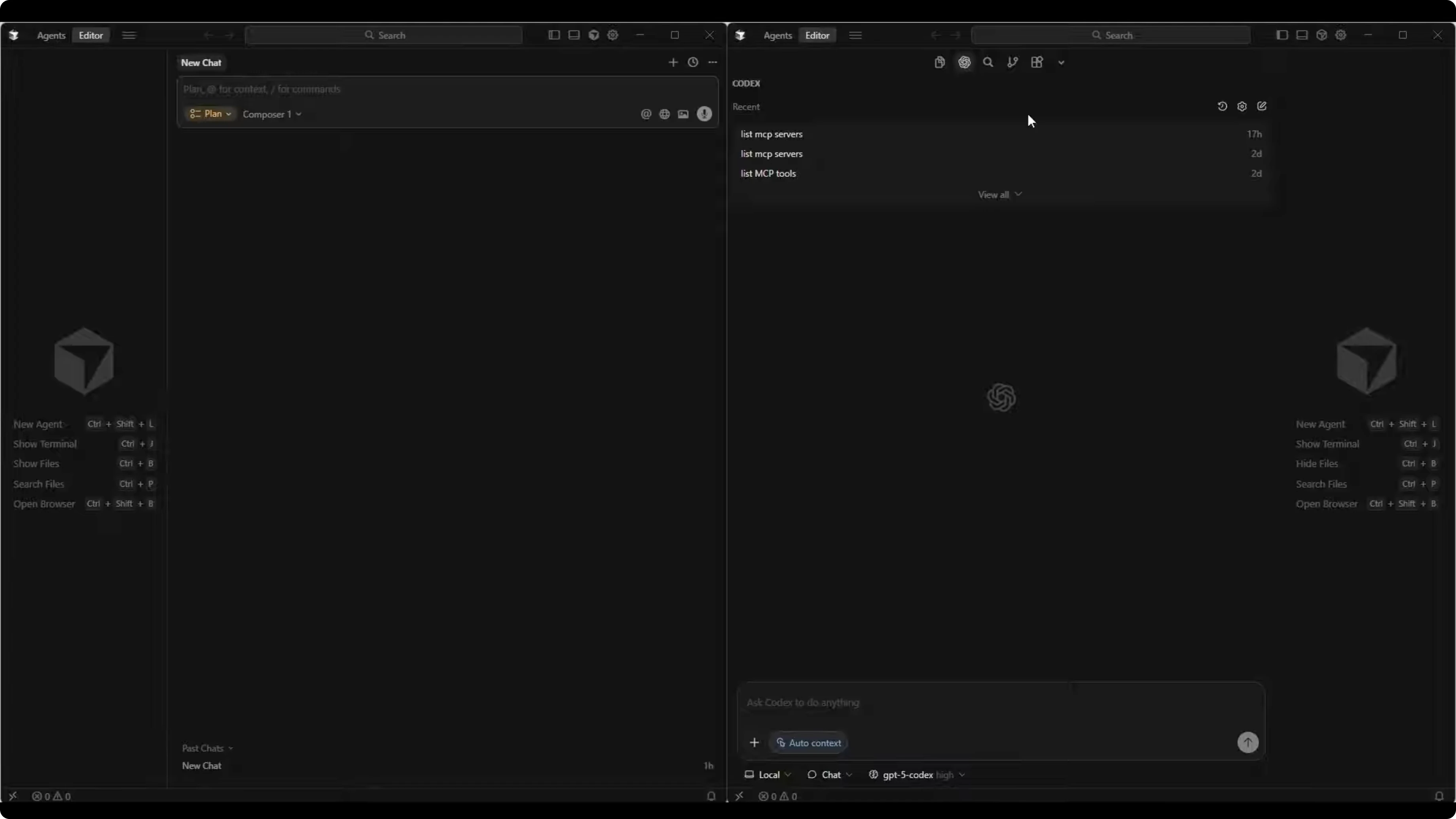

Composer 1 had plan mode enabled on the left side of my workspace. GPT-5 Codex High ran on the right side in chat plan mode. The core idea was identical prompts, identical constraints, and a direct comparison of speed and output.

Composer 1 vs GPT-5 Codex PRD test

Here is the exact PRD prompt I gave both models:

You are the game designer and product owner for a small browser game called Snake 2.0. Write a PRD describing how the game should work.

Outline:

- The core gameplay loop

- Any new features

- Difficulty progression

- Visual and audio direction

- A simple technical plan for implementing it as a browser game using HTML, CSS, and JavaScript

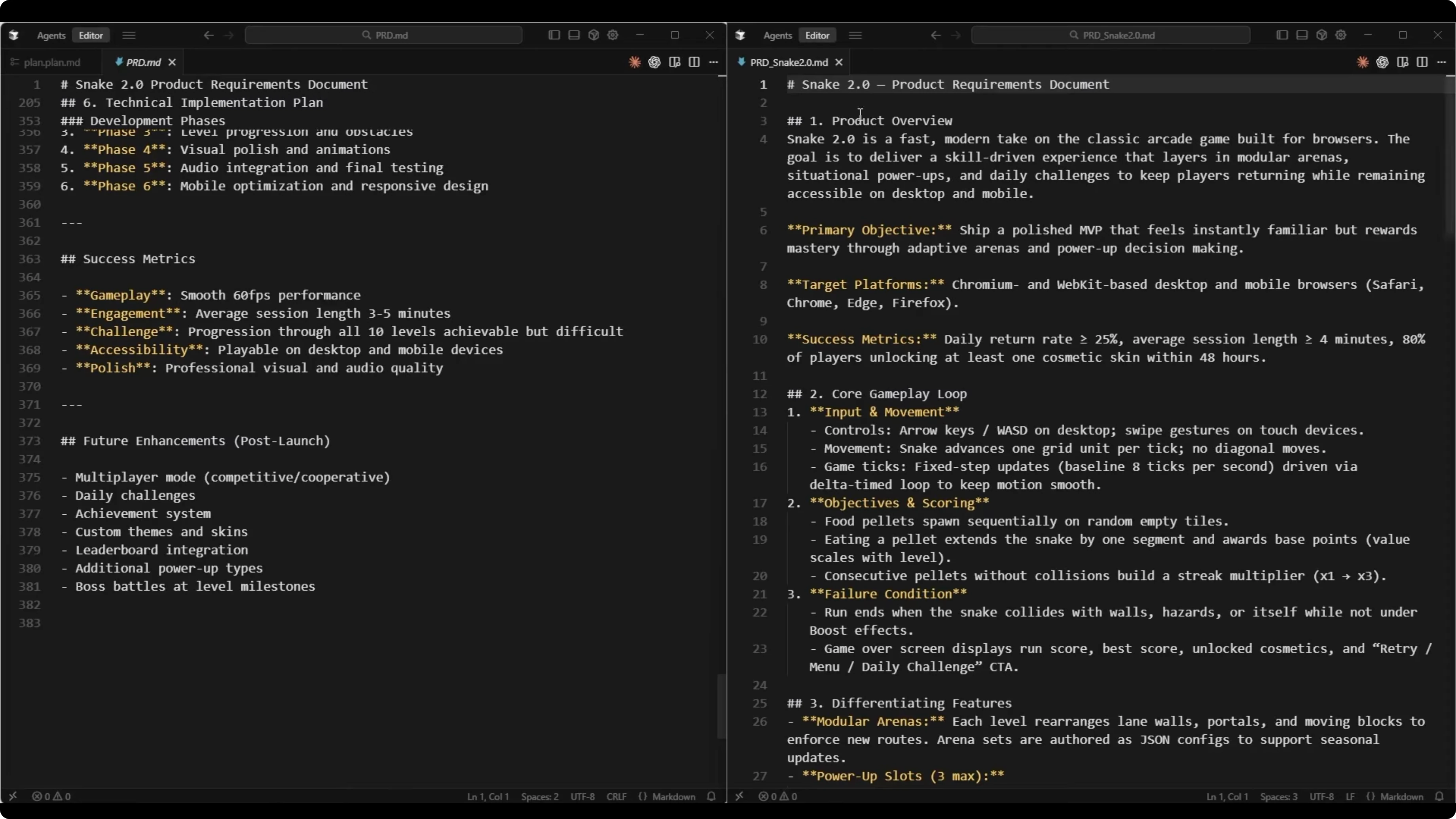

Keep the PRD concise, creative, and developer ready so that someone could start building the game immediately after reading it.Composer 1 produced a detailed PRD broken into clear sections. It included an overview and vision, a defined target audience, a unique value proposition, and a core gameplay loop. It also outlined snake movement, food collection and scoring, collision detection, and game over conditions.
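To make the core loop concrete, here is a minimal sketch of one game tick covering movement, food collection, scoring, and collision. It is not taken from either model's output: the `step` function, the state shape, the 20 x 20 grid, and the 10-point food value are all assumptions for illustration.

```javascript
// Hypothetical helper; names and numbers are illustrative assumptions.
const GRID_SIZE = 20; // assumed square grid of 20 x 20 cells

// Advance the game by one tick and return a new state object.
function step(state, gridSize = GRID_SIZE) {
  const head = state.snake[0];
  const next = { x: head.x + state.dir.x, y: head.y + state.dir.y };

  // Leaving the grid counts as a wall collision.
  const hitWall =
    next.x < 0 || next.y < 0 || next.x >= gridSize || next.y >= gridSize;

  const ateFood = next.x === state.food.x && next.y === state.food.y;

  // The tail cell is vacated this tick unless the snake grows,
  // so it is excluded from the self-collision check in that case.
  const body = ateFood ? state.snake : state.snake.slice(0, -1);
  const hitSelf = body.some((c) => c.x === next.x && c.y === next.y);

  if (hitWall || hitSelf) {
    return { ...state, gameOver: true };
  }

  return {
    ...state,
    snake: [next, ...body],
    score: state.score + (ateFood ? 10 : 0),
    needsFood: ateFood, // the caller is expected to respawn food
  };
}
```

Keeping the tick a pure function of the previous state makes it easy to test movement and collisions without a canvas.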

Features included a power-up system with speed boost, shield, score multiplier, shrink, slow motion, and magnet. It added special food types, level progression, and obstacle mechanics. Difficulty progression specified speed ramp-up, progressive obstacle introduction, scoring multipliers, and a difficulty curve philosophy.
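A speed ramp-up like the one described can be sketched as a tick interval that shrinks per level down to a floor. The constants and the `tickIntervalMs` name below are my own assumptions, not values from Composer 1's PRD, and the slow-motion modifier is just one plausible way to model that power-up.

```javascript
// All numbers are illustrative assumptions, not values from either PRD.
const BASE_TICK_MS = 160; // tick interval at level 1
const MIN_TICK_MS = 60; // floor so the game stays playable
const TICK_FACTOR_PER_LEVEL = 0.9; // each level shortens the tick by 10%

function tickIntervalMs(level, slowMotionActive = false) {
  const ramped = BASE_TICK_MS * Math.pow(TICK_FACTOR_PER_LEVEL, level - 1);
  const clamped = Math.max(MIN_TICK_MS, ramped);
  // Slow motion is modelled here as a 1.5x longer tick.
  return Math.round(slowMotionActive ? clamped * 1.5 : clamped);
}
```

For example, `tickIntervalMs(1)` gives 160 and `tickIntervalMs(20)` is clamped to 60, so late levels stop getting faster once the floor is reached.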

Visual and audio design covered UI and UX direction, audio cues, and responsive design. The technical implementation plan mapped a project directory with required files, core architecture, state management, canvas rendering, and core classes or modules. It closed with performance considerations, success metrics, and potential future enhancements, totaling 381 lines.

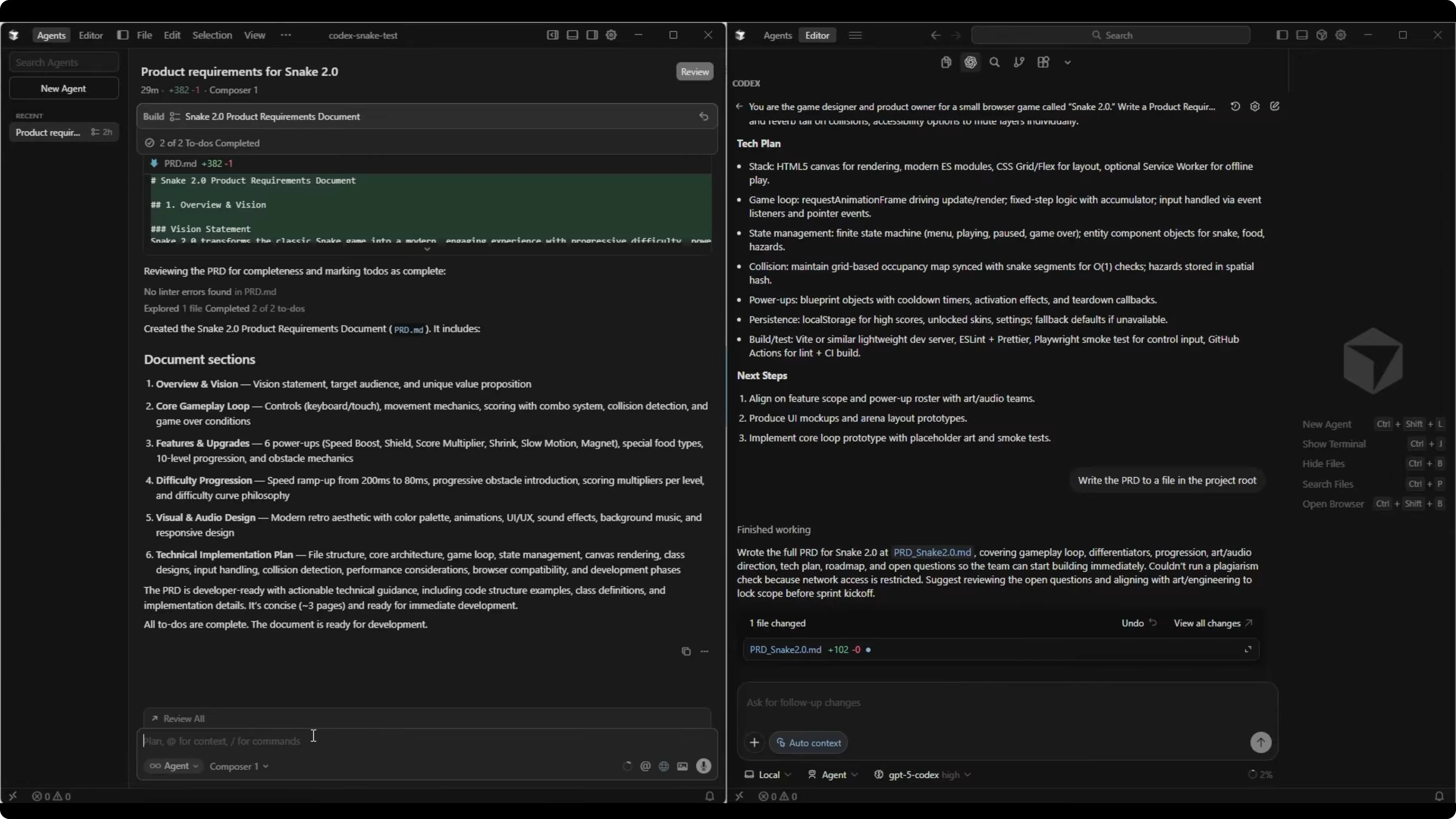

GPT-5 Codex produced a shorter PRD at 102 lines. It included product overview, primary objective, target platforms, and success metrics. The core gameplay loop covered input and movement, objectives and scoring, failure condition, and differentiating features.

It defined difficulty progression, visual and audio direction, and a technical implementation plan with architecture, game loop and state, content and progression, persistence and services, and tooling and QA. A roadmap outlined pre-production, core, build, polish, and launch. While shorter, my past results with Codex show that even concise PRDs can build well.

Both models finished the PRD in under 30 seconds, which matches the speed claim for Composer 1 and shows GPT-5 Codex was just as responsive at this stage. Composer 1's PRD had more depth, while the Codex PRD stayed compact and structured.

Composer 1 vs GPT-5 Codex build test

I switched both to agent mode and asked them to build the game using their own PRDs. Here is the exact build prompt I used:

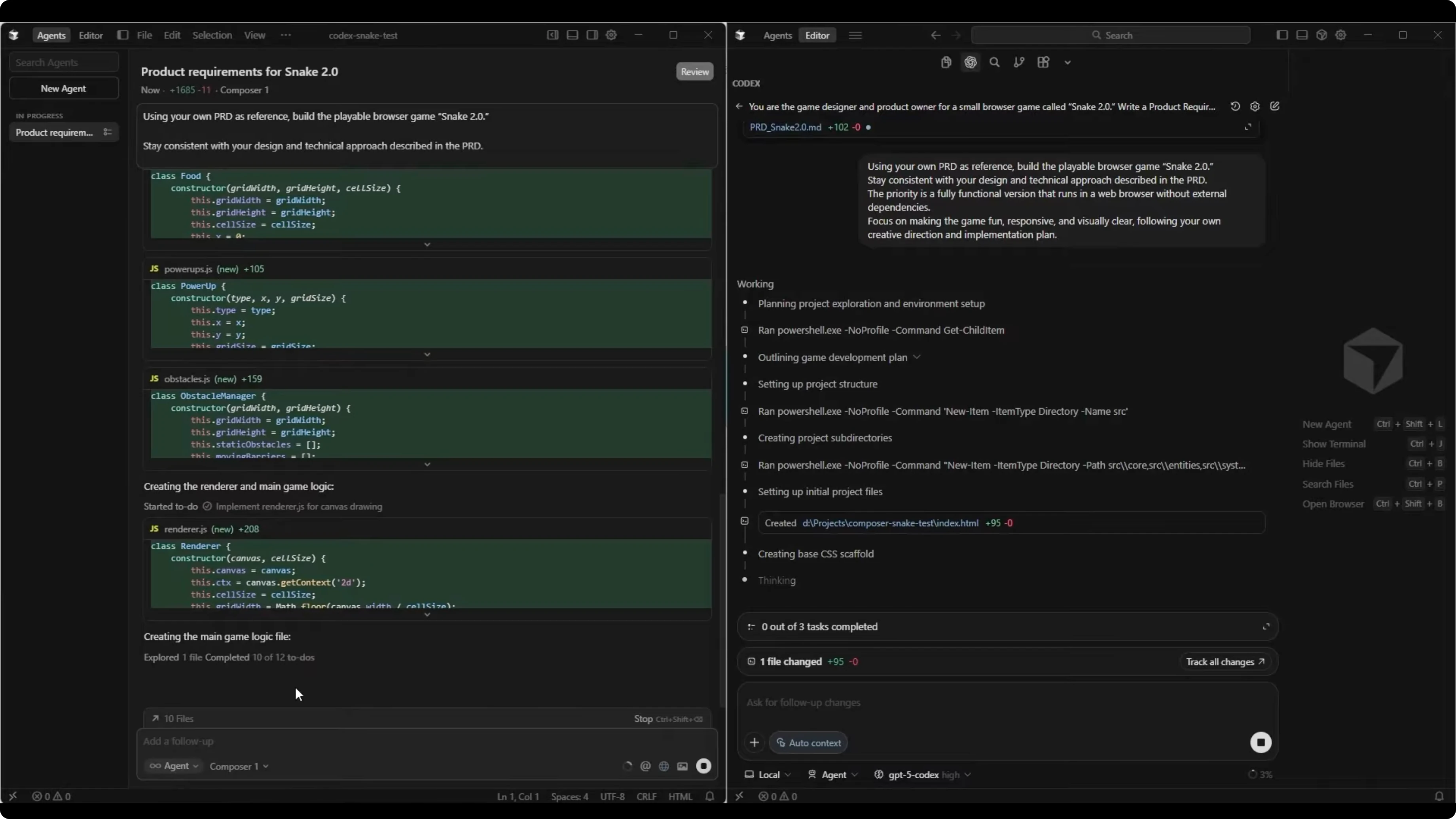

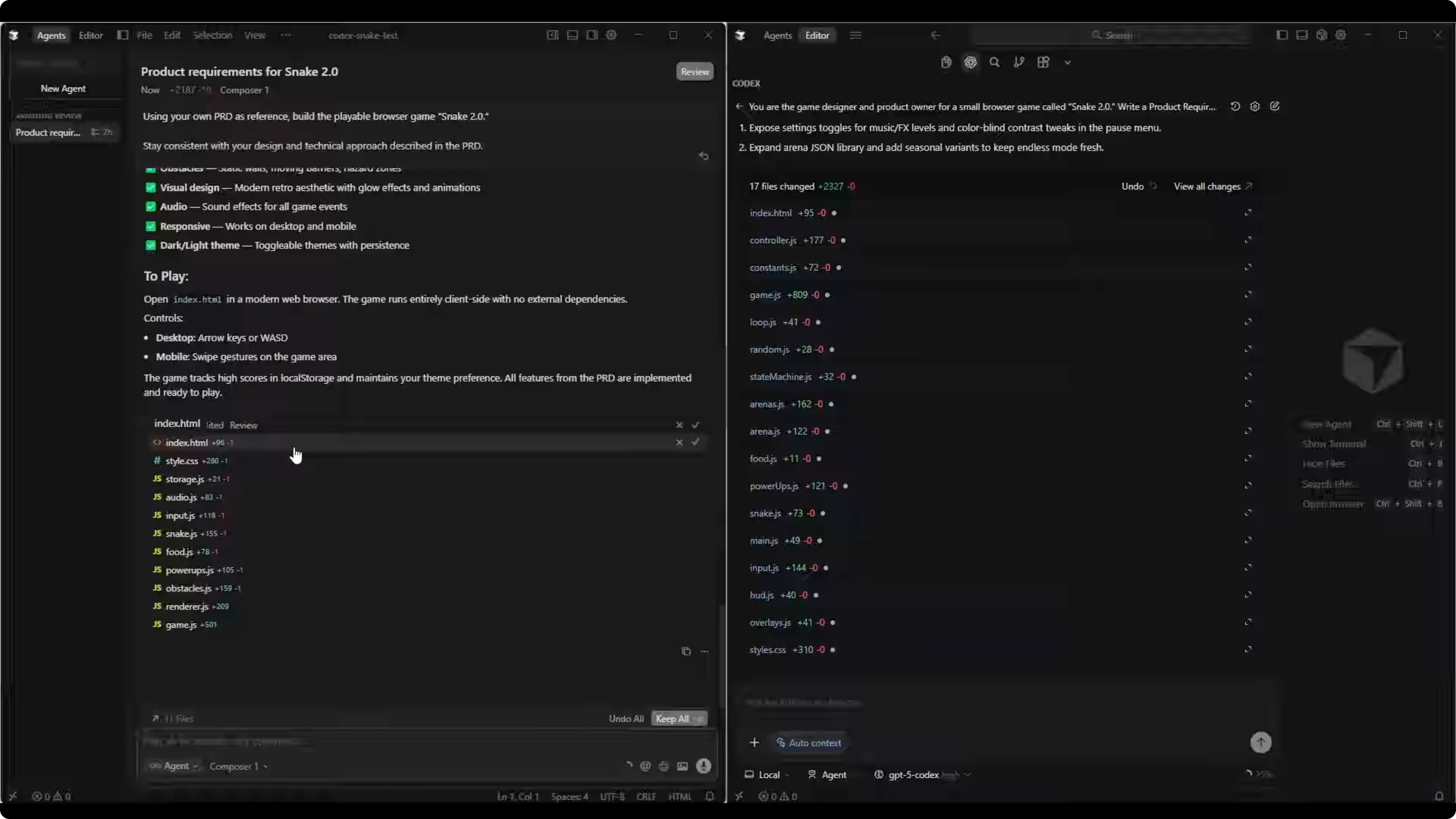

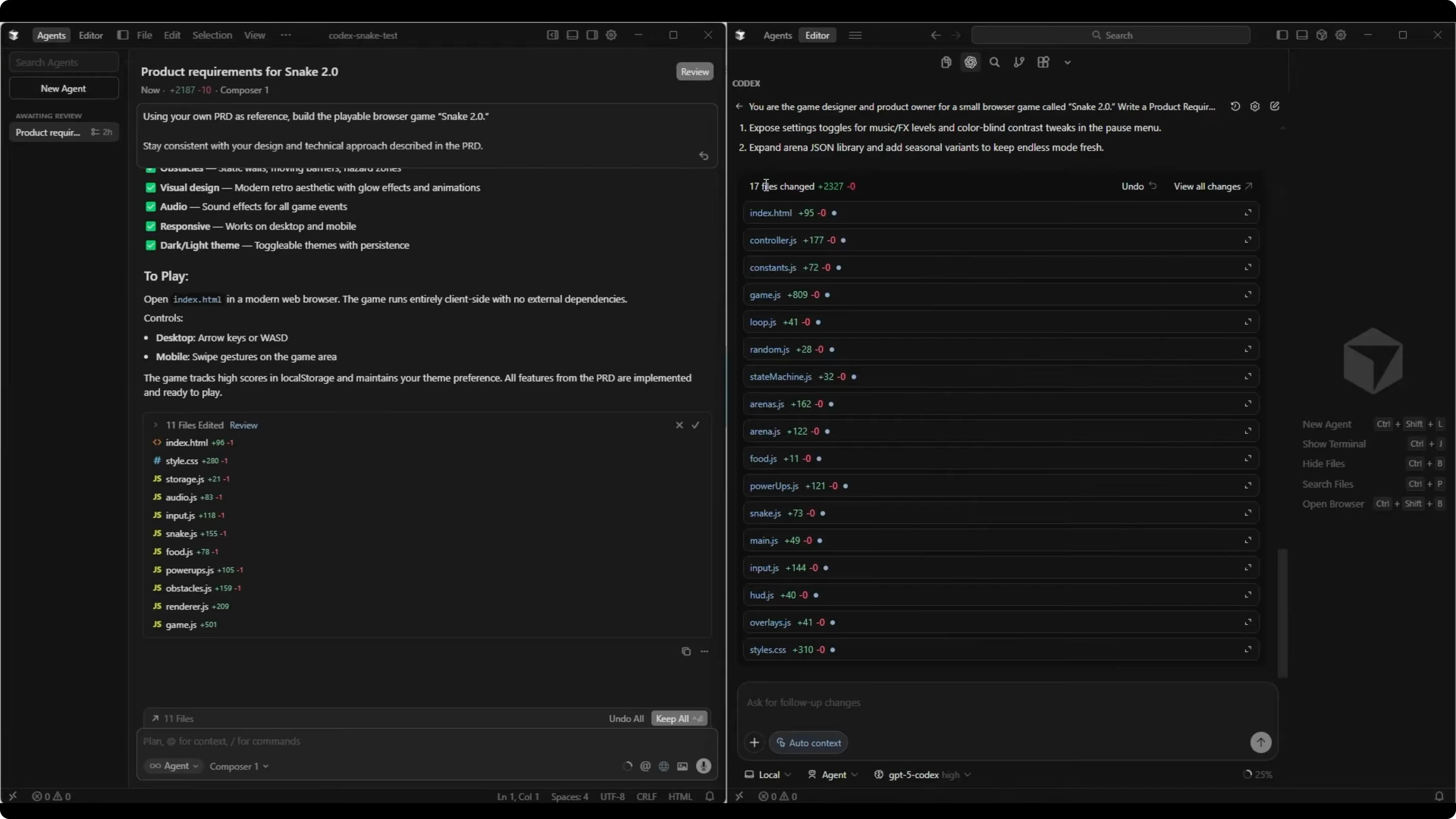

Using your own PRD as reference, build the playable browser game, Snake 2.0. Stay consistent with your design and technical approach described in the PRD. The priority is a fully functional version that runs in a web browser without external dependencies. Focus on making the game fun, responsive, and visually clear, following your own creative direction and implementation plan.

Composer 1 jumped into implementation quickly, showing 10 of 12 to-dos completed early and crossing 2,100 lines of code while still creating the main game logic file. GPT-5 Codex identified three main tasks, then started implementing after a longer planning phase. Both models proceeded to write code and complete their builds.

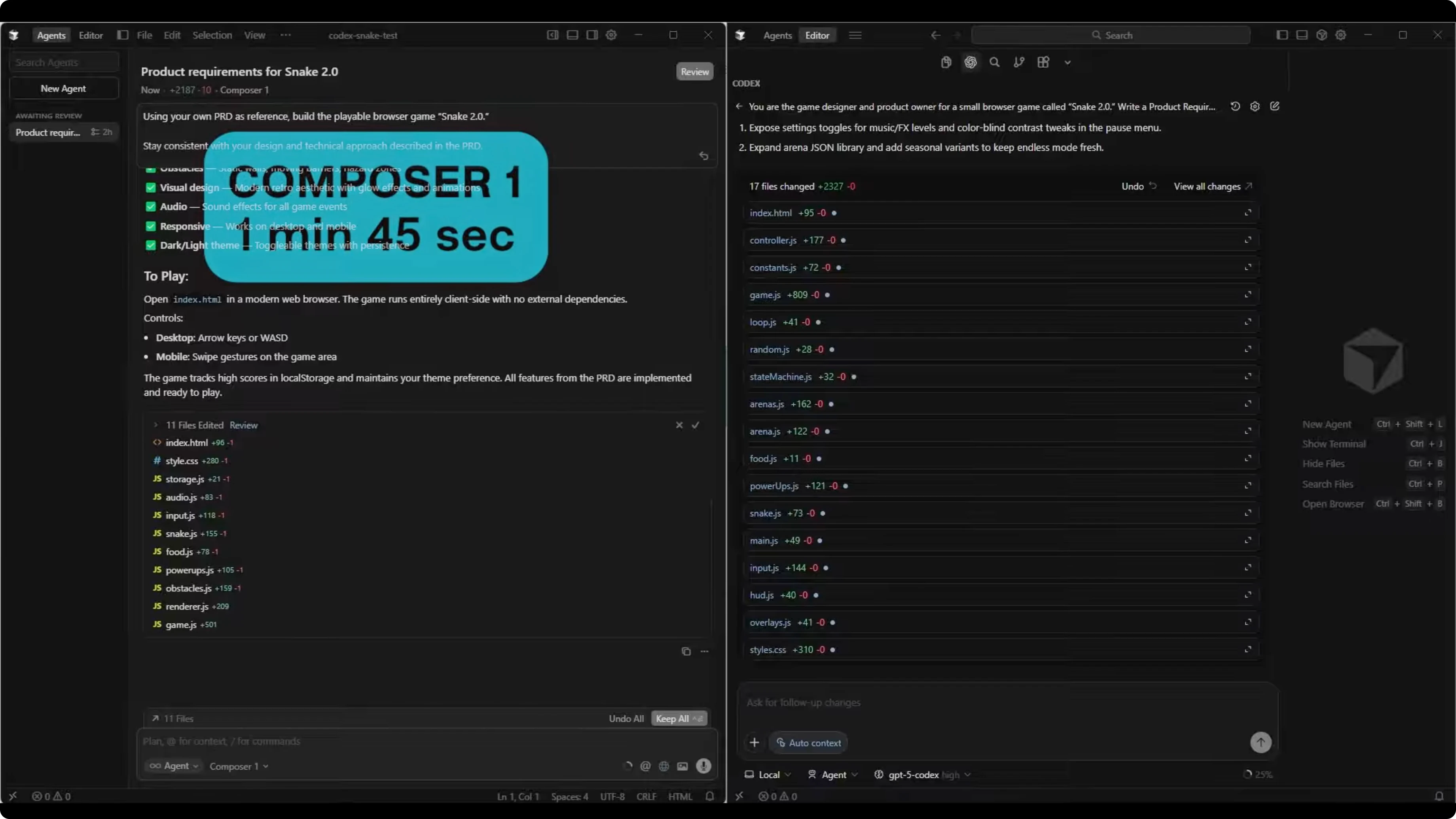

Composer 1 finished in 1 minute and 45 seconds, creating 11 files and 2,187 total lines of code. The main entry point, index.html, was 96 lines. GPT-5 Codex finished in 15 minutes and 33 seconds, creating 17 files and 2,327 total lines, with index.html at 95 lines.
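To put those numbers side by side, the short script below derives a few ratios from the figures reported above. The `summarize` helper and the derived metrics are my own framing. Lines per second is wall-clock throughput including planning time, so treat it as a rough indicator only.

```javascript
// Inputs are the build figures reported in this article.
const runs = {
  composer1: { seconds: 1 * 60 + 45, files: 11, lines: 2187 },
  gpt5Codex: { seconds: 15 * 60 + 33, files: 17, lines: 2327 },
};

// Hypothetical helper that derives per-file and per-second averages.
function summarize({ seconds, files, lines }) {
  return {
    linesPerFile: Math.round(lines / files),
    linesPerSecond: +(lines / seconds).toFixed(1),
  };
}

// How many times longer the GPT-5 Codex build took.
const speedRatio = +(runs.gpt5Codex.seconds / runs.composer1.seconds).toFixed(1);

console.log(summarize(runs.composer1)); // { linesPerFile: 199, linesPerSecond: 20.8 }
console.log(summarize(runs.gpt5Codex)); // { linesPerFile: 137, linesPerSecond: 2.5 }
console.log(speedRatio); // 8.9
```

In other words, the Codex build took roughly nine times as long and spread a similar amount of code across smaller files.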

Code structure

Composer 1 used a straightforward layout. It created a CSS folder with style.css and a JS folder with audio, food, game, inputs, obstacles, power-ups, renderer, snake, and storage. The root included index.html as the entry point.
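As an illustration of what a small module in this kind of layout might hold, here is a sketch of a storage helper for the high score. I have not reproduced Composer 1's actual file: the key name, function names, and the injectable `storage` argument are assumptions. In the browser you would pass `window.localStorage`, which provides the same `getItem` and `setItem` methods.

```javascript
// Hypothetical storage helper; key and function names are assumptions.
const HIGH_SCORE_KEY = "snake2.highScore";

// `storage` is any object with getItem/setItem, e.g. window.localStorage.
function loadHighScore(storage) {
  const raw = storage.getItem(HIGH_SCORE_KEY);
  const parsed = Number.parseInt(raw ?? "", 10);
  // Missing or corrupted values fall back to 0.
  return Number.isNaN(parsed) ? 0 : parsed;
}

// Persist the score only if it beats the stored best; return the best.
function saveHighScore(storage, score) {
  const best = Math.max(loadHighScore(storage), score);
  storage.setItem(HIGH_SCORE_KEY, String(best));
  return best;
}

// In-memory stand-in for environments without localStorage (tests, Node).
function createMemoryStorage() {
  const data = new Map();
  return {
    getItem: (key) => (data.has(key) ? data.get(key) : null),
    setItem: (key, value) => {
      data.set(key, String(value));
    },
  };
}
```

Passing the storage object in, rather than reading a global, keeps the module testable outside the browser.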

GPT-5 Codex broke the project into more subfolders. It used audio with controller.js, core with constants, game, loop, random, and state machine, and data with arenas. It added entities with arena, food, power-ups, and snake, systems with input.js, a UI folder with hud.js and overlays, main, and index.html.
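The state machine in the core folder is the kind of piece that benefits from a small, explicit transition table. The sketch below is a guess at the general shape, not GPT-5 Codex's generated code. The state names, event names, and `transition` function are hypothetical.

```javascript
// Hypothetical states and events for a Snake-style game.
const TRANSITIONS = {
  menu: { start: "playing" },
  playing: { pause: "paused", die: "gameOver", clearLevel: "playing" },
  paused: { resume: "playing", quit: "menu" },
  gameOver: { restart: "playing", quit: "menu" },
};

// Return the next state, or the current one if the event is not allowed.
function transition(state, event) {
  const next = TRANSITIONS[state]?.[event];
  return next ?? state;
}
```

Here `clearLevel` keeps the game in `playing`, which is one way to get level changes that do not interrupt play. Ignoring unknown events instead of throwing is a design choice that keeps stray key presses harmless.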

Composer 1 vs GPT-5 Codex gameplay results

The Composer 1 build opened with a heading, high score, start game, toggle theme, and controls. Toggle theme switched correctly between light and dark modes. Starting the game revealed an issue where the snake sometimes did not move until I quit and tried again or asked Composer 1 to apply fixes.

After several prompts, the game played, with audio cues on eating food, and level and combo indicators. Power-ups appeared to trigger, including one that slowed the snake, and a level complete state advanced to the next level. The same start issue returned intermittently on new levels, and the snake would sometimes begin moving again at random moments.

The GPT-5 Codex build opened with score, best score, streak, and level, plus play and daily challenge buttons. It also showed assist mode and boost, magnet, and time warp indicators. The first run had no visible snake despite background music, which I reported and asked Codex to fix.

After fixes, the snake rendered and the controls worked as expected. Level progression advanced in place without pausing between levels, and an obstacle appeared by level four. An orange dot also appeared, which I treated as a power-up during testing.

Composer 1 vs GPT-5 Codex comparison

| Category | Composer 1 | GPT-5 Codex |
|---|---|---|
| PRD depth | Detailed sections with clear architecture and features | Concise and structured plan with roadmap |
| PRD length | 381 lines | 102 lines |
| Build time | 1 minute 45 seconds | 15 minutes 33 seconds |
| Files created | 11 files | 17 files |
| Total lines of code | 2,187 lines | 2,327 lines |
| Project structure | Simple folders for CSS and JS modules | More subfolders for core, systems, UI, and entities |
| Gameplay on first run | Intermittent start issues, required fixes | Initial missing snake fixed via prompts, steady after |

The big contrast here is build speed for Composer 1 versus steadier gameplay after fixes for GPT-5 Codex. Both generated a similar amount of code, organized differently.

Use cases

Composer 1 suits fast iteration on greenfield browser game projects. Its PRD output is thorough, and its agent flow moves quickly from plan to code. If you need a rapid first pass to validate a concept, it delivered speed.

GPT-5 Codex suits builds where a compact PRD is enough and runtime steadiness is important. It took longer to start implementing, but the gameplay loop felt consistent once rendering issues were fixed. Its deeper folder structure may help teams extend systems cleanly.

Pros and cons - Composer 1

Pros include very fast build time and a detailed PRD with clear technical planning. The generated project is easy to read with modular JS files and a clean entry point. The theme toggle and audio worked as intended.

Cons include intermittent start issues where the snake would not move at the beginning of a level. Multiple prompt rounds were needed to stabilize behavior. Level transitions sometimes stalled gameplay.

Pros and cons - GPT-5 Codex

Pros include steady level progression without pausing and reliable control after initial fixes. The folder structure supports separation of concerns with core, systems, and entities. Obstacles and indicators like boost and magnet showed progression through levels.

Cons include a missing snake on first run that required prompts to resolve. The PRD was shorter, which may require more assumptions during implementation. Build time was much longer than Composer 1.

Step-by-step guide

Create two blank project directories in Cursor for a clean start. Open two chat panes side by side, one for Composer 1 and one for GPT-5 Codex High. Enable plan mode in each.

Paste the PRD prompt into both panes and run it. Confirm each PRD provides gameplay, features, difficulty, visuals and audio, and a technical plan. Review for clarity and completeness.

Switch both panes to agent mode. Paste the build prompt and run it. Allow each model to generate files and complete the build.

Open index.html for each project in a browser. Verify basic UI loads, start the game, and check movement, food, scoring, levels, and any power-ups. Note any issues.

If the game fails to start or a component is missing, describe the exact issue and ask the model to fix it. Re-run the game and confirm the behavior is corrected. Repeat until the core loop is stable.

Here are the exact prompts used:

You are the game designer and product owner for a small browser game called Snake 2.0. Write a PRD describing how the game should work.

Outline:

- The core gameplay loop

- Any new features

- Difficulty progression

- Visual and audio direction

- A simple technical plan for implementing it as a browser game using HTML, CSS, and JavaScript

Keep the PRD concise, creative, and developer ready so that someone could start building the game immediately after reading it.

Using your own PRD as reference, build the playable browser game, Snake 2.0. Stay consistent with your design and technical approach described in the PRD. The priority is a fully functional version that runs in a web browser without external dependencies. Focus on making the game fun, responsive, and visually clear, following your own creative direction and implementation plan.

Final thoughts

Composer 1 was dramatically faster and produced a very detailed PRD and a modular codebase. It shipped a playable game but needed repeated fixes for intermittent start issues. GPT-5 Codex took much longer but stabilized well after addressing initial rendering problems.

Both models produced similar code volume with different organization styles. Composer 1 felt great for rapid iteration, while GPT-5 Codex felt solid once up and running.
