Table of Contents

- Setup for Opus 4.5 vs GPT-5.1 Codex vs Gemini 3 Pro

- Build times and process

- Code structure overview

- Gemini 3 Pro results

- GPT-5.1 Codex results

- Opus 4.5 results

- Comparison overview table

- Use cases by model

- Pros and cons for Gemini 3 Pro

- Pros and cons for GPT-5.1 Codex Max

- Pros and cons for Claude Opus 4.5

- Recreate the test

- Final thoughts

Opus 4.5 vs GPT-5.1 Codex vs Gemini 3 Pro

It’s been a massive week in AI. Three major models have dropped: Claude Opus 4.5, Gemini 3 Pro, and GPT-5.1 Codex Max. Benchmarks only tell part of the story, so I had all three build the same complete productivity app from scratch to see how they perform in practice.

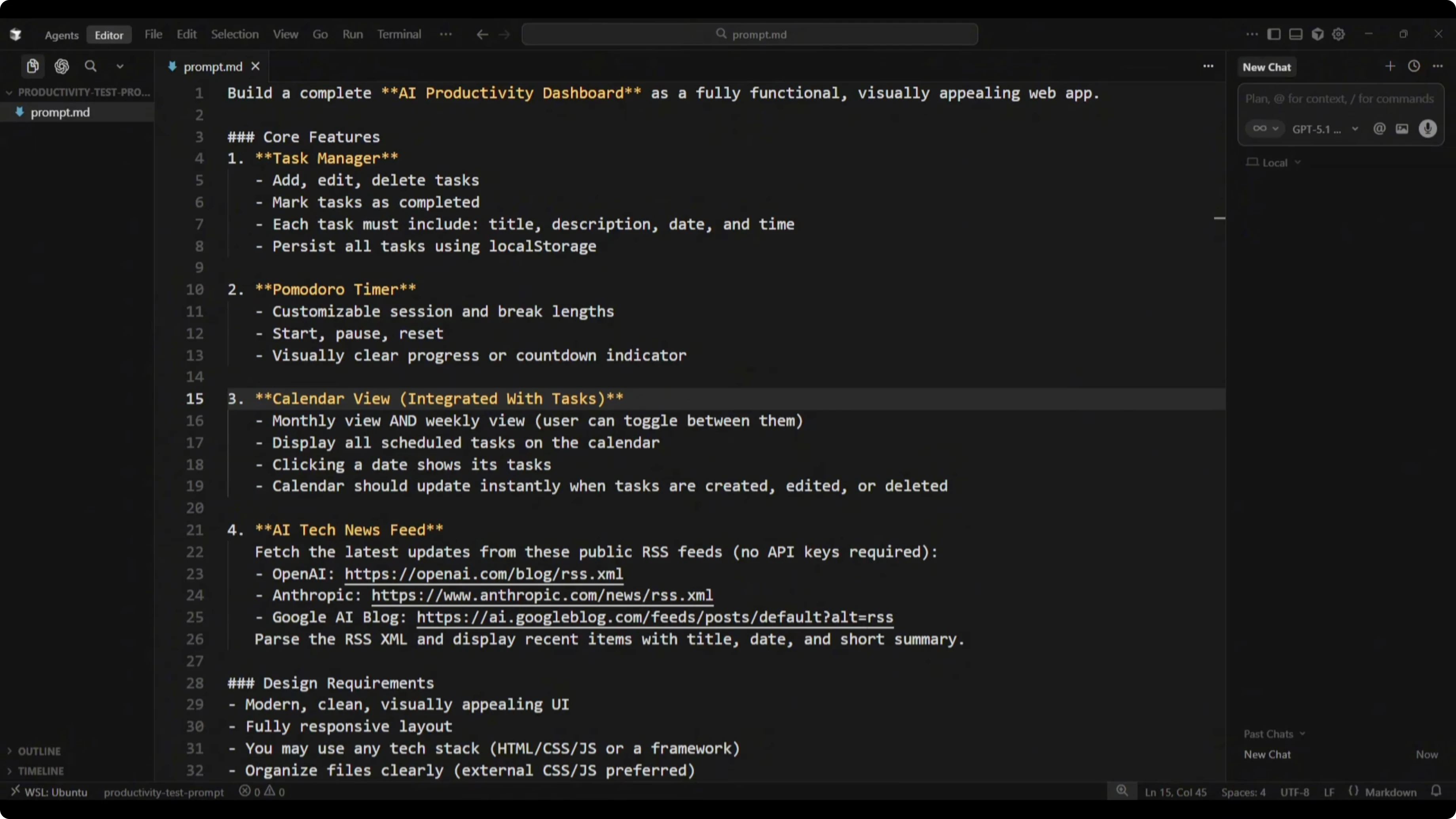

The brief was clear. Build a fully functional, visually appealing web app with four core features: a task manager with add, edit, and delete; a Pomodoro timer; a calendar with weekly and monthly views, integrated with tasks and due dates; and an AI tech news feed built from public RSS feeds for OpenAI, Anthropic, and Google AI. The UI needed to be modern and clean, any tech stack was allowed, and I wanted the full working code so I could run and test each build.

This test pushes the major skills a coding model needs: UI and UX design, front-end architecture, state management, date and time logic, calendar rendering, task scheduling, async API fetching, XML to JSON parsing, error handling, local storage, and overall code quality and structure.

Setup for Opus 4.5 vs GPT-5.1 Codex vs Gemini 3 Pro

I set up split workspaces in Cursor so each model could build in its own empty directory. Opus 4.5 ran in Cursor Chat on the left, GPT-5.1 Codex Max used the Codex extension with high reasoning mode in the middle, and Gemini 3 Pro ran in Cursor Chat on the right.

Each model received the exact same prompt and identical project conditions. I measured build times, inspected structure, and then launched each in dev mode to test feature-by-feature.

Build times and process

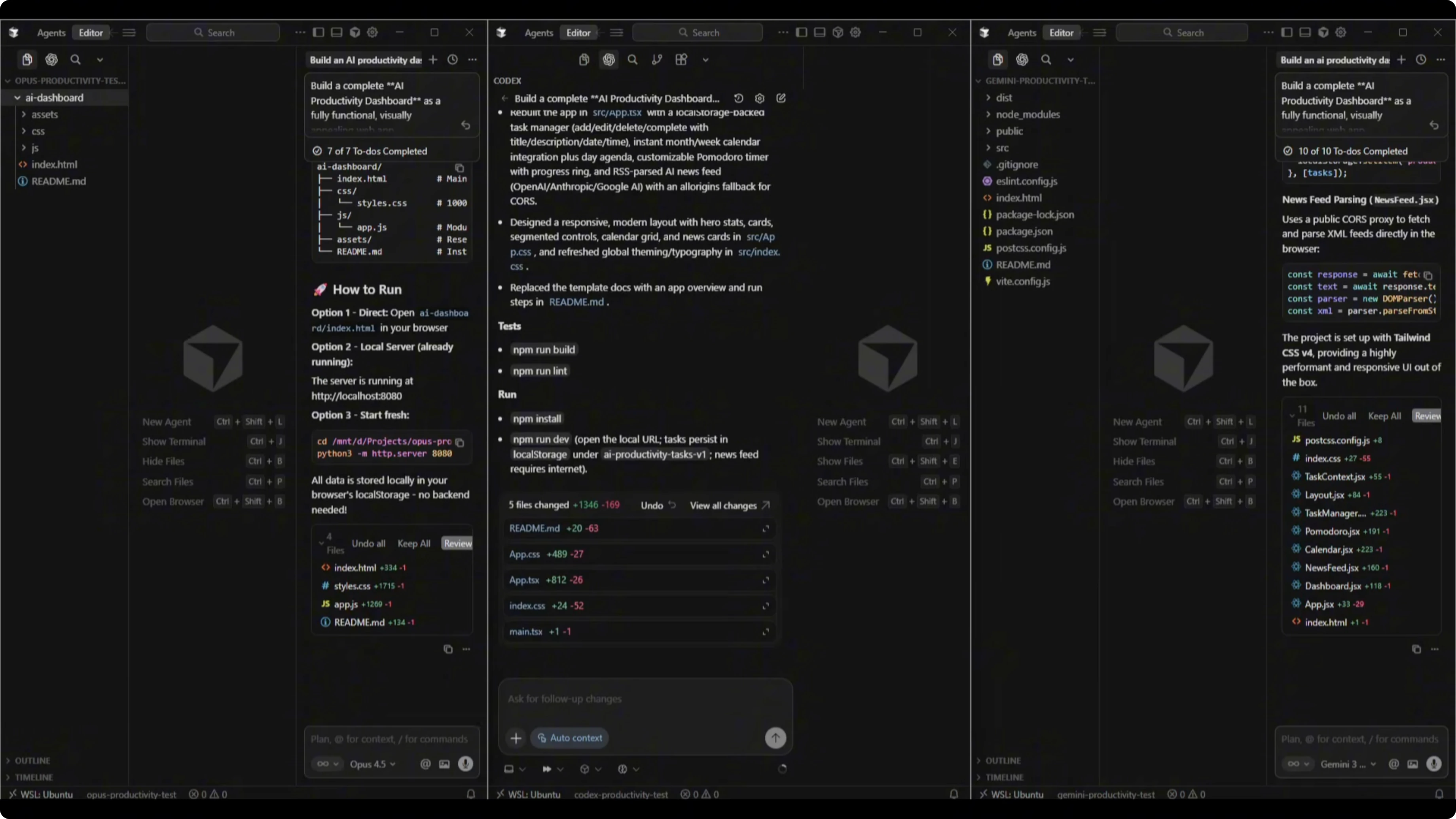

All three models finished the build process. Gemini 3 Pro was the quickest at 7 minutes and 29 seconds.

GPT-5.1 Codex Max finished in 8 minutes and 58 seconds. Claude Opus 4.5 took 12 minutes and 2 seconds.

Opus 4.5 spent extra time on thorough, in-depth testing. It opened browser mode automatically, launched the app in dev mode, tested every feature, and even captured screenshots, including the AI tech news feed sourced from RSS. That additional validation likely accounts for most of the gap: Opus 4.5 finished 3 minutes 4 seconds after GPT-5.1 Codex Max and 4 minutes 33 seconds after Gemini 3 Pro.
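For anyone who wants to check the gaps between build times, here is a minimal Python sketch that works them out from the three times reported above. The function names are my own and purely illustrative.

```python
# Build times as reported in this article, as (minutes, seconds).
BUILD_TIMES = {
    "Gemini 3 Pro": (7, 29),
    "GPT-5.1 Codex Max": (8, 58),
    "Claude Opus 4.5": (12, 2),
}


def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a (minutes, seconds) pair into total seconds."""
    return minutes * 60 + seconds


def format_gap(total_seconds: int) -> str:
    """Format a non-negative number of seconds as m:ss."""
    minutes, seconds = divmod(total_seconds, 60)
    return f"{minutes}:{seconds:02d}"


def gaps_to(model: str) -> dict:
    """How much longer `model` took than each other model.

    Intended for the slowest model, so every gap is non-negative.
    """
    base = to_seconds(*BUILD_TIMES[model])
    return {
        other: format_gap(base - to_seconds(*time))
        for other, time in BUILD_TIMES.items()
        if other != model
    }


print(gaps_to("Claude Opus 4.5"))
# {'Gemini 3 Pro': '4:33', 'GPT-5.1 Codex Max': '3:04'}
```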

Code structure overview

Opus 4.5 produced a clearly larger codebase and documented its work. It created fewer files but far more lines of code than the others.

GPT-5.1 Codex Max created a moderate number of files with a smaller footprint than Opus. Gemini 3 Pro created the most files but a smaller overall codebase.

The standout during the build process was Opus 4.5’s testing discipline. It validated features end-to-end before handing results back.

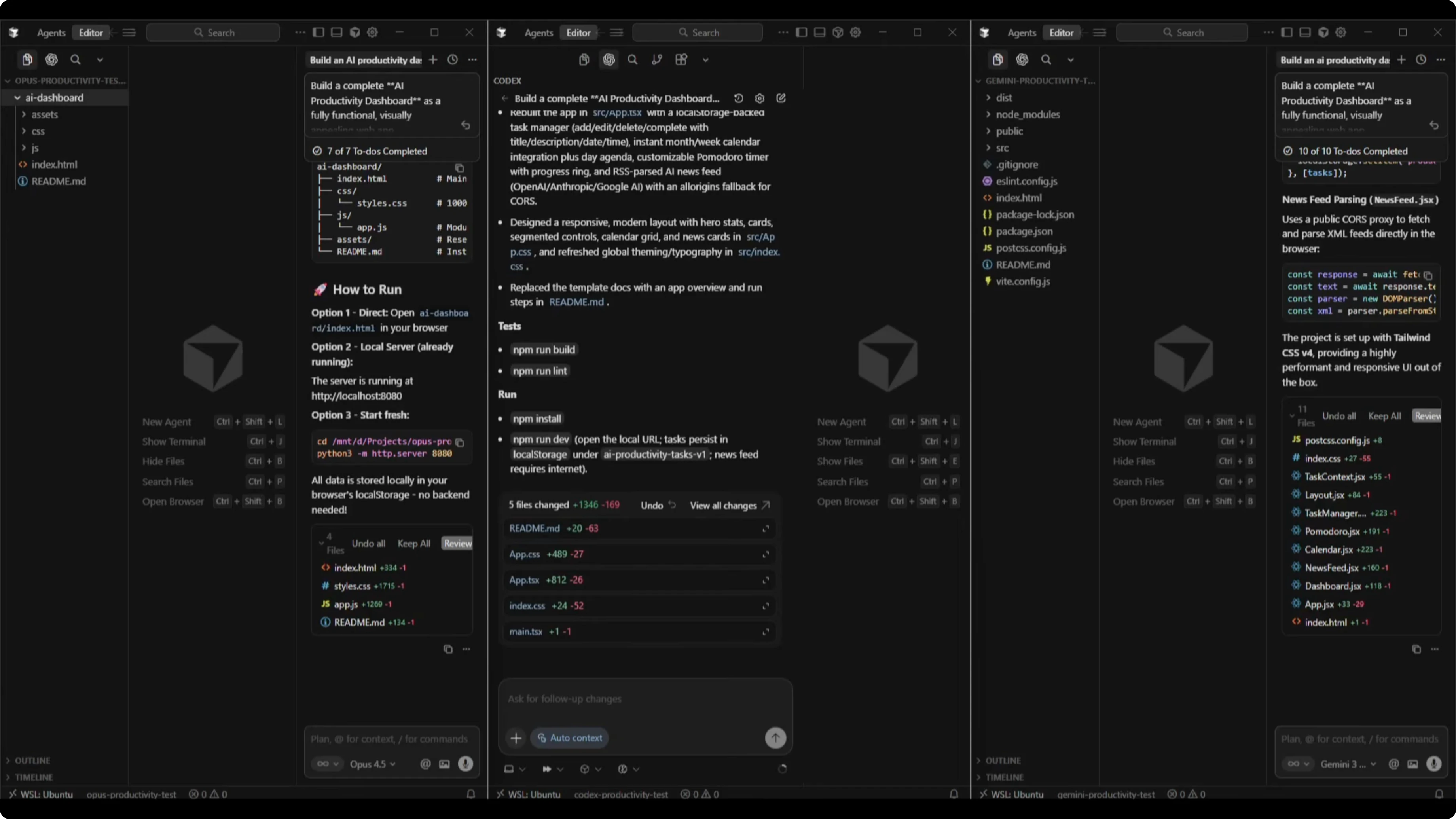

Gemini 3 Pro results

The Gemini 3 Pro dashboard showed a productivity tip in the bottom right. It did not implement the AI news feed from RSS and instead displayed a static message.

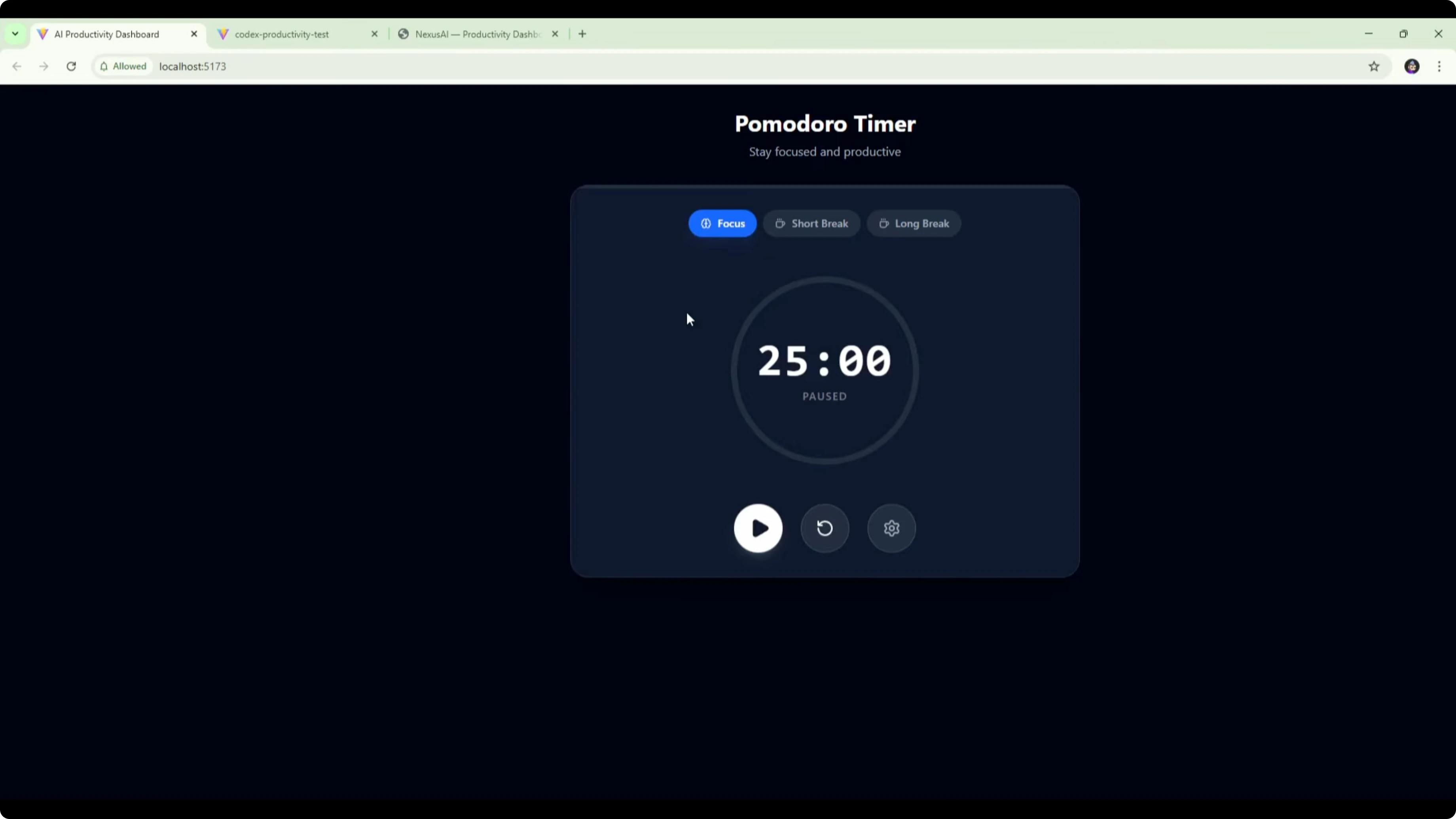

The Pomodoro timer worked with focus, short break, and long break modes, plus settings to adjust times. The circular progress indicator updated correctly, start and pause worked, and changing durations behaved as expected, though there was no back button from the timer screen.
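To make "the circular progress indicator updated correctly" concrete, here is a rough Python sketch of the math a progress ring usually relies on. It is not taken from any of the three builds. The default durations are the conventional Pomodoro values and are an assumption, as are the function names. The offset formula assumes an SVG-style ring whose stroke dash length equals its circumference.

```python
import math

# Conventional Pomodoro defaults in minutes (an assumption; the builds may differ).
DEFAULT_MINUTES = {"focus": 25, "short_break": 5, "long_break": 15}


def progress_fraction(total_seconds: int, remaining_seconds: int) -> float:
    """Fraction of the session that has elapsed, clamped to [0, 1]."""
    if total_seconds <= 0:
        return 0.0
    elapsed = total_seconds - remaining_seconds
    return min(1.0, max(0.0, elapsed / total_seconds))


def ring_dash_offset(radius: float, fraction: float) -> float:
    """Dash offset for a ring that fills as `fraction` goes from 0 to 1.

    At fraction 0 the offset equals the full circumference (empty ring);
    at fraction 1 it is 0 (full ring).
    """
    circumference = 2 * math.pi * radius
    return circumference * (1.0 - fraction)


total = DEFAULT_MINUTES["focus"] * 60
halfway = progress_fraction(total, total // 2)
print(halfway, ring_dash_offset(50, halfway))
```

When the user changes a duration in settings, only `total_seconds` changes. Recomputing the fraction from the new total keeps the ring consistent with the countdown.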

Tasks could be added and edited, and manual date entry worked, but there was no date picker modal. The calendar was implemented as a daily schedule only, not the requested weekly and monthly views, although today’s tasks updated and completion stats reflected changes.

GPT-5.1 Codex results

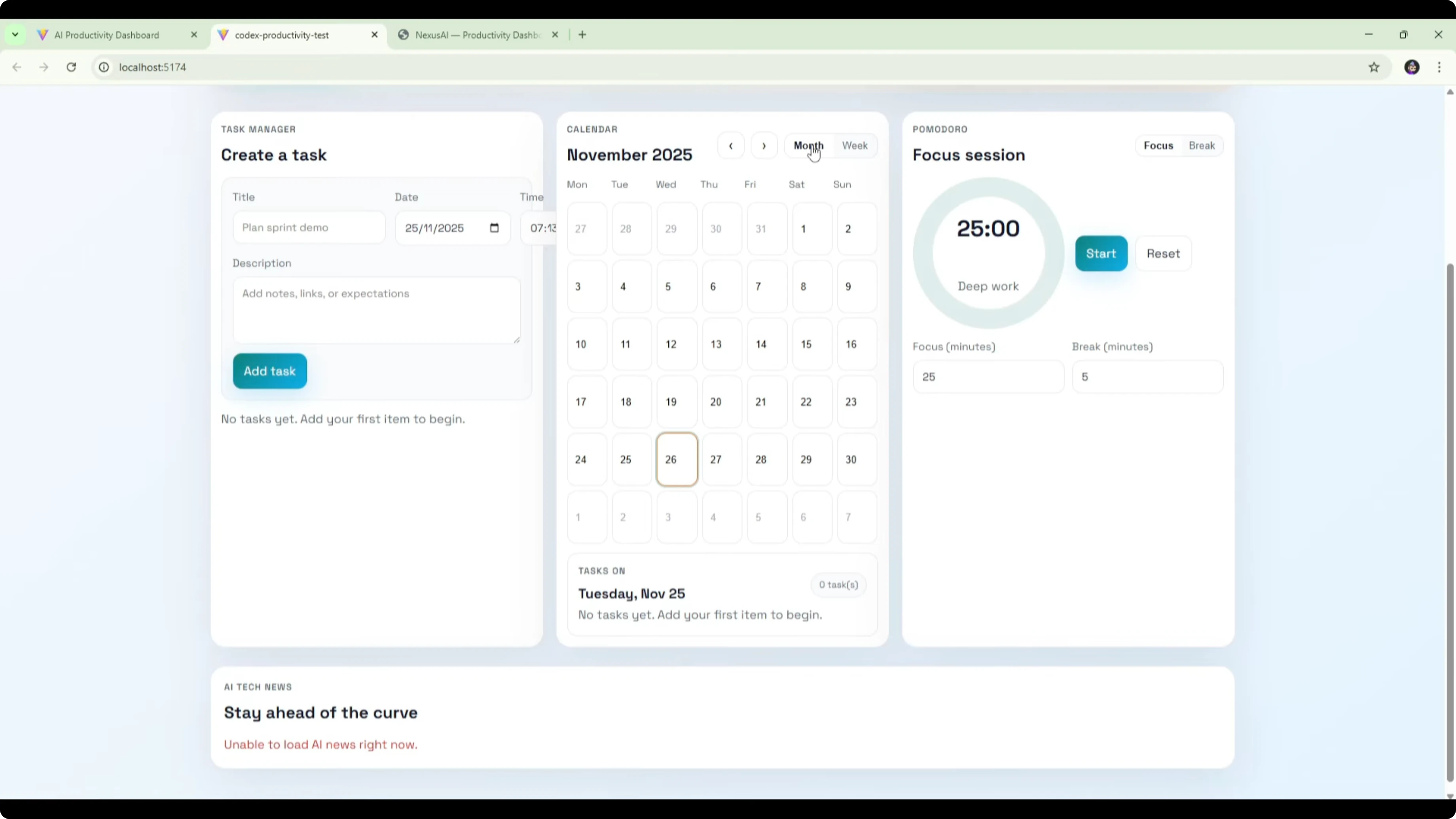

The GPT-5.1 Codex Max dashboard opened with a headline, action buttons, and daily momentum stats. The layout showed some rendering issues, such as a time field sitting slightly outside its container.

The calendar supported both monthly and weekly views as requested, and daily tasks populated correctly. The task manager integrated with the calendar, supported editing and deleting, and allowed date selection with a proper calendar picker.
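As an illustration of what monthly and weekly views integrated with task due dates involve, here is a small Python sketch built on the standard `calendar` module. It is not the code any model generated. The task records, field names, and function names are hypothetical, and weeks are assumed to start on Monday.

```python
import calendar
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical task records; the field names are assumptions for illustration.
TASKS = [
    {"title": "Write report", "due": date(2025, 11, 24)},
    {"title": "Review PR", "due": date(2025, 11, 27)},
    {"title": "Plan sprint", "due": date(2025, 12, 1)},
]


def tasks_by_day(tasks):
    """Group task titles by their due date."""
    grouped = defaultdict(list)
    for task in tasks:
        grouped[task["due"]].append(task["title"])
    return grouped


def month_view(year, month, tasks):
    """Rows of seven (date, titles) cells covering the month, Monday first.

    Leading and trailing cells may belong to the neighbouring months.
    """
    grouped = tasks_by_day(tasks)
    weeks = calendar.Calendar(firstweekday=0).monthdatescalendar(year, month)
    return [[(day, grouped.get(day, [])) for day in week] for week in weeks]


def week_view(anchor, tasks):
    """Seven (date, titles) cells for the Monday-first week containing `anchor`."""
    grouped = tasks_by_day(tasks)
    monday = anchor - timedelta(days=anchor.weekday())
    days = [monday + timedelta(days=offset) for offset in range(7)]
    return [(day, grouped.get(day, [])) for day in days]


for day, titles in week_view(date(2025, 11, 26), TASKS):
    print(day.isoformat(), titles)
```

Both views read from the same grouping by due date, which is what keeps the calendar and the task manager in sync when a task is edited.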

The Pomodoro timer worked with focus and break modes, configurable times, and a circular progress indicator. A bug appeared where pause reset the timer instead of pausing, so that needs fixing.
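The difference between pause and reset is easy to state in code. The sketch below is a hypothetical Python model of the intended behavior, not the Codex build's actual implementation, which I have not reproduced here. It uses an explicit `tick` method instead of a real clock so the logic can be checked deterministically. The class and method names are assumptions.

```python
from dataclasses import dataclass


@dataclass
class PomodoroTimer:
    duration: int          # configured session length in seconds
    remaining: int = 0     # seconds left in the current session
    running: bool = False

    def __post_init__(self):
        # A fresh timer starts with the full duration left.
        self.remaining = self.duration

    def start(self):
        """Start or resume counting down from whatever time is left."""
        if self.remaining > 0:
            self.running = True

    def pause(self):
        """Stop counting down but keep `remaining` untouched."""
        self.running = False

    def reset(self):
        """Stop and restore the full configured duration."""
        self.running = False
        self.remaining = self.duration

    def tick(self, seconds: int = 1):
        """Advance the clock; has no effect while paused."""
        if not self.running:
            return
        self.remaining = max(0, self.remaining - seconds)
        if self.remaining == 0:
            self.running = False


timer = PomodoroTimer(duration=25 * 60)
timer.start()
timer.tick(60)
timer.pause()
print(timer.remaining)  # 1440: one minute elapsed, and pause kept the rest
```

The symptom described above is what you would see if the pause handler also restored `remaining` to `duration`, which is the job of `reset` alone.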

The AI Tech News section showed an error loading items. I will check if the RSS feed links were the cause before judging that piece.

Opus 4.5 results

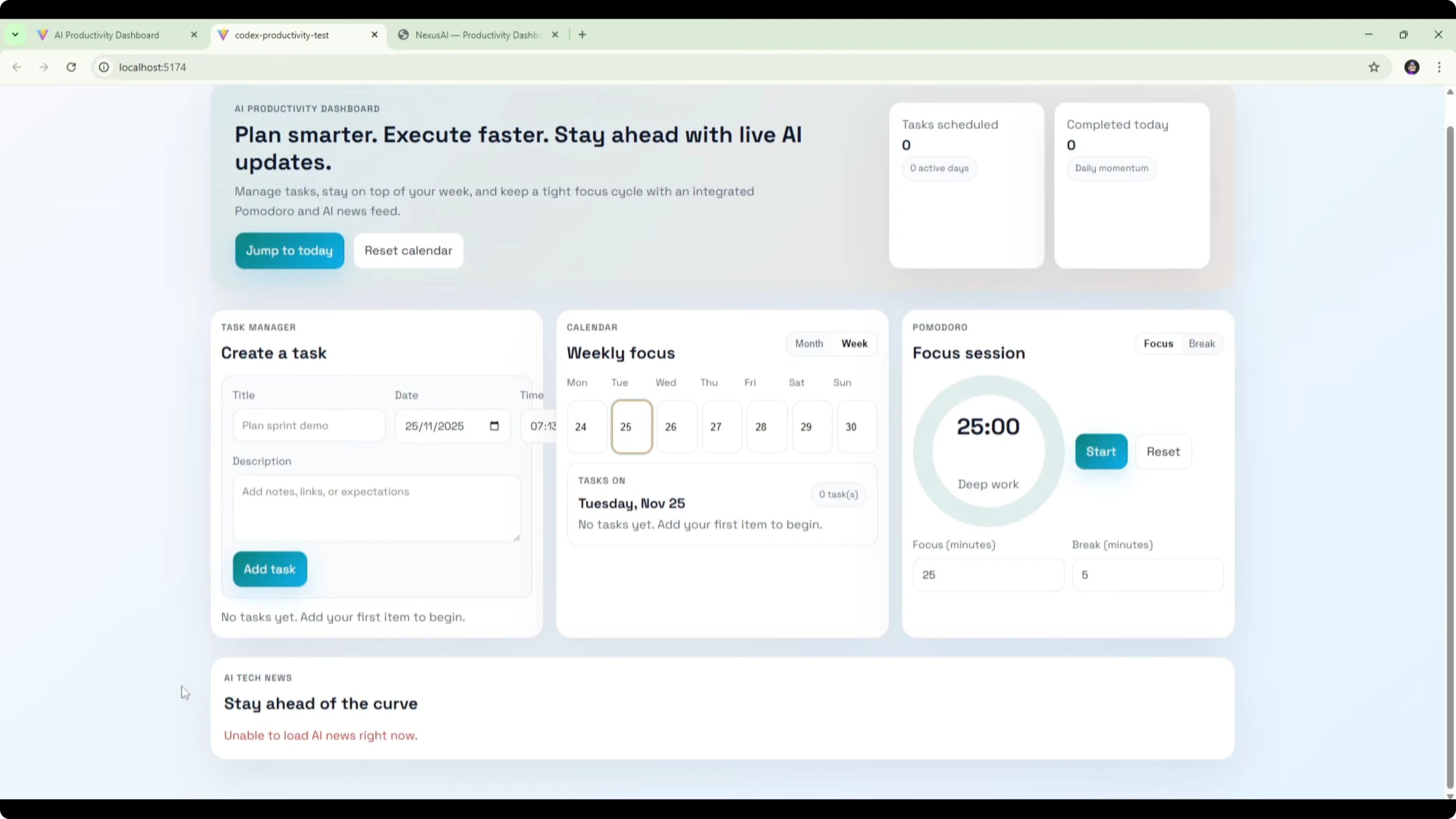

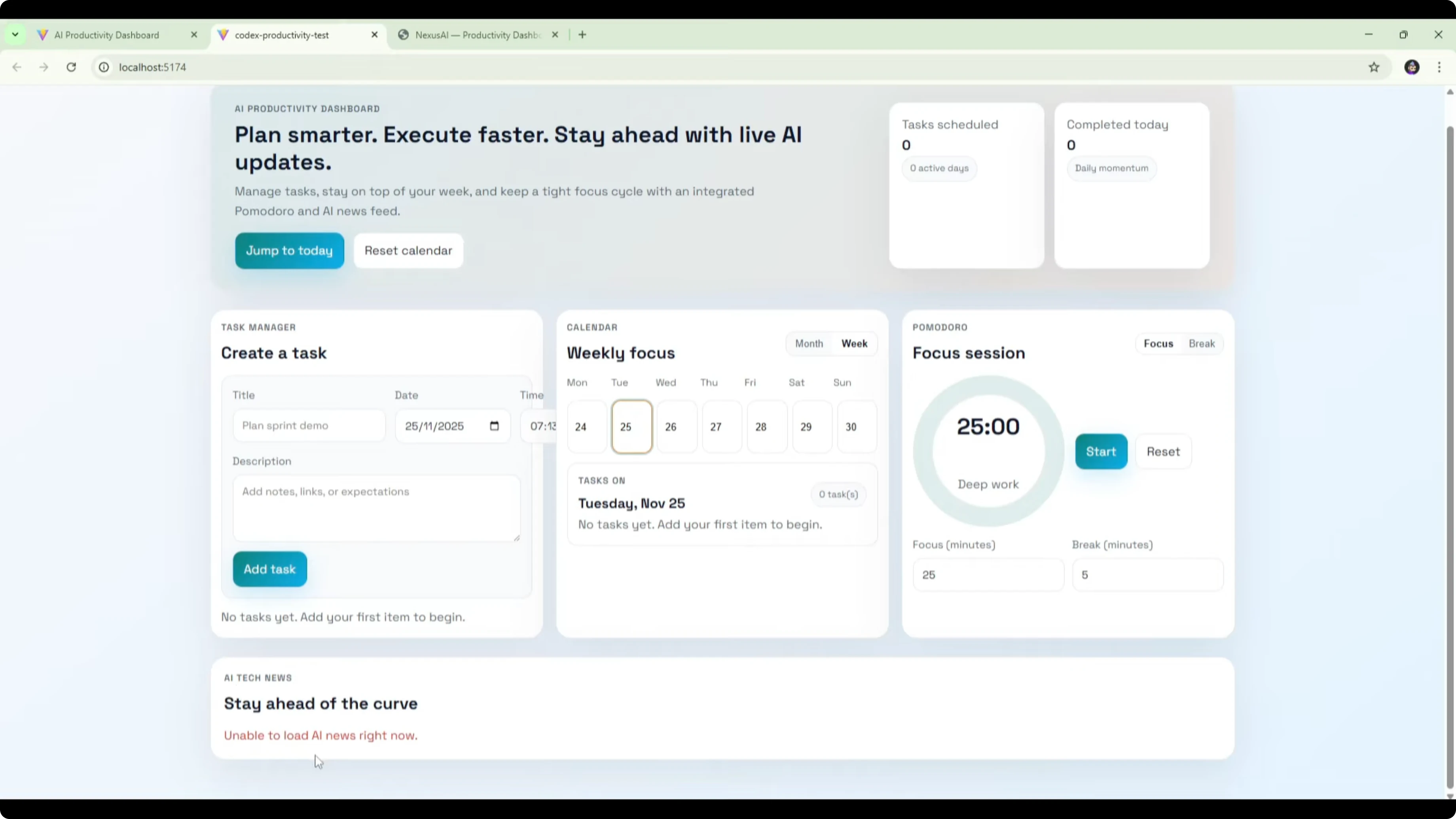

Opus 4.5 named the app Nexus AI. The main dashboard included a live clock, a task section in the center, a Pomodoro timer on the right, and today’s schedule at the bottom, with Calendar and AI News as top-level sections.

The Pomodoro timer worked as designed. Start, pause, reset, and mode switching all behaved correctly, and the circular progress tracked time accurately.

The calendar supported monthly and weekly views and lived in its own section, separate from the main dashboard, which made navigation clearer. Opus 4.5 also validated features by launching dev mode and capturing screenshots, including the AI news feed populated from RSS.

Comparison overview table

| Model | Build time (min:sec) | UI quality | Pomodoro timer | Calendar view | Task manager | AI news feed | Testing behavior | Notable issues |
|---|---|---|---|---|---|---|---|---|
| Gemini 3 Pro | 7:29 | Clean but basic | Works with modes, settings, progress | Daily only | Add, edit, delete, manual date entry | Not implemented, showed a tip | No reported test logs | No back button from timer, no weekly or monthly views |
| GPT-5.1 Codex Max | 8:58 | Polished with minor layout issues | Start and reset work, pause resets the timer (bug) | Monthly and weekly implemented | Integrated with calendar, proper date picker | Failed to load during test, cause to be investigated | No reported test logs | Pause bug, formatting issues, completed items not separated |
| Claude Opus 4.5 | 12:02 | Best looking of the three | Works as expected with accurate progress | Monthly and weekly, separated section | Integrated and validated in testing | Implemented and validated via screenshots | Launched dev, tested all features, documented | Slowest due to thorough testing |

Use cases by model

Gemini 3 Pro is a strong pick when you need a working prototype very quickly. It got the core timer and task manager up and running the fastest and handled completion stats accurately.

GPT-5.1 Codex Max is suited for broader feature coverage with calendar depth. It implemented weekly and monthly views cleanly and integrated the task manager well.

Claude Opus 4.5 fits best when reliability and end-to-end validation matter. It tested what it built, documented results, and shipped the most refined UI with working timer logic and implemented RSS.

Pros and cons for Gemini 3 Pro

Pros: Fastest build time. Pomodoro timer worked with modes, settings, and visual progress. Task manager updates reflected accurately in completion stats.

Cons: Calendar limited to a daily view, not the requested weekly and monthly. RSS news feed replaced with a static productivity tip. No back button from the timer screen and no date picker modal.

Pros and cons for GPT-5.1 Codex Max

Pros: Implemented monthly and weekly calendar views as requested. Task manager integrated with the calendar and offered a date picker. UI quality was a step up over Gemini.

Cons: Pomodoro pause reset the timer due to a bug. Formatting issues like a time field overflowing its container. AI Tech News failed to load in testing and needs link verification. Completed tasks did not move to a separate bucket.

Pros and cons for Claude Opus 4.5

Pros: Best overall UI design and clarity. Pomodoro timer worked flawlessly with accurate progress and controls. Thorough self-testing in dev mode, with screenshots and validation of features including RSS.

Cons: Slowest build time, likely due to comprehensive testing. Larger codebase than others, which could add overhead in small projects.

Recreate the test

Set up three empty project directories in Cursor, each bound to a different model session.

Provide the same prompt to each model: build a complete AI productivity dashboard with a task manager, a Pomodoro timer, a calendar with weekly and monthly views integrated with task due dates, and an AI tech news feed from the OpenAI, Anthropic, and Google AI RSS feeds.

Allow each model to plan and generate the full codebase. Record build times from prompt submission to completion.

Launch each build in dev mode. Validate timer start, pause, reset, and mode switching with visual progress.

Add, edit, and delete tasks with due dates. Refresh to confirm persistence and check completion metrics.
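The persistence check can also be scripted. Here is a minimal Python sketch in which a JSON file stands in for the browser's local storage. The `TaskStore` class, its methods, and the record fields are all hypothetical and do not come from any of the three builds. "Refresh" is simulated by constructing a second store from the same file.

```python
import json
from pathlib import Path


class TaskStore:
    """Tiny task store that saves to a JSON file after every change."""

    def __init__(self, path):
        self.path = Path(path)
        # Load existing tasks if the file is already there, else start empty.
        self.tasks = json.loads(self.path.read_text()) if self.path.exists() else []

    def _save(self):
        self.path.write_text(json.dumps(self.tasks))

    def add(self, title, due):
        """Add a task with an ISO date string as its due date; return its id."""
        task_id = max((task["id"] for task in self.tasks), default=0) + 1
        self.tasks.append({"id": task_id, "title": title, "due": due, "done": False})
        self._save()
        return task_id

    def edit(self, task_id, **changes):
        """Update fields on the task with the given id, if it exists."""
        for task in self.tasks:
            if task["id"] == task_id:
                task.update(changes)
        self._save()

    def delete(self, task_id):
        self.tasks = [task for task in self.tasks if task["id"] != task_id]
        self._save()

    def completion_rate(self):
        """Share of tasks marked done, or 0.0 when there are no tasks."""
        if not self.tasks:
            return 0.0
        return sum(task["done"] for task in self.tasks) / len(self.tasks)
```

A check along these lines would add two tasks, mark one done, delete the other, then build a fresh `TaskStore` on the same path. It would confirm that the surviving task and its completion state come back unchanged.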

Verify the calendar shows tasks on the correct dates. Toggle monthly, weekly, and daily views as applicable.

Test the AI news feed endpoint by fetching the RSS feeds, parsing XML to JSON, and rendering headlines. Confirm error handling for network or parsing failures.
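For the feed check, a rough Python sketch using only the standard library might look like the following. It assumes RSS 2.0 feeds with `<item>` elements; Atom feeds use `<entry>` and would need different handling. The function names and the shape of the result dictionary are my own assumptions. The feed URLs are deliberately left out because the article does not list the ones each build used.

```python
import json
import urllib.request
import xml.etree.ElementTree as ET

# Inline sample so the parser can be exercised without network access.
SAMPLE = (
    "<rss><channel><item>"
    "<title> Hello </title>"
    "<link>https://example.com/a</link>"
    "<pubDate>Mon, 24 Nov 2025 00:00:00 GMT</pubDate>"
    "</item></channel></rss>"
)


def parse_rss(xml_text, limit=5):
    """Convert RSS 2.0 <item> elements into JSON-friendly dicts."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        return {"ok": False, "error": f"parse error: {exc}", "items": []}
    items = []
    for item in root.iter("item"):
        items.append({
            "title": (item.findtext("title") or "").strip(),
            "link": (item.findtext("link") or "").strip(),
            "published": (item.findtext("pubDate") or "").strip(),
        })
        if len(items) >= limit:
            break
    return {"ok": True, "error": None, "items": items}


def fetch_feed(url, timeout=10):
    """Fetch and parse one feed, reporting failures instead of raising."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            xml_text = response.read().decode("utf-8", errors="replace")
    except (OSError, ValueError) as exc:
        # URLError, HTTPError, and timeouts are OSError subclasses;
        # ValueError covers malformed URLs.
        return {"ok": False, "error": f"network error: {exc}", "items": []}
    return parse_rss(xml_text)


print(json.dumps(parse_rss(SAMPLE), indent=2))
```

Returning an `ok` flag with an error message, rather than raising, lets the UI render a clear "could not load news" state for one feed while still showing headlines from the others.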

Final thoughts

Gemini 3 Pro won on speed and shipped working timer and task management, but it missed key requirements like weekly and monthly calendar views and the RSS feed. GPT-5.1 Codex Max covered the calendar spec well and integrated tasks, though it needs fixes for the timer pause bug, formatting, and news loading.

Claude Opus 4.5 took the longest but delivered the strongest overall result, with the best UI, correct timer behavior, implemented RSS, and in-depth self-testing in dev mode. Benchmarks tell part of the story, but this build test shows process quality, validation, and spec adherence matter just as much as raw speed.
