Table of Contents

- How Tencent HY3 Preview Handles Near Impossible Tasks for Free?

- Model overview

- Coding test - reaction diffusion

- Replicate the coding test

- Example prompt

- Minimal working file

- Agentic research test - lithium battery supply chain

- Example prompt

- Benchmarks and positioning

- Crisis simulation test - 72 hour survival plan

- Getting access and setup

- Use cases

- Final thoughts

How Tencent HY3 Preview Handles Near Impossible Tasks for Free?

There is quite a war happening in AI right now and it is not being fought by the names you would expect. While everyone is watching OpenAI, DeepSeek and Anthropic, Tencent has been doing something very different. Meet HY3 Preview.

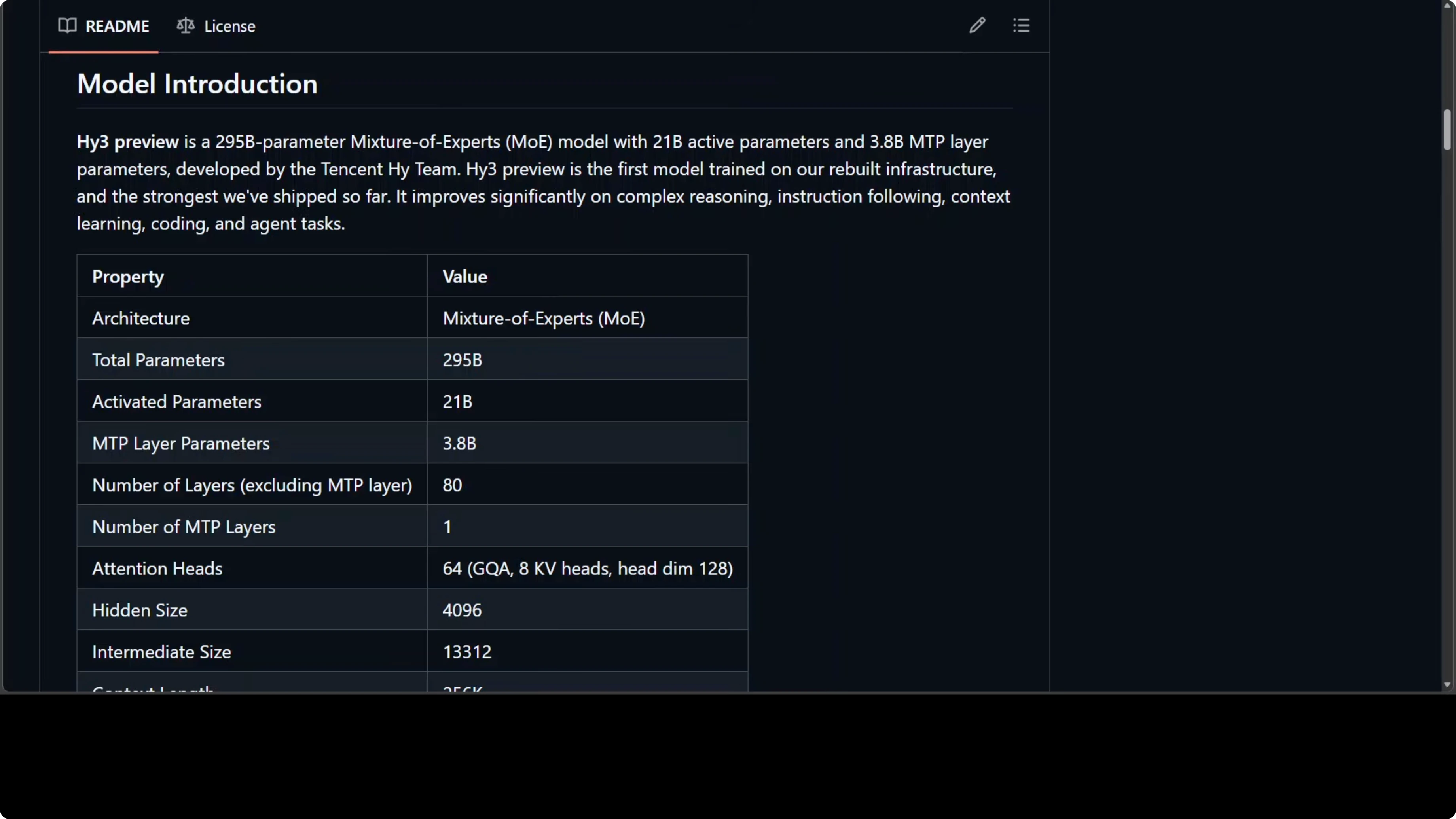

Tencent went dark for some time, tore down their entire infrastructure and rebuilt it from the ground up. On April 23rd, they came back with something very serious. HY3 Preview is a 295 billion parameter Mixture of Experts model that only activates 21 billion at any given time.

How Tencent HY3 Preview Handles Near Impossible Tasks for Free?

Model overview

That setup aims to give you the reasoning depth of a massive model at roughly the per-token compute cost of a much smaller one. It supports a 256K context window and has a built-in reasoning mode you can dial up or down. Tencent calls it the most intelligent model they have ever released, the first born from their completely rebuilt pre-training and reinforcement learning infrastructure.
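To put the 295B total and 21B active figures in proportion, here is a small arithmetic sketch. The 2 bytes per parameter figure is an assumption (bf16 or fp16 weights) and not something the release notes quoted here confirm. The function names are illustrative only.

```javascript
// Figures quoted in this article: 295B total parameters, 21B active per token.
const TOTAL_PARAMS = 295e9;
const ACTIVE_PARAMS = 21e9;

// Assumption: 2 bytes per parameter (bf16/fp16). Quantized builds would be smaller.
const BYTES_PER_PARAM = 2;

// Share of the model that does work for any single token.
function activeFraction(total, active) {
  return active / total;
}

// Memory needed just to hold the weights, in gigabytes (1 GB = 1e9 bytes).
function weightMemoryGB(params, bytesPerParam) {
  return (params * bytesPerParam) / 1e9;
}

const fraction = activeFraction(TOTAL_PARAMS, ACTIVE_PARAMS);
const memoryGB = weightMemoryGB(TOTAL_PARAMS, BYTES_PER_PARAM);

console.log(`active fraction: ${(fraction * 100).toFixed(1)}%`); // 7.1%
console.log(`weights at 2 bytes/param: ${memoryGB.toFixed(0)} GB`); // 590 GB
```

The takeaway is that per-token compute scales with the active 21B, while the memory needed to host the weights still scales with the full 295B, so the savings are mostly in compute and not in hardware footprint.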

They are not claiming incremental improvement. They are claiming a new foundation. From what I have seen so far, it is a solid improvement compared to many other models, and the weights are openly available on Hugging Face and ModelScope for anyone to examine.

If you want more context on this release and its positioning, see this short primer on HY3 in our notes here: HY3 Preview deep notes. For broader coverage and related posts, you can browse our Tencent archive here: more posts on Tencent.

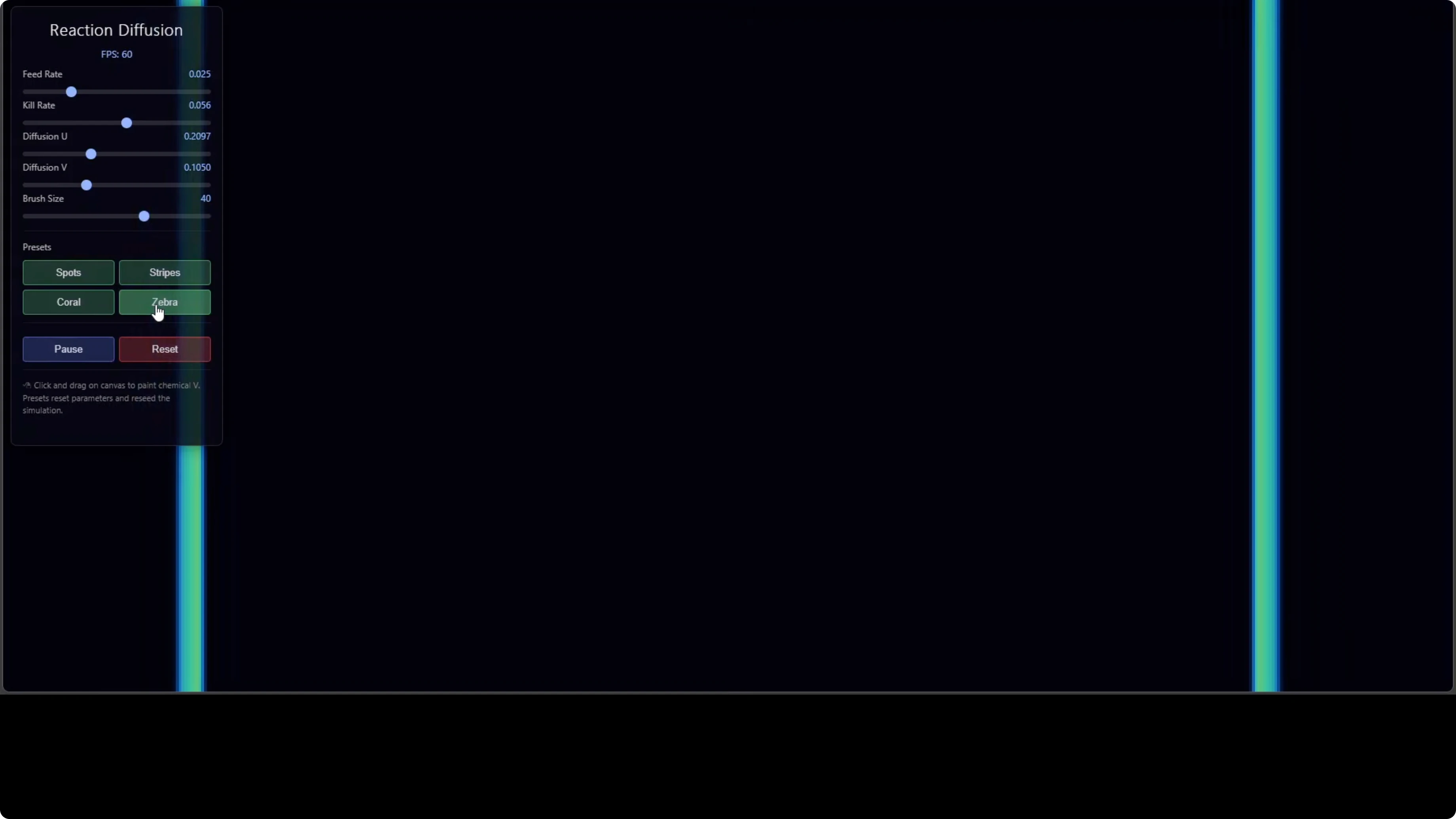

Coding test - reaction diffusion

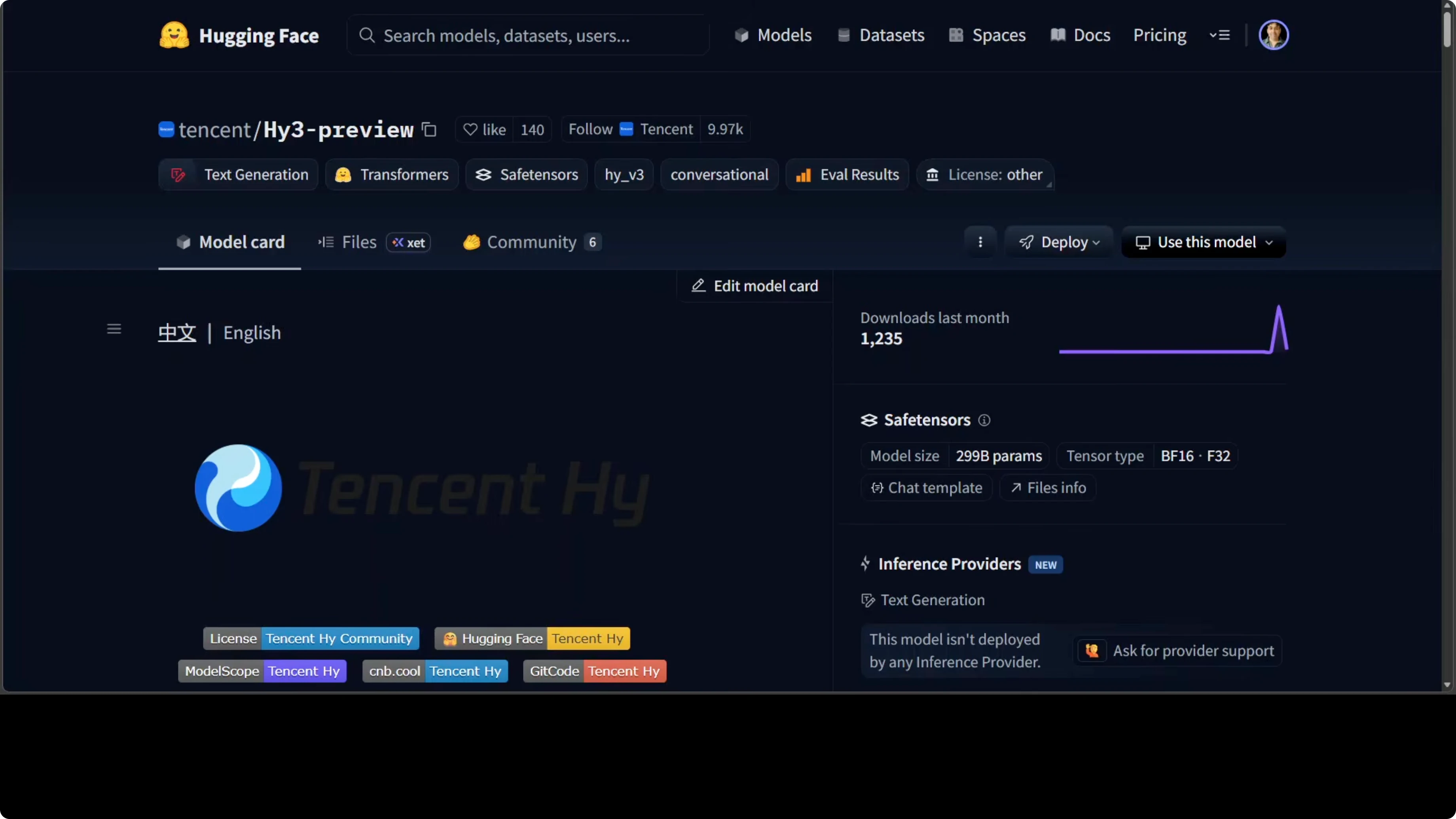

I kicked off testing with a hard coding challenge. I asked it to produce a self-contained HTML file that builds a visually strong reaction diffusion simulation, with proper JavaScript, solid HTML and canvas or WebGL rendering. This is something a senior engineer or a team might spend weeks building.

The model produced code that ran locally in the browser. The point here is that it was not just writing code. It was writing physics, modeling how two chemicals interact and self-organize into patterns found in actual living organisms.

I could reset the simulation and switch presets like zebra or coral, and dial parameters like feed rate, kill rate and diffusion. Building this from a single prompt is like asking someone to build a real time simulation renderer and a polished interactive UI at the same time. If a model can do that in one shot with zero handholding, that is a strong sign it can handle a wide range of real world software engineering tasks.

Replicate the coding test

Ask the model to generate a self-contained HTML file that implements a Gray-Scott reaction diffusion simulation with canvas or WebGL, plus UI controls for feed, kill and diffusion and presets like zebra and coral.

Save the response as index.html and open it in a modern browser.

Adjust parameters to verify it responds correctly and produces stable and turbulent patterns across presets.
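Before opening the saved file, a quick automated check can catch the most common failures, such as a truncated answer or a hidden CDN dependency. The sketch below is a heuristic based on regular expressions, not an HTML parser, and `checkSelfContained` is a hypothetical helper name introduced for illustration.

```javascript
// Hypothetical helper: flags common ways a "self-contained" HTML answer falls short.
// Regex heuristics only; this does not parse HTML and can be fooled by unusual markup.
function checkSelfContained(html) {
  const problems = [];
  if (!/<canvas[\s>]/i.test(html)) {
    problems.push("no <canvas> element");
  }
  if (!/requestAnimationFrame\s*\(/.test(html)) {
    problems.push("no requestAnimationFrame loop");
  }
  // Any <script> tag with a src attribute pulls code from outside the file.
  if (/<script[^>]*\ssrc\s*=/i.test(html)) {
    problems.push("external <script src>");
  }
  // A stylesheet link means CSS lives outside the file.
  if (/<link[^>]*\srel\s*=\s*["']?stylesheet/i.test(html)) {
    problems.push("external stylesheet");
  }
  // A missing closing tag usually means the response hit its token limit.
  if (!/<\/html>\s*$/i.test(html.trim())) {
    problems.push("file does not end with </html> (possibly truncated)");
  }
  return problems;
}

// Usage: an empty array means none of the heuristics fired.
// console.log(checkSelfContained(responseText));
```

An empty result does not prove the simulation is correct. It only tells you the file is worth opening in a browser.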

Example prompt

Produce a single self-contained index.html that implements a Gray-Scott reaction diffusion simulation rendered to an HTML canvas. Include JavaScript in the same file. Add UI controls for feed rate f, kill rate k and diffusion coefficients Du and Dv. Include presets for "zebra" and "coral" that users can switch between. Keep it performant at 256x256 with requestAnimationFrame. No external libraries.

Minimal working file

Below is a compact self-contained HTML example you can use to sanity check outputs. It runs entirely in the browser and exposes feed, kill and diffusion controls with zebra and coral presets.

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>Reaction Diffusion - Gray-Scott</title>

<meta name="viewport" content="width=device-width, initial-scale=1">

<style>

body { font-family: system-ui, sans-serif; margin: 20px; background:#111; color:#eee; }

#wrap { display:flex; gap:20px; align-items:flex-start; }

canvas { image-rendering: pixelated; border:1px solid #333; background:#000; }

.panel { max-width: 340px; }

.row { margin:8px 0; }

label { display:block; font-size:14px; margin-bottom:4px; }

input[type="range"] { width:100%; }

button, select { padding:6px 10px; background:#222; color:#eee; border:1px solid #444; }

.small { font-size:12px; color:#aaa; }

</style>

</head>

<body>

<div id="wrap">

<canvas id="c" width="256" height="256"></canvas>

<div class="panel">

<div class="row">

<label for="preset">Preset</label>

<select id="preset">

<option value="zebra">zebra</option>

<option value="coral">coral</option>

<option value="custom">custom</option>

</select>

</div>

<div class="row">

<label>Feed f: <span id="fVal">0.03</span></label>

<input id="f" type="range" min="0.0" max="0.08" step="0.0005" value="0.03">

</div>

<div class="row">

<label>Kill k: <span id="kVal">0.062</span></label>

<input id="k" type="range" min="0.0" max="0.08" step="0.0005" value="0.062">

</div>

<div class="row">

<label>Diffusion Du: <span id="duVal">0.16</span></label>

<input id="du" type="range" min="0.0" max="1.0" step="0.005" value="0.16">

</div>

<div class="row">

<label>Diffusion Dv: <span id="dvVal">0.08</span></label>

<input id="dv" type="range" min="0.0" max="1.0" step="0.005" value="0.08">

</div>

<div class="row">

<button id="reset">Reset</button>

<button id="seed">Seed Center</button>

<button id="pause">Pause</button>

</div>

<p class="small">

Gray-Scott PDE solved with finite differences on a 256x256 grid. Adjust f and k to move between spots and stripes.

</p>

</div>

</div>

<script>

const W = 256, H = 256, dt = 1.0;

const canvas = document.getElementById('c');

const ctx = canvas.getContext('2d');

const img = ctx.createImageData(W, H);

// State buffers

let U = new Float32Array(W * H);

let V = new Float32Array(W * H);

let U2 = new Float32Array(W * H);

let V2 = new Float32Array(W * H);

// UI elements

const fEl = document.getElementById('f');

const kEl = document.getElementById('k');

const duEl = document.getElementById('du');

const dvEl = document.getElementById('dv');

const fVal = document.getElementById('fVal');

const kVal = document.getElementById('kVal');

const duVal = document.getElementById('duVal');

const dvVal = document.getElementById('dvVal');

const presetEl = document.getElementById('preset');

const resetBtn = document.getElementById('reset');

const seedBtn = document.getElementById('seed');

const pauseBtn = document.getElementById('pause');

let running = true;

function setPreset(name) {

if (name === 'zebra') {

fEl.value = 0.030;

kEl.value = 0.062;

duEl.value = 0.16;

dvEl.value = 0.08;

} else if (name === 'coral') {

fEl.value = 0.0545;

kEl.value = 0.062;

duEl.value = 0.16;

dvEl.value = 0.08;

}

syncLabels();

}

function syncLabels() {

fVal.textContent = Number(fEl.value).toFixed(4);

kVal.textContent = Number(kEl.value).toFixed(4);

duVal.textContent = Number(duEl.value).toFixed(2);

dvVal.textContent = Number(dvEl.value).toFixed(2);

}

function idx(x, y) {

return y * W + x;

}

function clamp(v, lo, hi) {

return v < lo ? lo : v > hi ? hi : v;

}

function lap(buf, x, y) {

// 4-neighbor with simple wrap

const xm = (x - 1 + W) % W, xp = (x + 1) % W;

const ym = (y - 1 + H) % H, yp = (y + 1) % H;

const c = buf[idx(x, y)];

const l = buf[idx(xm, y)];

const r = buf[idx(xp, y)];

const u = buf[idx(x, ym)];

const d = buf[idx(x, yp)];

// Weights approximate Laplacian

return -4 * c + l + r + u + d;

}

function clear() {

U.fill(1.0);

V.fill(0.0);

}

function seed(centerSize = 16) {

const cx = W >> 1, cy = H >> 1;

for (let y = cy - centerSize; y < cy + centerSize; y++) {

for (let x = cx - centerSize; x < cx + centerSize; x++) {

const i = idx((x + W) % W, (y + H) % H);

U[i] = 0.50 + 0.1 * Math.random();

V[i] = 0.25 + 0.1 * Math.random();

}

}

}

function step() {

const f = parseFloat(fEl.value);

const k = parseFloat(kEl.value);

const Du = parseFloat(duEl.value);

const Dv = parseFloat(dvEl.value);

for (let y = 0; y < H; y++) {

for (let x = 0; x < W; x++) {

const i = idx(x, y);

const u = U[i];

const v = V[i];

const lu = lap(U, x, y);

const lv = lap(V, x, y);

const uvv = u * v * v;

let uN = u + (Du * lu - uvv + f * (1 - u)) * dt;

let vN = v + (Dv * lv + uvv - (f + k) * v) * dt;

U2[i] = clamp(uN, 0, 1);

V2[i] = clamp(vN, 0, 1);

}

}

// swap buffers

[U, U2] = [U2, U];

[V, V2] = [V2, V];

}

function render() {

const data = img.data;

for (let y = 0; y < H; y++) {

for (let x = 0; x < W; x++) {

const i = idx(x, y);

const v = V[i];

// Map V to grayscale with subtle color tint

const c = Math.floor(255 * v);

const o = i * 4;

data[o] = c * 0.9;

data[o + 1] = c * 0.95;

data[o + 2] = 255 - c * 0.7;

data[o + 3] = 255;

}

}

ctx.putImageData(img, 0, 0);

}

function loop() {

if (running) {

// Multiple simulation steps per frame to speed up pattern growth

for (let i = 0; i < 8; i++) step();

render();

}

requestAnimationFrame(loop);

}

// Event wiring

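// Moving any slider by hand switches the preset selector to "custom"

[fEl, kEl, duEl, dvEl].forEach(el => el.addEventListener('input', () => { presetEl.value = 'custom'; syncLabels(); }));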

presetEl.addEventListener('change', e => {

if (e.target.value !== 'custom') setPreset(e.target.value);

});

resetBtn.addEventListener('click', () => { clear(); seed(); });

seedBtn.addEventListener('click', () => seed(16));

pauseBtn.addEventListener('click', () => {

running = !running;

pauseBtn.textContent = running ? 'Pause' : 'Resume';

});

// Init

syncLabels();

clear();

seed();

setPreset('zebra');

loop();

</script>

</body>

</html>

If you are collecting tools and model endpoints that make tests like this easier, you may find our quick starter list handy here: free models and APIs guide.
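One thing worth checking in the minimal file above: the diffusion sliders go up to 1.0 while the time step is fixed at 1.0. For forward Euler with a 4-neighbor Laplacian on a unit grid, the standard diffusion-only stability bound is D * dt <= 1/4. The sketch below applies that bound. It ignores the reaction terms, so treat it as a rough guide, and note that the function names are made up for this example.

```javascript
// Diffusion-only stability bound for forward Euler with the 4-neighbor Laplacian
// on a 2D grid: D * dt / h^2 <= 1/4. Reaction terms are ignored in this estimate.
function maxStableDiffusion(dt, gridSpacing = 1) {
  return (gridSpacing * gridSpacing) / (4 * dt);
}

// True when the given diffusion coefficient sits inside the bound.
function isDiffusionStable(D, dt, gridSpacing = 1) {
  return D <= maxStableDiffusion(dt, gridSpacing);
}

const dt = 1.0; // same time step as the example file

console.log(maxStableDiffusion(dt)); // 0.25
console.log(isDiffusionStable(0.16, dt)); // true: the preset value of Du
console.log(isDiffusionStable(0.08, dt)); // true: the preset value of Dv
console.log(isDiffusionStable(1.0, dt)); // false: the slider maximum
```

In the example file the clamp to the 0 to 1 range keeps values from overflowing, so pushing a slider past 0.25 tends to produce noise instead of a crash. Capping the slider maximum or lowering the time step are two ways to stay inside the bound.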

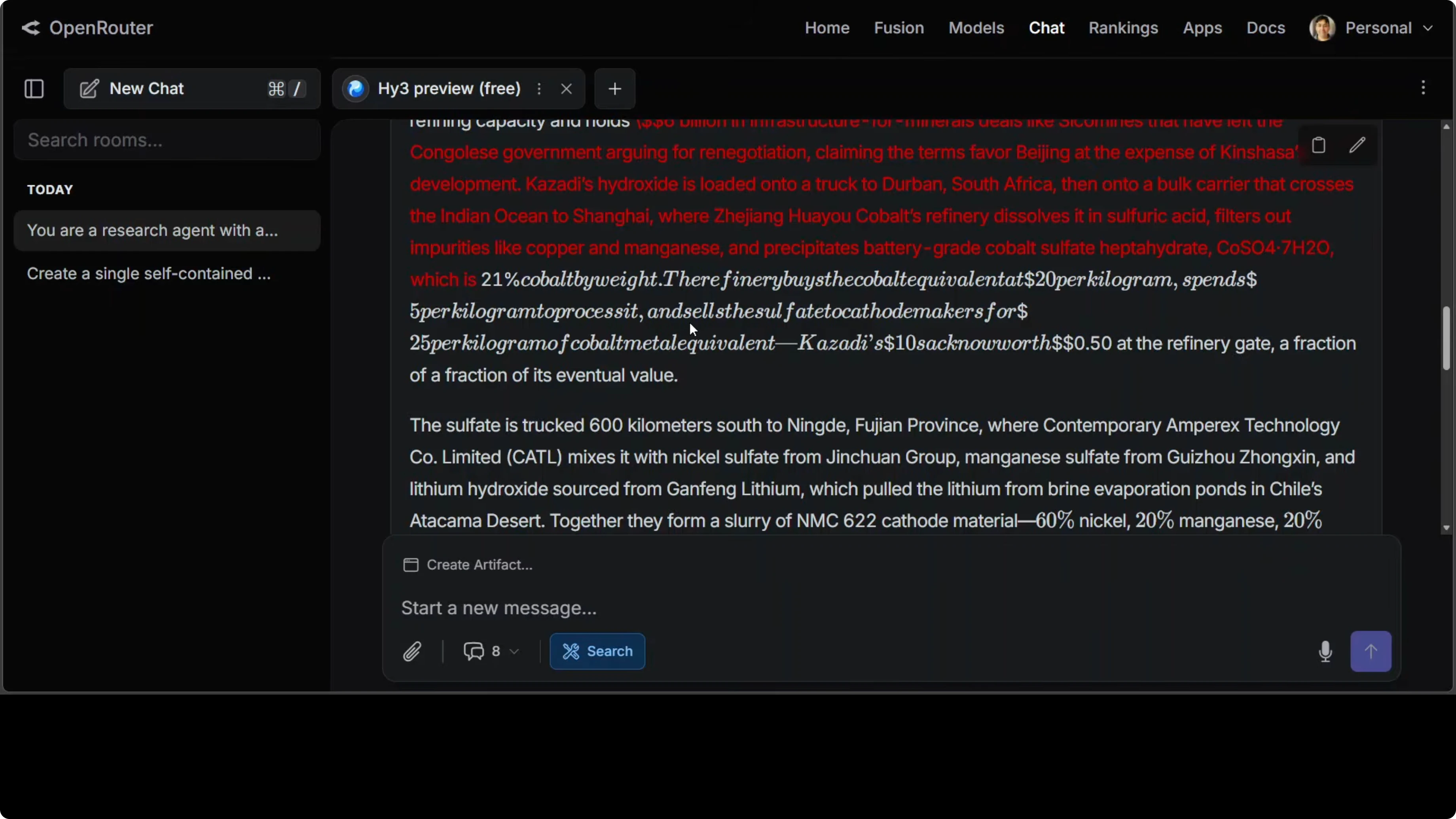

Agentic research test - lithium battery supply chain

Next, I checked agentic reasoning. I asked it to be a research agent with access to web search and to trace a complex supply chain journey of a single lithium battery cell, from raw cobalt pulled out of the ground in the Democratic Republic of Congo, through refining and chemical processing in China, through cell manufacturing in Asia, and then every step into a phone. The bar was simple but high: produce a single vivid authoritative narrative that tells this story end to end, not a bullet list.

A weak model gives you a Wikipedia dump. A strong model gives you something that feels like genuine understanding of how the world actually works. What I saw looked strong.

Example prompt

Act as a research agent with access to web search. Trace the end-to-end supply chain of a single lithium-ion battery cell, starting from cobalt extraction in the DRC, through chemical refining in China, cathode/anode and electrolyte production, cell manufacturing in Asia, pack integration, shipment, and integration into a smartphone. Write a single vivid narrative with specific names, coordinates, company sites, process chemicals, typical percentages, typical dollar values at each stage, and relevant legislation that touches each step. No bullets. Synthesize into flowing prose with citations inline where helpful.

The model did not just trace the supply chain. It humanized both ends of it with specific names, specific numbers, and specific coordinates, which most models would not think to do unprompted. The level of technical and economic precision was remarkable, with cobalt percentages, compound names, dollar values at each stage and legislation references woven into narrative prose, which takes real synthesis, not just retrieval.
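Since the prompt explicitly says no bullets, one simple way to score outputs across models is to count lines that look like list items. This is a rough sketch with hypothetical function names, and the pattern only covers the most common list markers.

```javascript
// Hypothetical check: counts lines that look like list items.
// Matches "-", "*", "•" bullets and "1." or "1)" numbering, each followed by a space.
function countListLines(text) {
  const listItem = /^\s*(?:[-*•]|\d+[.)])\s+\S/;
  return text.split("\n").filter((line) => listItem.test(line)).length;
}

// Treats the output as narrative when it stays at or under the allowed number of list lines.
function isNarrative(text, maxListLines = 0) {
  return countListLines(text) <= maxListLines;
}

// Usage:
// console.log(isNarrative(modelOutput));
```

Requiring whitespace after the marker keeps ordinary prose such as "-5 degrees" or "1.5 tons" from being counted as a list item.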

If you want to keep an eye on Tencent’s broader research push and product surface area, we keep a living index here: Tencent coverage and updates.

Benchmarks and positioning

HY3 Preview is holding its own against much larger models on some of the hardest benchmarks out there. That includes International Math Olympiad style reasoning, frontier science tasks, real world software engineering, instruction following and in-context learning. Tencent says it moved away from leaderboards that are easy to game and built its own benchmarks from real business scenarios.

The headline is simple. This is a 295B parameter model that activates only 21B parameters per token at runtime and punches well above its weight. That ratio matters when you care about reasoning depth and serving cost at the same time.

For practitioners who need a practical path from evaluations to deployment, I have a compact write up on HY3’s positioning you can skim here: HY3 Preview notes and pointers.

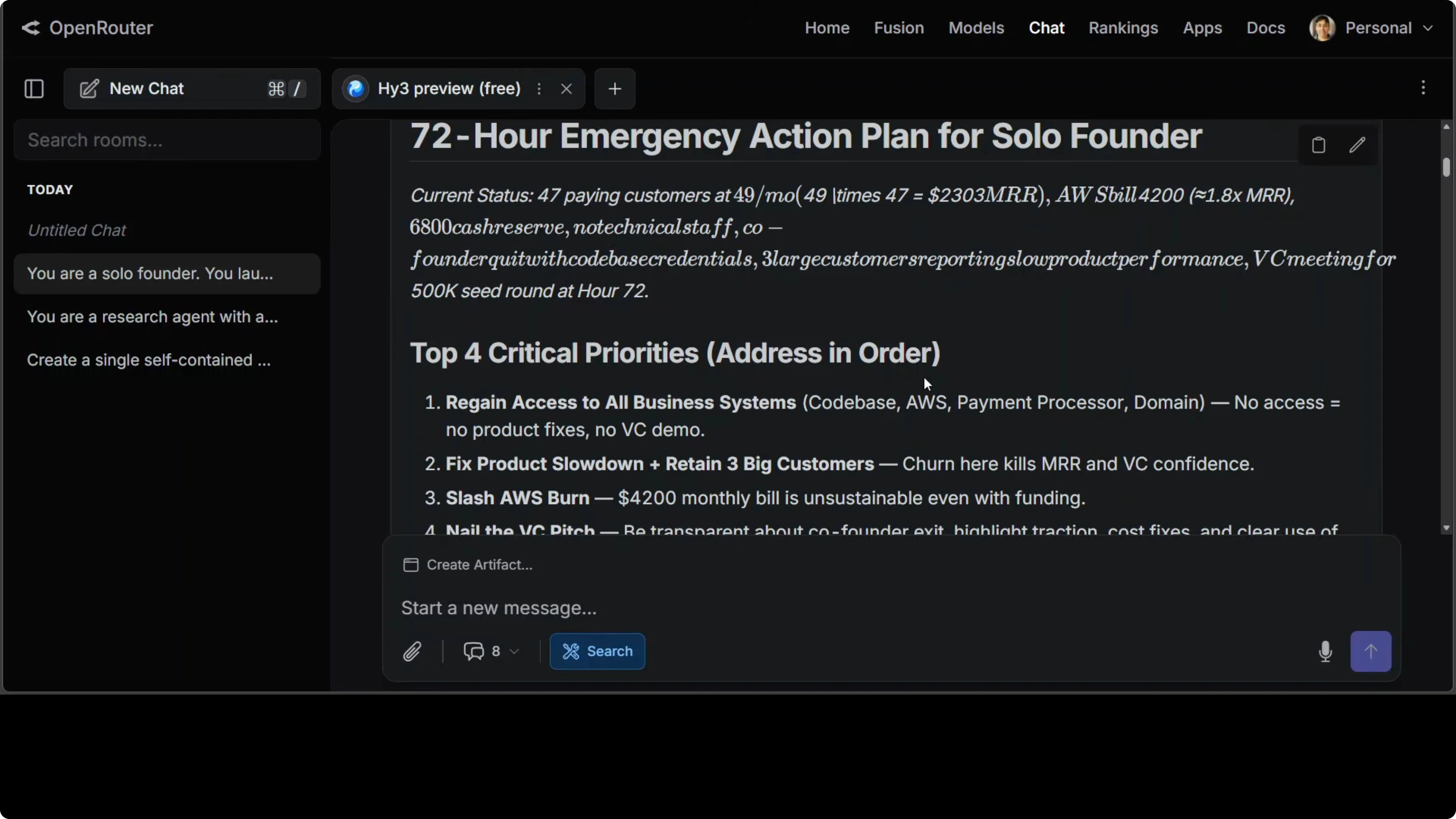

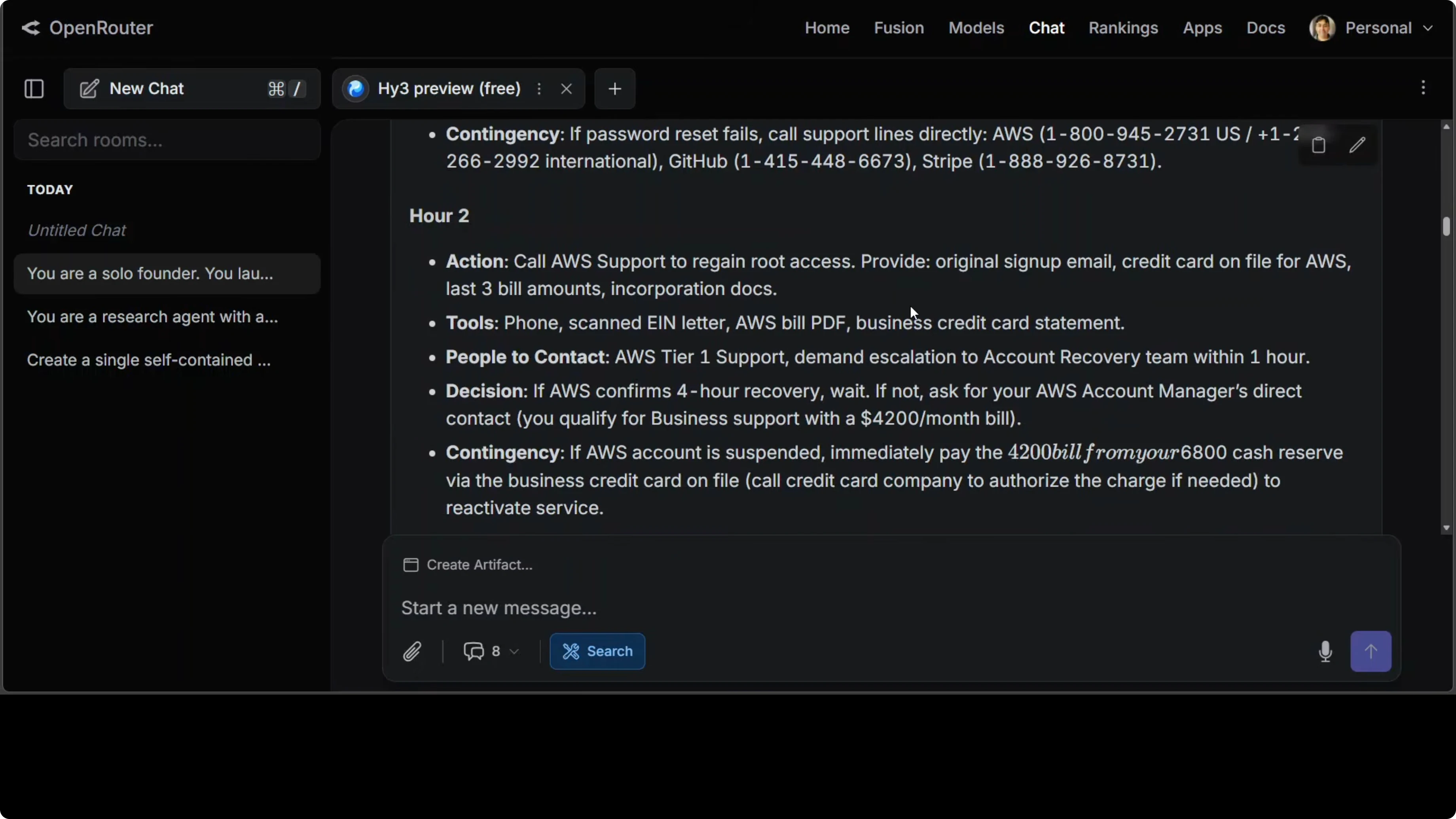

Crisis simulation test - 72 hour survival plan

Finally, I tested if the model could get out of an impossible situation. The setup was that a solo founder launched a SaaS product 8 months ago, has 47 paying customers at 49 dollars per month, and the co-founder quit this morning and took the codebase access credentials. The AWS bill is about 4,200 dollars this month, there is very little cash left in the bank, there is no technical staff, three of the biggest customers say the product is running slow, and a VC meeting is on the calendar.

I asked for a complete hour by hour action plan for the next 72 hours with specific tools, specific people to contact and specific decisions to make. The instruction was that nothing should be assumed or handed to you and every recommendation must be actionable by someone exhausted, alone and running out of time. There is a human element here and I wanted to see if the model can keep its head.

The response was not a generic startup advice listicle. It read like a forensically detailed survival manual with specific phone numbers, legal frameworks like CFAA, named tools at every step and a cash flow analysis that correctly identified burn at roughly 1.8x MRR on AWS before any fixes. What separated it from a weak model response was the sequencing.

It correctly prioritized regaining system access before fixing the product, fixing the product before prepping the pitch and sleeping before the meeting, which is exactly the order an experienced operator would choose under pressure. It also provided contingencies with fallbacks at each step. The final escape hatch was to sell the customer list on MicroAcquire to recover 10k and preserve a 4k cash reserve, which showed the model was thinking about founder survival beyond 72 hours, not just the VC meeting.
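The roughly 1.8x figure is easy to verify from the scenario's own numbers. The sketch below only redoes that arithmetic, and the variable names are illustrative.

```javascript
// Inputs taken directly from the scenario described above.
const customers = 47;
const pricePerMonth = 49; // dollars per customer per month
const awsBill = 4200; // dollars this month

// Monthly recurring revenue and how the AWS bill compares to it.
const mrr = customers * pricePerMonth;
const awsToMrr = awsBill / mrr;
const monthlyShortfall = awsBill - mrr;

console.log(`MRR: $${mrr}`); // MRR: $2303
console.log(`AWS bill as a multiple of MRR: ${awsToMrr.toFixed(2)}x`); // 1.82x
console.log(`Shortfall before any other cost: $${monthlyShortfall}`); // $1897
```

So the infrastructure bill alone exceeds revenue by about 1,900 dollars a month, which is why a plan that tackles AWS spend early makes sense.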

If you are integrating HY3 into a custom workflow for incident response, RAG or back office automations, I have notes on implementation patterns here: custom Tencent integrations.

Getting access and setup

You can try HY3 Preview free right now through OpenRouter. No waitlist hoops or strange API gymnastics at the time of testing. Here is a simple way to reproduce the tests.

Create a free account on OpenRouter and select the Tencent HY3 Preview model in the model picker.

Enable reasoning mode and set the context window high if your prompt is long.

Run the coding, research and crisis prompts as shown above and compare outputs to your current stack.

If you prefer calling it from code, this minimal cURL request will get you started. Replace YOUR_KEY in the Authorization header with your own API key.

curl https://openrouter.ai/api/v1/chat/completions \

-H "Authorization: Bearer YOUR_KEY" \

-H "Content-Type: application/json" \

-d '{

"model": "tencent/hy3-preview",

"messages": [

{"role": "system", "content": "You are a careful, step-by-step reasoner. Use chain-of-thought privately, output only final answers."},

{"role": "user", "content": "Produce a self-contained index.html that implements a Gray-Scott reaction diffusion simulation with canvas, plus UI for f, k, Du, Dv and zebra/coral presets."}

],

"temperature": 0.4,

"max_tokens": 8000

}'

If you want a zero friction path to stack this with other free endpoints and debugging tools, I documented a short setup here: OpenClaw setup walkthrough. For a curated list of free endpoints and operator tools that pair well with HY3 while testing, see this page: free models and APIs toolkit.
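If you would rather script the same call in JavaScript, the cURL request above translates roughly as follows. The endpoint, header and model slug are copied from the cURL example. The response shape assumes the OpenAI-style chat completions format, and `buildRequest`, `extractHtml` and `generate` are illustrative names, not part of any SDK. This is a trimmed version of the request with only a user message.

```javascript
const OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions";
const FENCE = "`".repeat(3); // Markdown code fence marker

// Builds the request. Kept separate from the network call so it can be inspected or tested.
function buildRequest(apiKey, userPrompt) {
  return {
    url: OPENROUTER_URL,
    options: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${apiKey}`,
        "Content-Type": "application/json",
      },
      body: JSON.stringify({
        model: "tencent/hy3-preview", // slug as given in the cURL example
        messages: [{ role: "user", content: userPrompt }],
        temperature: 0.4,
      }),
    },
  };
}

// Hypothetical helper: if the answer is wrapped in a Markdown fence, return only the inside.
function extractHtml(text) {
  const pattern = new RegExp(FENCE + "(?:html)?\\s*\\n([\\s\\S]*?)" + FENCE, "i");
  const fenced = text.match(pattern);
  return (fenced ? fenced[1] : text).trim();
}

// Sends the request. Needs a runtime with a global fetch (Node 18+ or a browser).
async function generate(apiKey, userPrompt) {
  const { url, options } = buildRequest(apiKey, userPrompt);
  const res = await fetch(url, options);
  if (!res.ok) throw new Error(`HTTP ${res.status}`);
  const data = await res.json();
  // Assumption: OpenAI-style response with the text at choices[0].message.content.
  return extractHtml(data.choices[0].message.content);
}

// Usage:
// generate(process.env.OPENROUTER_API_KEY, "Produce a self-contained index.html ...")
//   .then((html) => console.log(html));
```

Keeping the request builder separate from the network call makes it easy to log or diff exactly what was sent when you compare models.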

Use cases

Complex software engineering from high level prompt to production grade scaffolding benefits from HY3’s reasoning depth. You can ask for architecture, implementation and instrumentation in one pass and then refine with smaller deltas. The ability to write physics and UI together from a single instruction is a strong indicator.

Research and analysis that demand synthesis across sources, numbers and regulatory context map well to its narrative outputs. Ask for a single cohesive story with constraints and it will often hold that form without collapsing into lists. This suits due diligence, market mapping and technical briefs.

Crisis planning and operations where ordering is everything map well to its stepwise outputs. Give it real constraints like time, budget and legal boundaries and examine how it sequences actions and proposes fallbacks. That is where you can see if it is truly thinking or just dumping checklists.

Back office automation and knowledge workflows benefit from the 256K context window. You can drop large policy docs, tickets and logs and get structured actions or messaging without splitting context. Cost at 21B active parameters makes iterative loops more tolerable.
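Before dropping a pile of documents into one request, a rough budget check helps. The sketch below assumes that 256K means 256 * 1024 tokens and that English text averages about 4 characters per token. Both are approximations, and the model's real tokenizer will give different counts. The function names are hypothetical.

```javascript
// Assumptions: 256K = 256 * 1024 tokens, and roughly 4 characters per token for English text.
const CONTEXT_TOKENS = 256 * 1024;
const CHARS_PER_TOKEN = 4;

// Rough token estimate from character count. Not a real tokenizer.
function estimateTokens(text) {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Sums the estimates and compares them to the window minus space reserved for the answer.
function fitsInContext(documents, reservedForOutput = 8000) {
  const used = documents.reduce((sum, doc) => sum + estimateTokens(doc), 0);
  const budget = CONTEXT_TOKENS - reservedForOutput;
  return { used, budget, fits: used <= budget };
}

// Usage:
// const report = fitsInContext([policyDoc, ticketDump, logExcerpt]);
// if (!report.fits) console.warn(`Over budget: ${report.used} of ${report.budget} tokens`);
```

If the estimate lands near the budget, leave extra headroom, since code, logs and non-English text often tokenize less efficiently than plain English prose.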

Education and simulation are a natural fit. Ask it to build scientific models and interactive controls that teach a concept, then refine the UI copy for clarity. That feedback loop compounds quickly when it can both author code and explain the math.

Final thoughts

You have seen three tests in three very different domains. HY3 Preview did not just answer, it thought, and it did so in one shot, free to try at the moment. A massive MoE with 295B parameters that only activates 21B per token, open weights, long context and strong outputs across coding, research and crisis planning is worth your time.
