Table of Contents

- Why Qwen3.6-27B
- Dense Over MoE For Stable, Practical Use
- Setup On Ubuntu
- Run Qwen3.6-27B Locally With vLLM
- Call The Local Endpoint
- Vision Input Locally
- Reasoning And Long Context
- Benchmarks For Practical Coding
- VRAM Notes And Throughput
- Stable, Practical Use With Qwen3.6-27B
- Practical Use Cases
- Final Thoughts

How to Run Qwen3.6-27B Locally for Stable, Practical Use?


From the Ming dynasty’s Yongle Encyclopedia in 1408 to Qwen3.6 in 2026, the obsession with organizing and mastering knowledge has never really gone away. Alibaba has just released a 27-billion-parameter model that beats models many times its size on coding benchmarks. Doing more with less is still the story.

I am installing it locally and testing it thoroughly on Ubuntu with a single NVIDIA A100 80 GB. Stability and real-world utility come first in this setup, and I will keep everything practical and reproducible.

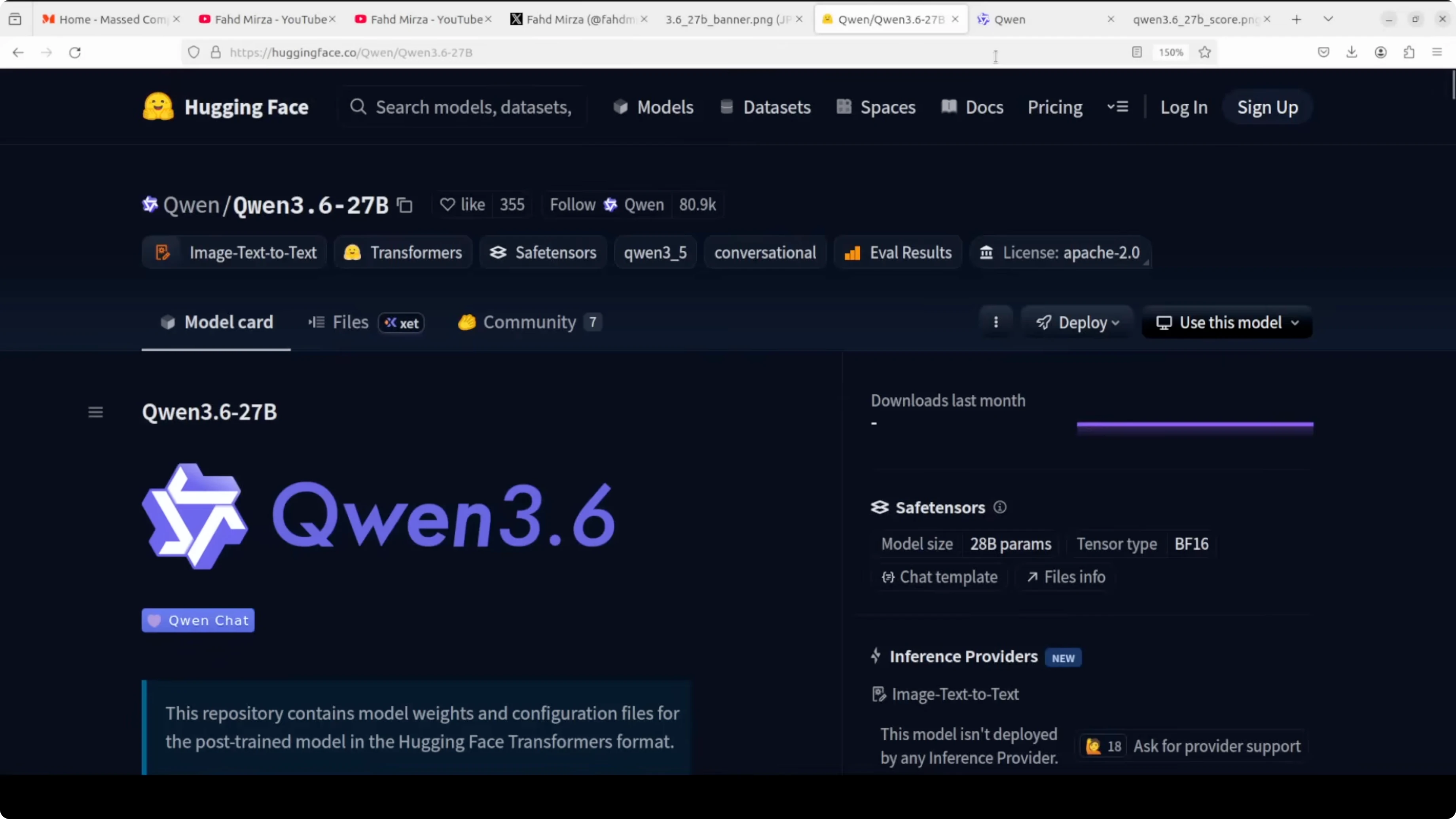

Why Qwen3.6-27B

Qwen3.6-27B is a dense model, meaning every single one of its 27 billion parameters fires on every token. No routing tricks, no sparse shortcuts. Dense models are simpler, more predictable, and easier to deploy.

That is the opposite of mixture-of-experts (MoE) models such as Qwen3.5-35B or Qwen3.6-35B. These have about 35 billion parameters in total but activate only around 3 billion per token. Qwen is betting that, with the right training, dense can beat mixture of experts. The model is also natively multimodal, handling both text and vision.
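To make the dense-versus-MoE trade-off concrete, here is a back-of-envelope sketch. It uses the common rule of thumb of about 2 FLOPs per active parameter per token for a forward pass. It also assumes 2 bytes per parameter for bf16 weights. The parameter counts are the round numbers from the paragraph above, not exact model configs.

```python
# Rough per-token cost comparison: dense vs. mixture of experts (MoE).
# Assumptions: ~2 forward-pass FLOPs per *active* parameter per token,
# and 2 bytes per *total* parameter for bf16 weights. Parameter counts
# are round figures, not exact model configurations.

BYTES_PER_PARAM_BF16 = 2


def forward_flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs spent on one token."""
    return 2 * active_params


def weight_bytes(total_params: float, bytes_per_param: int = BYTES_PER_PARAM_BF16) -> float:
    """Memory needed just to hold the weights (no KV cache, no activations)."""
    return total_params * bytes_per_param


models = {
    # name: (total parameters, parameters active per token)
    "dense 27B": (27e9, 27e9),
    "MoE 35B, ~3B active": (35e9, 3e9),
}

for name, (total, active) in models.items():
    print(
        f"{name:>20}: ~{forward_flops_per_token(active) / 1e9:.0f} GFLOPs/token, "
        f"~{weight_bytes(total) / 1e9:.0f} GB of bf16 weights"
    )
```

The MoE model does far less arithmetic per token, but it still has to keep all of its weights in memory. The dense model does more work per token, but every token takes the same path through the same weights. That uniformity is where the predictability comes from.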

For a pure coder-focused local setup, check out this comparative guide on running Qwen3 Coder Next locally. It pairs well with the workflow in this article.

Dense Over MoE For Stable, Practical Use

Dense models avoid routing variance and gate failures that can show up in MoE. That leads to steadier latencies and saner deployment. If you care about stable service under load, dense often wins.

Qwen3.6-27B natively supports a context length of up to 262k tokens. It also has a feature called preserved thinking, in which it retains its own reasoning across the whole conversation, not just the last message. That is a big deal for agentic coding tasks, where context compounds.

If you are comparing precision settings against Ollama-based runs, this breakdown on full precision vs Ollama for Qwen3.6 will help you pick the right trade-offs.

Setup On Ubuntu

I am on Ubuntu with an A100 80 GB. VRAM usage sits just under 74 GB for the full model load. Everything runs from a clean vLLM install for predictability.

Install the basics.

```bash
# System-level prerequisites may include CUDA and the correct driver for the A100.

# Python environment
python3 -m venv qwen36env
source qwen36env/bin/activate
pip install -U pip

# Core packages
pip install -U vllm transformers accelerate huggingface_hub pillow safetensors

# Hugging Face login to access gated weights if needed
huggingface-cli login
```

If you need a smaller model to fine-tune on your own data before moving up to 27B, here is a hands-on walkthrough for fine-tuning Qwen3.5 8B locally.
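Before launching the server, a quick post-install check can save you from a failed start. This is a minimal sketch. It uses the standard library to read package versions, plus an optional torch probe; it assumes torch was pulled in as a vLLM dependency. The package list simply mirrors the pip command above, and the function names are hypothetical.

```python
# Post-install sanity check: which core packages are installed, at what
# version, and whether torch can see a CUDA device. Standard library only,
# apart from the optional torch import (assumed to come with vLLM).
from importlib.metadata import PackageNotFoundError, version

CORE_PACKAGES = ["vllm", "transformers", "accelerate", "huggingface_hub", "pillow", "safetensors"]


def package_versions(names):
    """Map each distribution name to its installed version, or None if missing."""
    found = {}
    for name in names:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


def cuda_summary():
    """Describe the first visible CUDA device, or explain why there is none."""
    try:
        import torch
    except (ImportError, OSError):
        return "torch not installed"
    if not torch.cuda.is_available():
        return "CUDA not available"
    props = torch.cuda.get_device_properties(0)
    return f"{props.name}, {props.total_memory / 1024**3:.0f} GiB"


if __name__ == "__main__":
    for name, ver in package_versions(CORE_PACKAGES).items():
        print(f"{name:<16} {ver or 'NOT INSTALLED'}")
    print("GPU:", cuda_summary())
```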

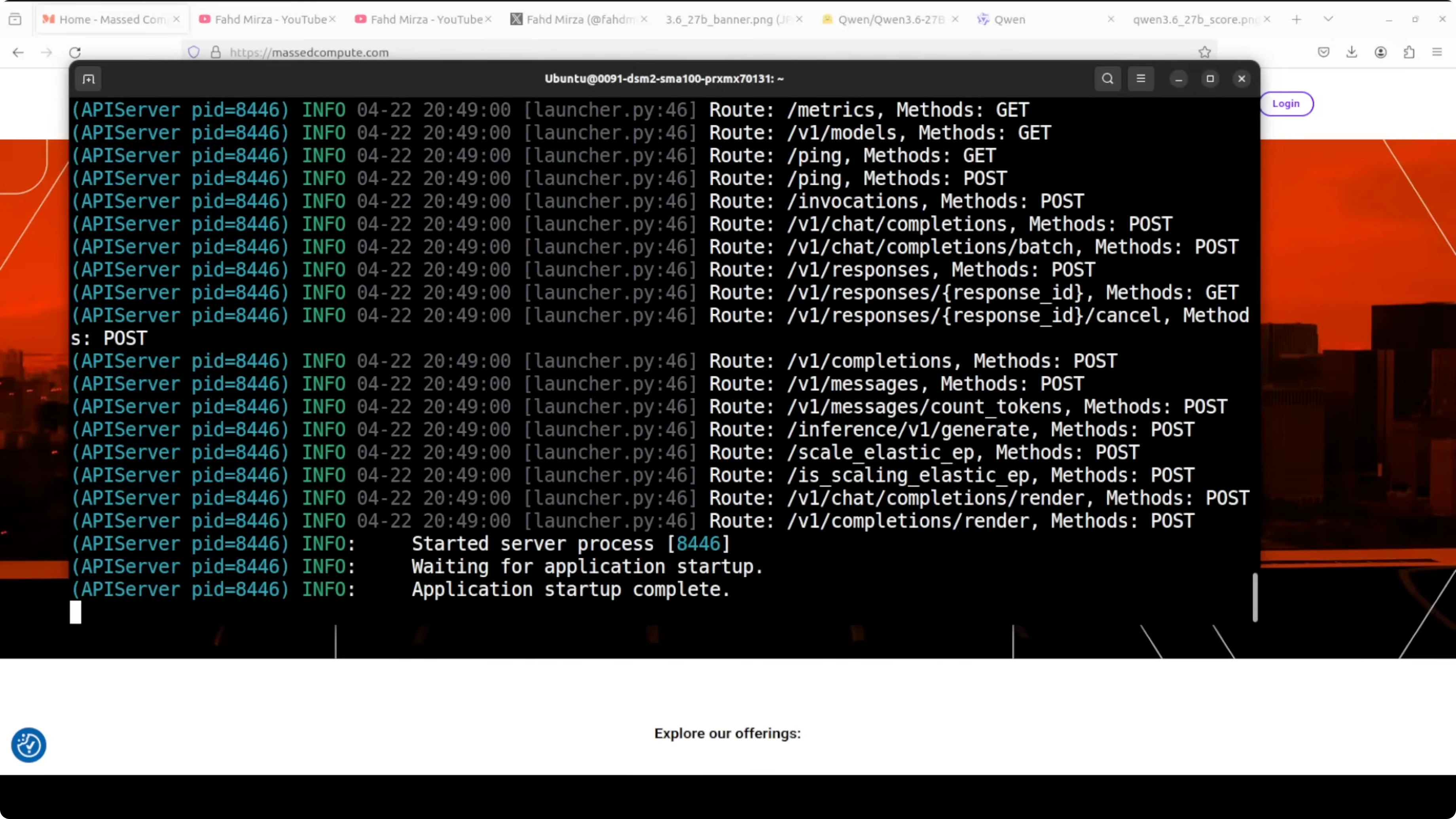

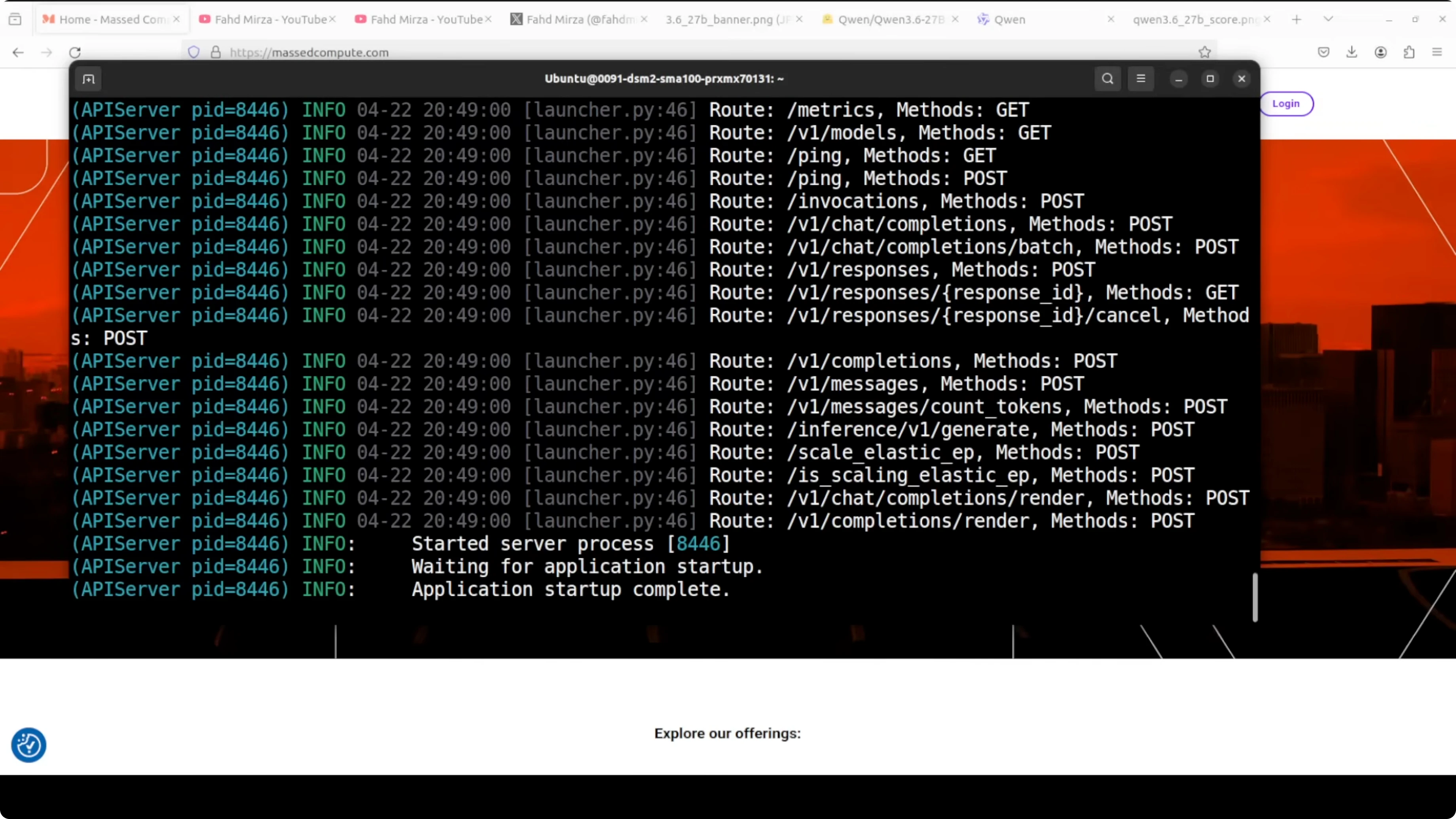

Run Qwen3.6-27B Locally With vLLM

Start the OpenAI-compatible API server on port 8000. The flags here prioritize stability and long context on a single large GPU.

```bash
# Replace the model ID with the exact name on Hugging Face once you have access.
# Many Qwen releases require --trust-remote-code for custom modeling code.
python -m vllm.entrypoints.openai.api_server \
  --model Qwen3.6-27B-Instruct \
  --trust-remote-code \
  --dtype bfloat16 \
  --max-model-len 32768 \
  --port 8000 \
  --gpu-memory-utilization 0.95
```

If your GPU is smaller, reduce --max-model-len until the model fits in memory. You can also load quantized variants, if any are available for this release, to shrink VRAM needs. Keep an eye on stability and throughput as you tune these knobs.
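Loading a 27B model takes a while, so any script that starts the server should wait until it answers before sending prompts. Here is a minimal sketch. It assumes the server exposes the standard OpenAI-compatible /v1/models route on port 8000, as launched above. The helper name wait_for_server is hypothetical and not part of vLLM.

```python
import json
import time
import urllib.error
import urllib.request


def wait_for_server(base_url="http://localhost:8000", timeout_s=600.0, poll_s=5.0):
    """Poll GET {base_url}/v1/models until it answers or the deadline passes.

    Returns the list of served model IDs, or None on timeout. The long
    default timeout allows for the minutes a 27B model can take to load.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with urllib.request.urlopen(f"{base_url}/v1/models", timeout=poll_s) as resp:
                body = json.load(resp)
            return [m["id"] for m in body.get("data", [])]
        except (urllib.error.URLError, ConnectionError, TimeoutError):
            pass  # refused, still loading, or slow to answer: try again
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            return None
        time.sleep(min(poll_s, remaining))


# Example (run after starting the vLLM server in the background):
# print(wait_for_server())
```

The returned IDs also confirm the exact name to put in the "model" field of your requests.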

For broader context on how Qwen stacks up against peers at higher parameter counts, this side-by-side look at Gemma 4 31B vs Qwen3.5 27B will be useful while planning deployments.

Call The Local Endpoint

You can use curl to sanity-check the local server. This shows a basic chat completion call with temperature pinned for consistency.

```bash
curl http://localhost:8000/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{
    "model": "Qwen3.6-27B-Instruct",
    "messages": [
      {"role": "user", "content": "Your prompt here"}
    ],
    "temperature": 0.2,
    "max_tokens": 512
  }'
```

For Python, requests works fine with the same OpenAI-compatible route. This client keeps the interface minimal and easy to reuse.

```python
import requests

url = "http://localhost:8000/v1/chat/completions"

payload = {
    "model": "Qwen3.6-27B-Instruct",
    "messages": [
        {"role": "user", "content": "Your prompt here"}
    ],
    "temperature": 0.2,
    "max_tokens": 512,
}

resp = requests.post(url, json=payload, timeout=120)
resp.raise_for_status()  # surface HTTP errors instead of a confusing KeyError
print(resp.json()["choices"][0]["message"]["content"])
```

Vision Input Locally

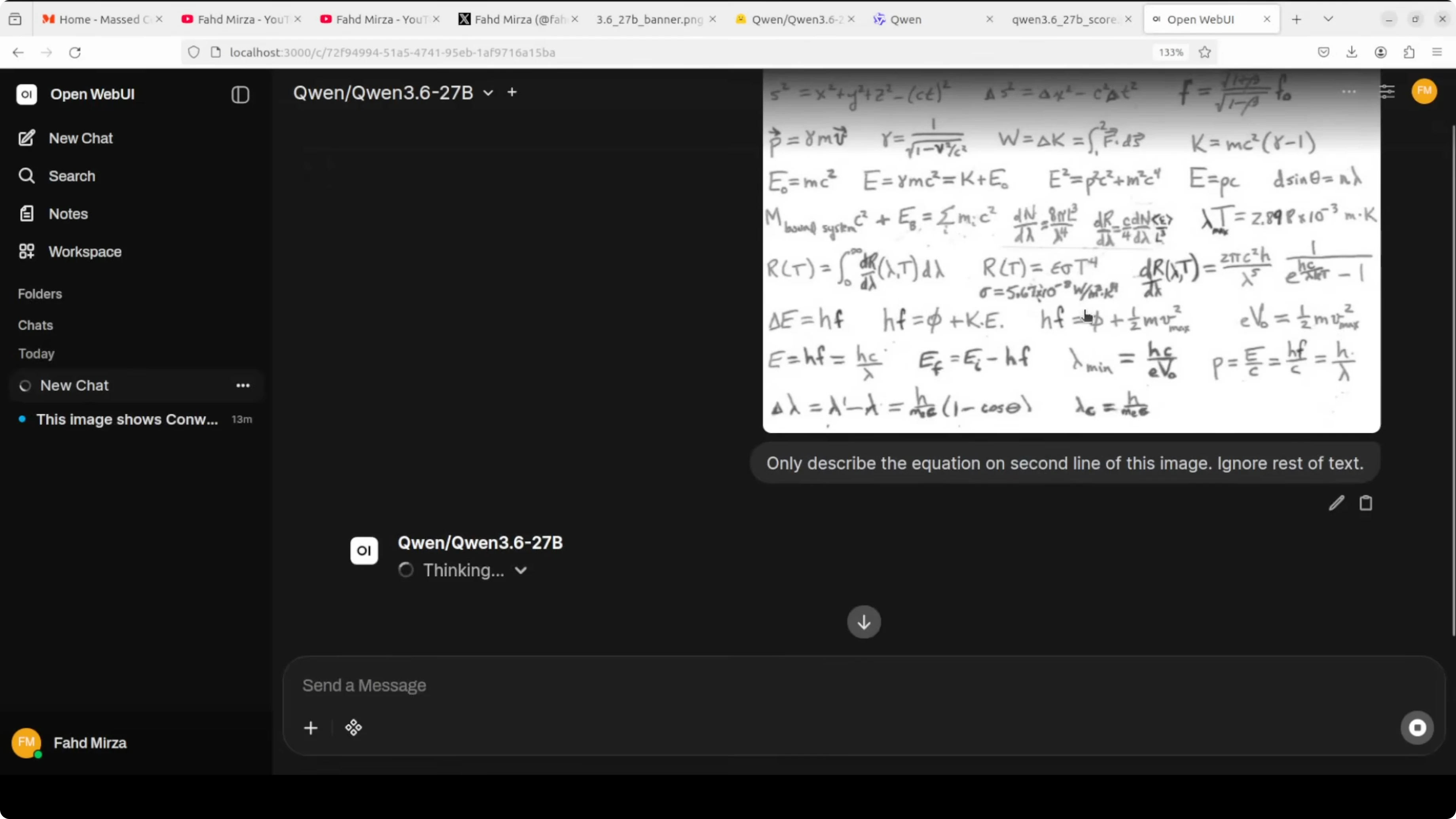

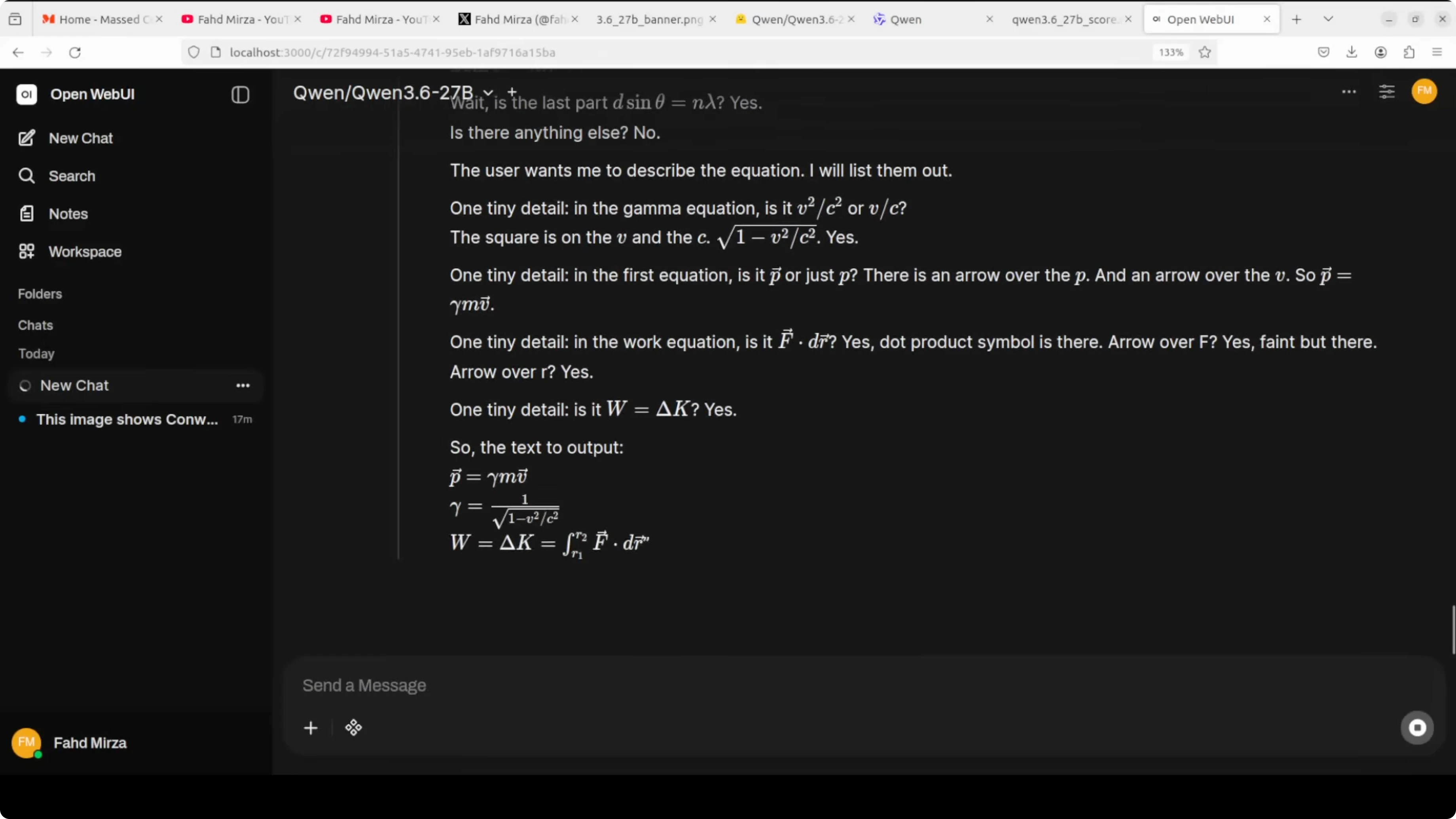

Qwen3.6-27B is natively multimodal for text and vision. vLLM accepts image inputs using the OpenAI content parts format. This is essential for OCR, charts, UI mocks, and code from screenshots.

```python
import base64
import mimetypes

import requests


def read_image_b64(path):
    """Encode a local image file as a data URL for the chat endpoint."""
    mime = mimetypes.guess_type(path)[0] or "image/png"
    with open(path, "rb") as f:
        return f"data:{mime};base64," + base64.b64encode(f.read()).decode()


messages = [
    {
        "role": "user",
        "content": [
            {"type": "text", "text": "Answer only about the second line of the image."},
            # Chat Completions expects "image_url" parts ("input_image" is the Responses API format).
            {"type": "image_url", "image_url": {"url": read_image_b64("/path/to/image.png")}},
        ],
    }
]

resp = requests.post(
    "http://localhost:8000/v1/chat/completions",
    json={"model": "Qwen3.6-27B-Instruct", "messages": messages, "temperature": 0.1, "max_tokens": 400},
    timeout=180,
)
resp.raise_for_status()
print(resp.json()["choices"][0]["message"]["content"])
```

If you are exploring an Ollama-based workflow with tool use for smaller Qwen variants, this quickstart on running Qwen3.5 with OpenClaw on Ollama gives a lightweight alternative.

Reasoning And Long Context

Qwen3.6-27B includes preserved thinking so the model keeps track of its own intermediate reasoning across the entire conversation. That is different from just peeking at the last message. Agentic coding tasks, multi-hop research, and long troubleshooting sessions benefit directly.
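The OpenAI-compatible endpoint is stateless, so the model can only build on earlier turns if the client sends them again with every request. Here is a minimal history-keeping sketch. The Conversation class is hypothetical, and it stores only role and content. If you run vLLM with a reasoning parser that moves the thinking into a separate field, check the model card for whether and how that field should be sent back. That behavior depends on the chat template.

```python
import copy


class Conversation:
    """Client-side history for a stateless OpenAI-compatible chat endpoint.

    Each request re-sends the whole message list, so earlier turns only
    stay in the model's context if the client keeps them.
    """

    def __init__(self, model, system=None, **params):
        self.model = model
        self.params = params  # extra request fields, e.g. temperature, max_tokens
        self.messages = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def payload(self, user_text):
        """Add a user turn and return the JSON body for /v1/chat/completions."""
        self.messages.append({"role": "user", "content": user_text})
        # Deep copy so later turns do not mutate a payload already handed out.
        return {"model": self.model, "messages": copy.deepcopy(self.messages), **self.params}

    def record(self, response_json):
        """Store the assistant reply from a chat-completions response and return its text."""
        message = response_json["choices"][0]["message"]
        # Only role and content are kept; any separate reasoning field is dropped.
        self.messages.append({"role": "assistant", "content": message.get("content") or ""})
        return message.get("content")


# Usage with the requests client from the endpoint section (needs the server running):
# conv = Conversation("Qwen3.6-27B-Instruct", temperature=0.2, max_tokens=512)
# resp = requests.post(url, json=conv.payload("Refactor utils.py"), timeout=120)
# print(conv.record(resp.json()))
```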

The model is comfortable at 32k context for day-to-day work. If you need very long documents, it can support up to 262k with the right configuration and memory. Reduce the context length when you need to fit a smaller GPU.
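To see why context length drives memory, consider the standard per-token KV-cache estimate for a full-attention transformer. It is 2 (keys and values) × layers × KV heads × head dimension × bytes per element. The layer and head numbers below are hypothetical placeholders, not the published Qwen3.6-27B config. Read the real values from the model's config.json, and expect a different figure if the architecture mixes in other attention types.

```python
# KV-cache sizing sketch for a standard full-attention transformer.
# The architecture numbers used below are HYPOTHETICAL placeholders,
# not the real Qwen3.6-27B config; take num_hidden_layers,
# num_key_value_heads and head_dim from the model's config.json.

GIB = 1024**3


def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_elem=2):
    """Bytes of keys and values cached per token across all layers (bf16 = 2 bytes)."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem  # 2 = K and V


def max_cached_tokens(kv_budget_gib, per_token_bytes):
    """How many tokens of KV cache fit in a budget given in GiB."""
    return int(kv_budget_gib * GIB // per_token_bytes)


# Hypothetical config, for illustration only.
per_token = kv_bytes_per_token(num_layers=64, num_kv_heads=8, head_dim=128)

for ctx in (32_768, 262_144):
    need_gib = ctx * per_token / GIB
    print(f"{ctx:>7} tokens -> {need_gib:.1f} GiB of KV cache for one sequence")
```

With these made-up numbers, a single 32k-token sequence needs 8 GiB of KV cache and a single 262k-token sequence needs 64 GiB. That is why long contexts call for either a bigger memory budget or a shorter --max-model-len.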

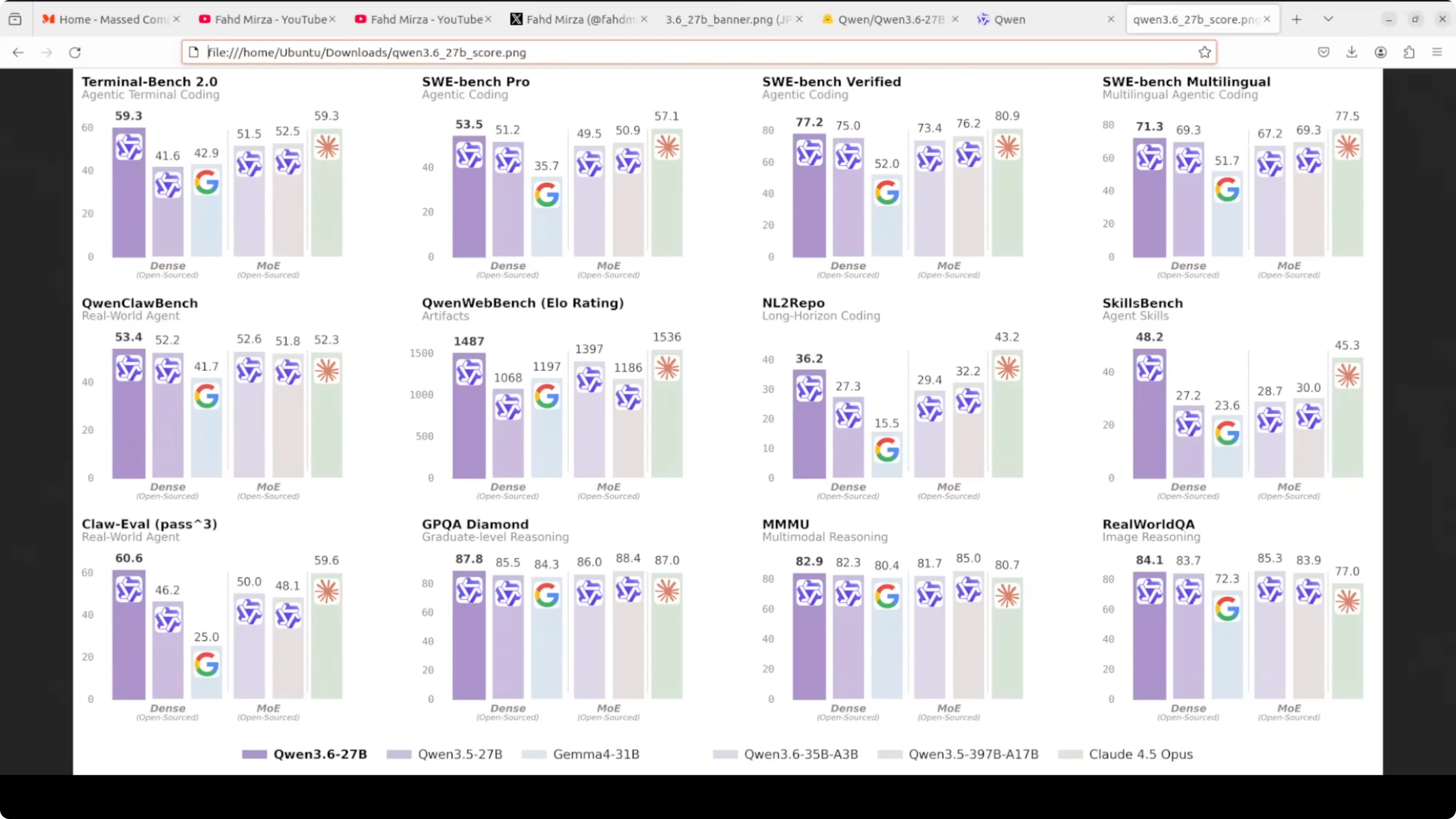

Benchmarks For Practical Coding

This is where it gets interesting. Qwen3.6-27B scores 77.2 on SWE-bench Verified, beating the much larger Qwen3.5 model on that test. On Skill Bench it scores 48.2, versus 28.7 for the prior baseline.

Across many coding tasks it sits just below Claude 3.5 Opus. That model is widely believed to be far larger than 27B, although Anthropic does not publish parameter counts. That is the whole point here: you get serious coding performance without massive hardware.

If you want a deeper, apples-to-apples comparison of precision modes and model sizes before committing GPU budget, see this practical review on precision trade-offs for Qwen3.6.

VRAM Notes And Throughput

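On an A100 80 GB, the full model load sits just under 74 GB of VRAM. The bf16 weights alone account for roughly 54 GB (27 billion parameters at 2 bytes each). vLLM pre-allocates most of the remaining budget as KV cache, and that cache is what serves the 32k context. Lowering --max-model-len reduces how much KV cache a single request can need, which helps the model fit on smaller cards.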
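A rough budget shows where that memory goes. This sketch treats the card as 80 GiB and takes the --gpu-memory-utilization 0.95 value from the launch command. It counts bf16 weights at 2 bytes per parameter. It ignores the vision encoder, the CUDA context, and activation overhead, so real numbers will differ somewhat. The helper name is hypothetical.

```python
# Back-of-envelope VRAM budget for vLLM on a single 80 GiB card.
# vLLM claims up to --gpu-memory-utilization of the card; what the weights
# (and activations) do not use becomes KV-cache space.

GIB = 1024**3


def vllm_budget(total_gib, utilization, n_params, bytes_per_param=2):
    """Return (budget, weights, leftover) in GiB for a given card and model."""
    budget = total_gib * utilization
    weights = n_params * bytes_per_param / GIB
    return budget, weights, budget - weights


budget, weights, leftover = vllm_budget(total_gib=80, utilization=0.95, n_params=27e9)
print(
    f"budget {budget:.1f} GiB | bf16 weights {weights:.1f} GiB | "
    f"left for KV cache + activations {leftover:.1f} GiB"
)
```

Under these assumptions, roughly 50 GiB goes to weights and about 25 GiB is left for the KV cache and activations.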

Throughput is steady because dense models avoid expert routing overhead. Plan your batch sizes and max tokens based on how interactive your workload is. If you push longer contexts and higher batch sizes, monitor token latency closely.
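Streaming makes token latency, especially time to first token, easy to watch. This sketch parses OpenAI-style server-sent events, which are "data: {...}" lines ending with "data: [DONE]". vLLM's compatible server emits these when "stream": true is set. The function names are hypothetical.

```python
import json
import time


def stream_deltas(lines):
    """Yield text deltas from OpenAI-style server-sent-event lines."""
    for line in lines:
        if isinstance(line, bytes):
            line = line.decode("utf-8")
        if not line.startswith("data: "):
            continue  # blank separators and keep-alive comments
        data = line[len("data: "):].strip()
        if data == "[DONE]":
            break
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            text = (choice.get("delta") or {}).get("content")
            if text:
                yield text


def measure_stream(lines, start, clock=time.perf_counter):
    """Return (time_to_first_text_s, total_s, full_text) for one streamed reply.

    `start` should be the clock reading taken just before sending the request.
    """
    first = None
    parts = []
    for text in stream_deltas(lines):
        if first is None:
            first = clock() - start
        parts.append(text)
    return first, clock() - start, "".join(parts)


# Usage against the local server (needs it running; url/payload as in the requests example):
# start = time.perf_counter()
# resp = requests.post(url, json={**payload, "stream": True}, stream=True, timeout=300)
# ttft, total, text = measure_stream(resp.iter_lines(), start)
# print(f"first text after {ttft:.2f}s, full reply after {total:.2f}s")
```

Logging these two numbers while you raise batch sizes or context lengths shows when interactivity starts to suffer.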

Stable, Practical Use With Qwen3.6-27B

This release prioritizes reliability in real-world tasks. The native multimodal stack, preserved thinking, and long context make it a strong daily driver. It is built for shipping, not just demos.

If you need a smaller stepping stone before rolling out 27B across your org, this hands-on article on fine-tuning Qwen3.5 8B locally shows how to adapt a compact model to your domain. For more coder-first workflows and agents, also see the local setup notes on Qwen3 Coder Next.

Practical Use Cases

- Agentic coding: preserved thinking for multi-file refactors and long-running tool plans.
- OCR plus math: extracting specific lines or regions from notes, whiteboards, and scanned papers with strict instructions.
- Multimodal product research: reading charts, UI mocks, and code snippets in screenshots.
- Multilingual support: accurate translation and tone across many languages for content teams.

If your decision includes comparing it against nearby large models, keep this reference handy on Gemma 4 31B vs Qwen3.5 27B to balance cost and quality.

Final Thoughts

Qwen3.6-27B is dense, predictable, and deployable. Coding scores are strong, memory is manageable on a single A100 80 GB, and the feature set targets real work.

Run it locally with vLLM, keep context reasonable, and lean on preserved thinking for long sessions. Do more with less, and keep it stable.
