Table Of Content

- DeepSeek V3.2 vs Claude Opus 4.5: test setup

- DeepSeek V3.2 vs Claude Opus 4.5: PRD results

- DeepSeek PRD review

- Opus PRD review

- PRD verdict

- DeepSeek V3.2 vs Claude Opus 4.5: build results

- Setup in Cursor

- DeepSeek build walkthrough

- Opus build walkthrough

- Build verdict

- DeepSeek V3.2 vs Claude Opus 4.5: comparison overview

- Use cases

- DeepSeek V3.2: pros and cons

- Claude Opus 4.5: pros and cons

- Step-by-step: reproducing the build

- Code examples

- Final thoughts

DeepSeek V3.2 vs Claude Opus 4.5

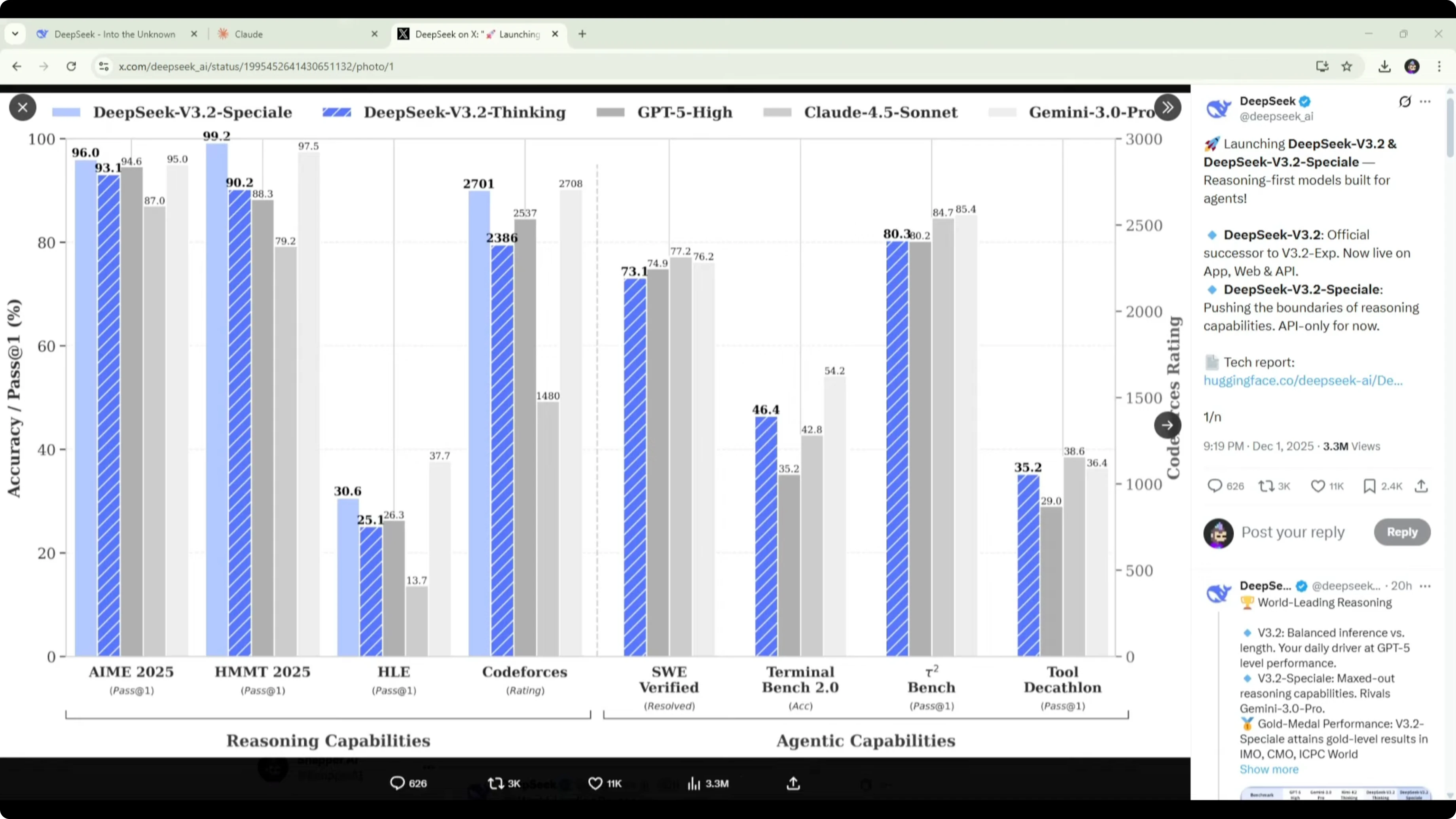

DeepSeek just released V3.2. Their published benchmarks show strong results across a range of tests against models like GPT-5 High, Gemini 3 Pro, and Claude Sonnet 4.5. The one missing comparison was Opus 4.5, so I ran a head-to-head across two tasks.

First, I asked both models to write a PRD for a space dashboard. Then I asked each model to turn its own PRD into a working dashboard and evaluated the results.

DeepSeek V3.2 has been getting attention for how it handles reasoning. Most reasoning models work in two separate phases: they think through the problem in a hidden step, then execute by writing code, calling an API, or performing a tool action. DeepSeek says V3.2 changes that by reasoning within tool use, adjusting its plan on the fly while generating code or making calls. They call this "thinking in tool use."

The idea is that the model can plan, revise, and correct itself as it is actively building something rather than thinking first and executing after.

That is what I set out to test: how DeepSeek V3.2 performs against Opus 4.5 on both planning and implementation.

For a broader comparison across adjacent models and tiers, see this review of related matchups that include V3.2 and Opus 4.5 in a single place: deep model comparison that includes DeepSeek V3.2 and Opus 4.5.

DeepSeek V3.2 vs Claude Opus 4.5: test setup

I asked for a personal, mission-control-style space dashboard that pulls live space data from free public APIs. The scope included ISS position, upcoming rocket launches, astronomy imagery, and people in space. I used the same open-ended prompt for both models and let each decide the structure, features, and technical approach.

This tested how well each model understands what a good PRD should include. It also tested how each model prioritizes features and reasons through UI and technical requirements without hand-holding. Once the PRDs were written, I moved to implementation and evaluated the finished dashboards.

DeepSeek V3.2 vs Claude Opus 4.5: PRD results

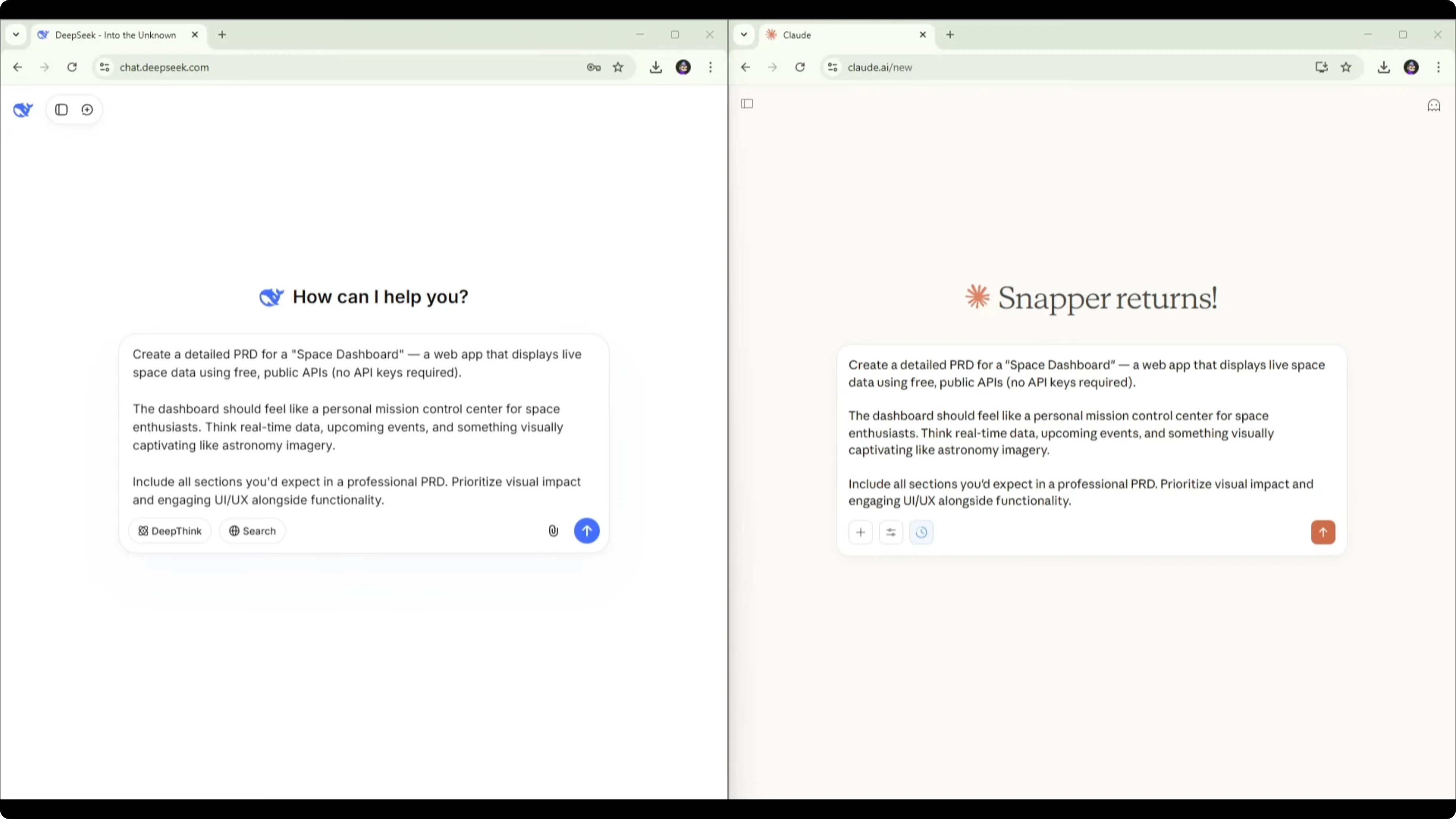

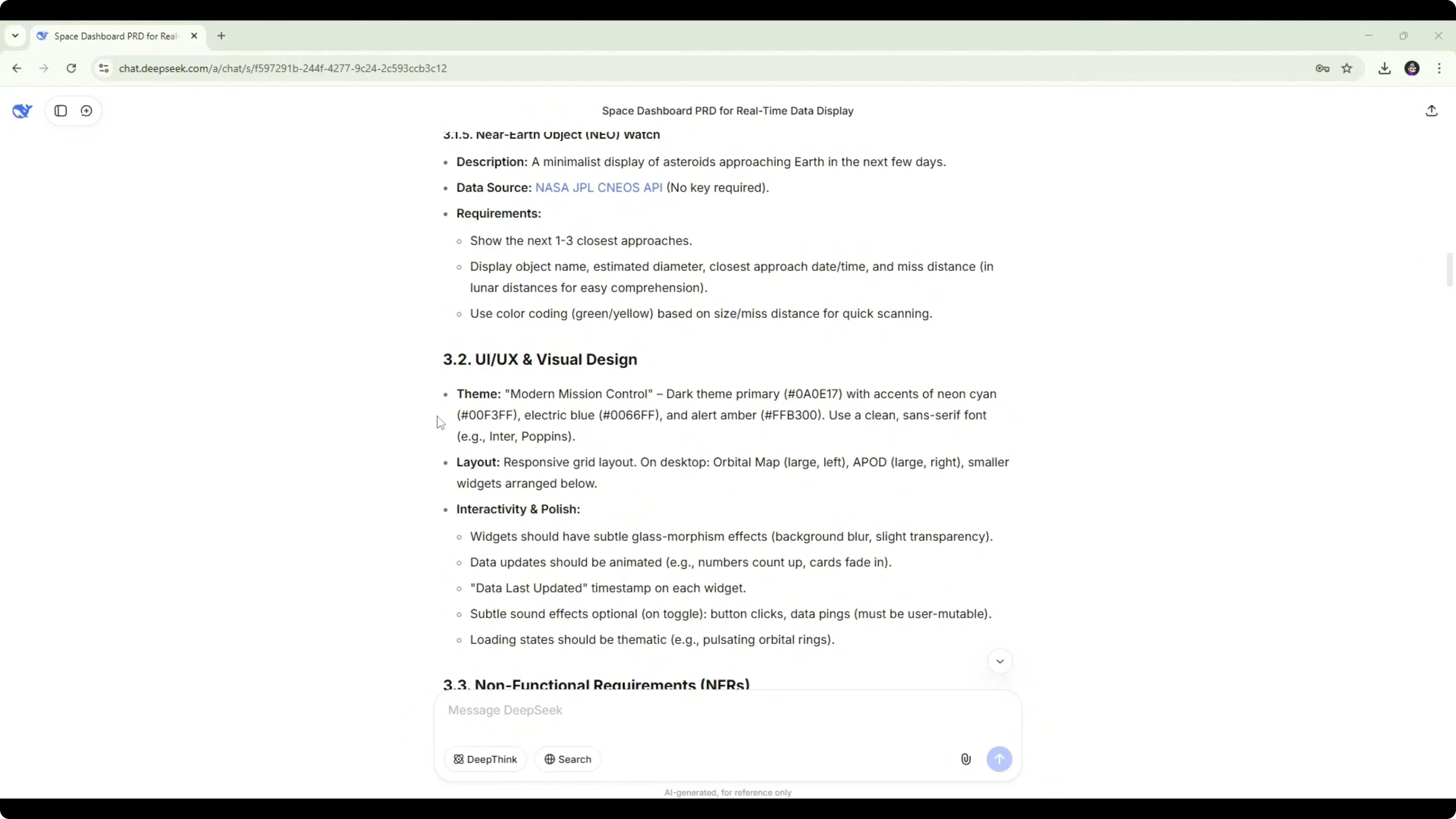

DeepSeek PRD review

The DeepSeek PRD had a clear structure, with a vision and objectives that met the brief. It included user personas and stories, such as Alex the enthusiast, which helped frame why the features exist. The features were solid and referenced the required data sources.

Features included ISS tracking with an interactive globe, a live orbital map, people in space, Astronomy Picture of the Day (APOD), a launch countdown and schedule, and a near-Earth object watch.

The UI and visual design section was specific, with a theme, hex codes, fonts, and a proposed layout. The technical specs recommended React or Vue for the front end, Three.js for the orbital globe, D3 or Chart.js for charts, and Tailwind CSS for styling.
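To make the "Three.js for the orbital globe" recommendation concrete, here is a minimal sketch of the math a globe widget needs: converting the ISS latitude and longitude into a point on a sphere. The function name is hypothetical, and the axis convention (y toward the North Pole, longitude 0 on +x, east toward -z) is an assumption to check against how your globe texture is oriented.

```javascript
// Convert geographic coordinates (degrees) to a 3D point on a sphere.
// Assumed convention: y points to the North Pole, longitude 0 lies on +x,
// and positive (east) longitudes rotate toward -z. Flip signs if your
// texture is oriented differently.
function latLonToSpherePoint(latDeg, lonDeg, radius = 1) {
  const lat = (latDeg * Math.PI) / 180;
  const lon = (lonDeg * Math.PI) / 180;
  return {
    x: radius * Math.cos(lat) * Math.cos(lon),
    y: radius * Math.sin(lat),
    z: -radius * Math.cos(lat) * Math.sin(lon),
  };
}
```

For example, with Open Notify's string fields, `latLonToSpherePoint(parseFloat(pos.latitude), parseFloat(pos.longitude), 1.02)` gives a point slightly above a globe of radius 1, which can then be passed to `new THREE.Vector3(p.x, p.y, p.z)` to position a marker.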

There was no real prioritization across features. The roadmap was vague: it listed what was out of scope for V1 and a future roadmap, but gave no concrete implementation plan for building the MVP. It also lacked a risk assessment for common issues like API downtime.

It felt like a starting-point PRD that would need to be fleshed out. It touched the important areas with usable information but stayed brief. It also displayed an incorrect date at the top, which is minor but still an error worth noting.

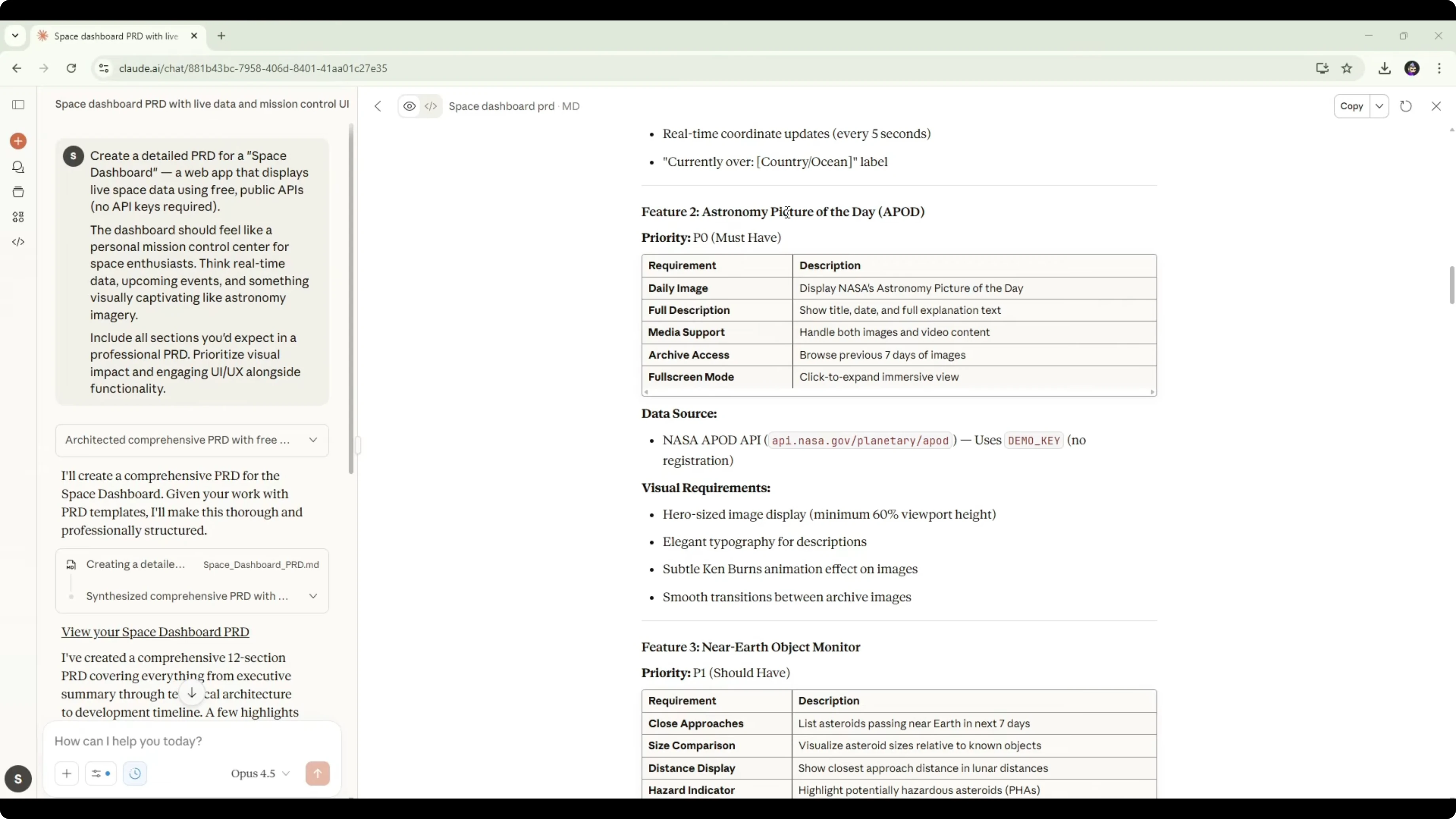

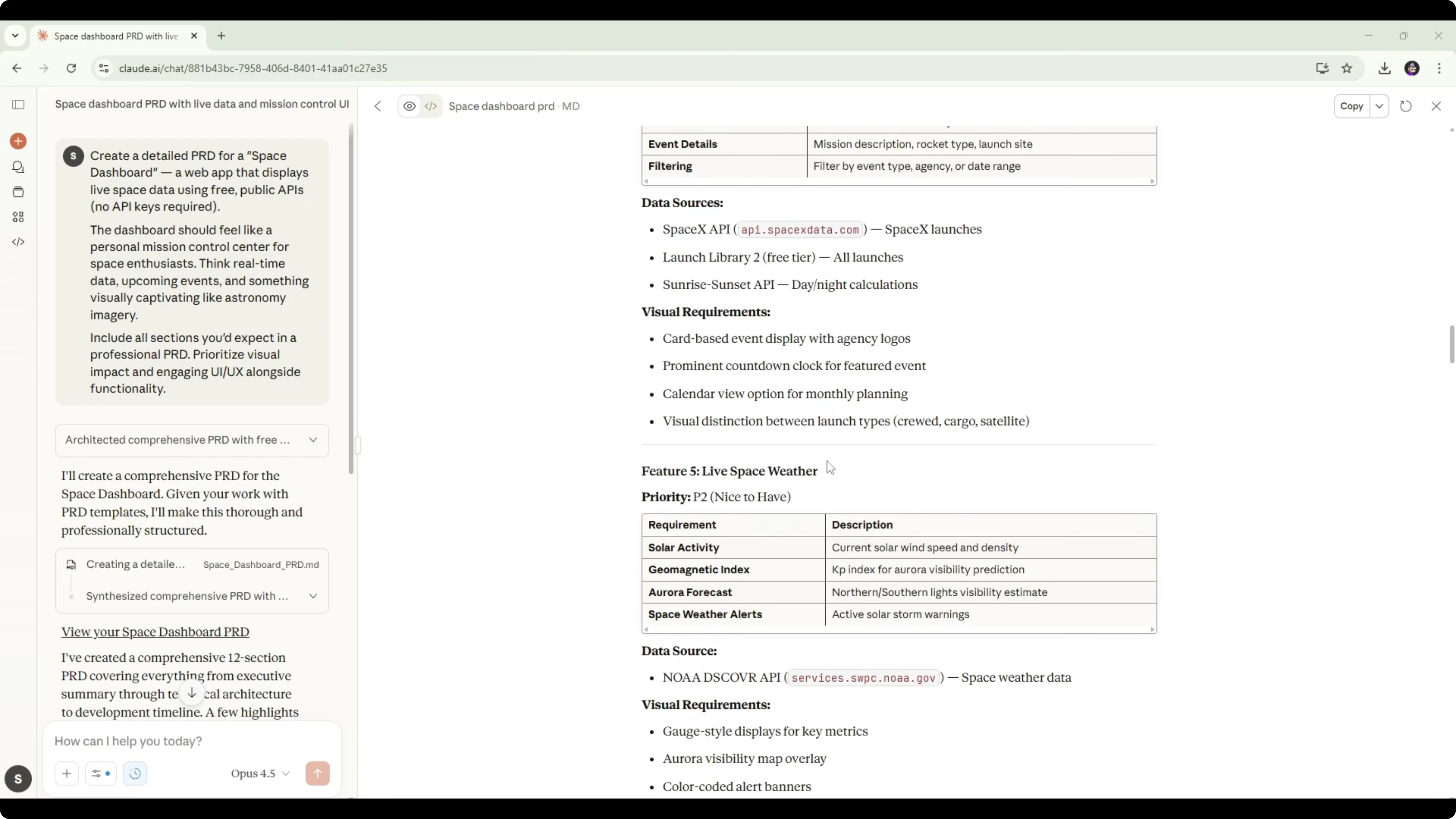

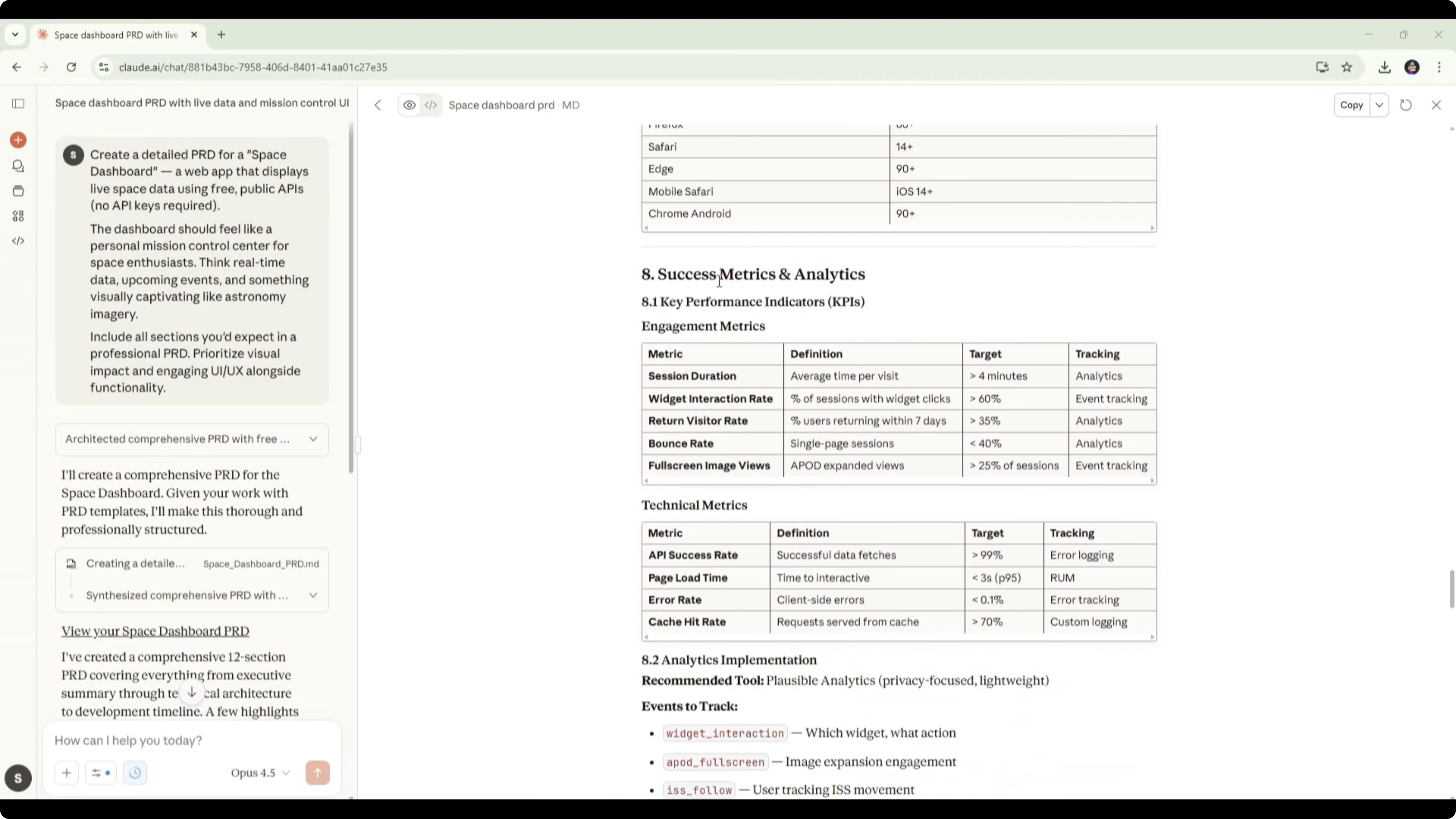

Opus PRD review

Opus 4.5 produced a more detailed and structured PRD. It opened with an executive summary, a problem statement, and a more thorough goals and objectives section. It defined target users and included a persona similar to the space enthusiast concept.

Feature requirements were broken into a core MVP with clear prioritization. P0 must-haves included an ISS live tracker, Astronomy Picture of the Day, near-Earth object monitoring, a space events calendar, and live space weather. Each feature listed its required APIs and described functional and non-functional requirements.

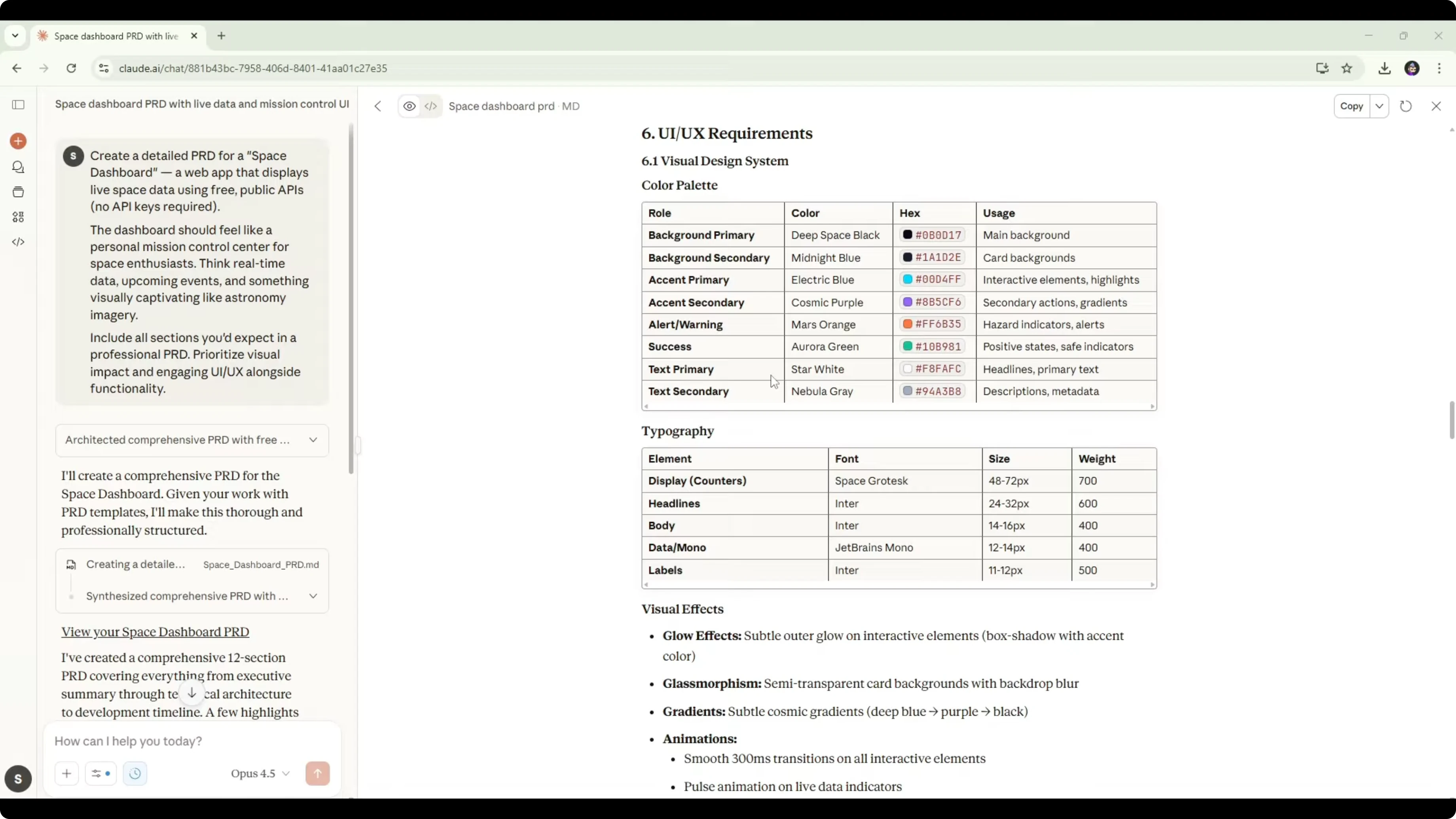

The dashboard layout section included clear diagrams of how components should be placed. The UI and UX requirements went deeper on hex codes, typography, and interaction details that a developer could follow directly. The technical specification section listed every API with endpoints, rate limits, and data refresh guidance, which is extremely helpful during implementation.

It also included an architecture overview built on React, with a flow diagram, specific technologies per layer, and the rationale for each choice. The PRD covered performance requirements, success metrics, and analytics to measure outcomes. A full development roadmap detailed phases, tasks, and time estimates, followed by a risks and mitigations section and future considerations.

PRD verdict

Both models understood the assignment and produced legitimate PRDs. DeepSeek provided a solid foundation with good features, specific design direction, and usable technical specs. Opus went further with stronger structure, clear prioritization, risk planning, and a development roadmap that a team could use immediately.

For a real product, Opus 4.5 delivered a more complete PRD on the first attempt. DeepSeek’s version worked as a first draft that would need additional iterations to reach production readiness. For more context on how Opus 4.6 stacks up against 4.5 in similar planning tasks, see this follow-up analysis: Opus 4.6 vs 4.5 comparison.

DeepSeek V3.2 vs Claude Opus 4.5: build results

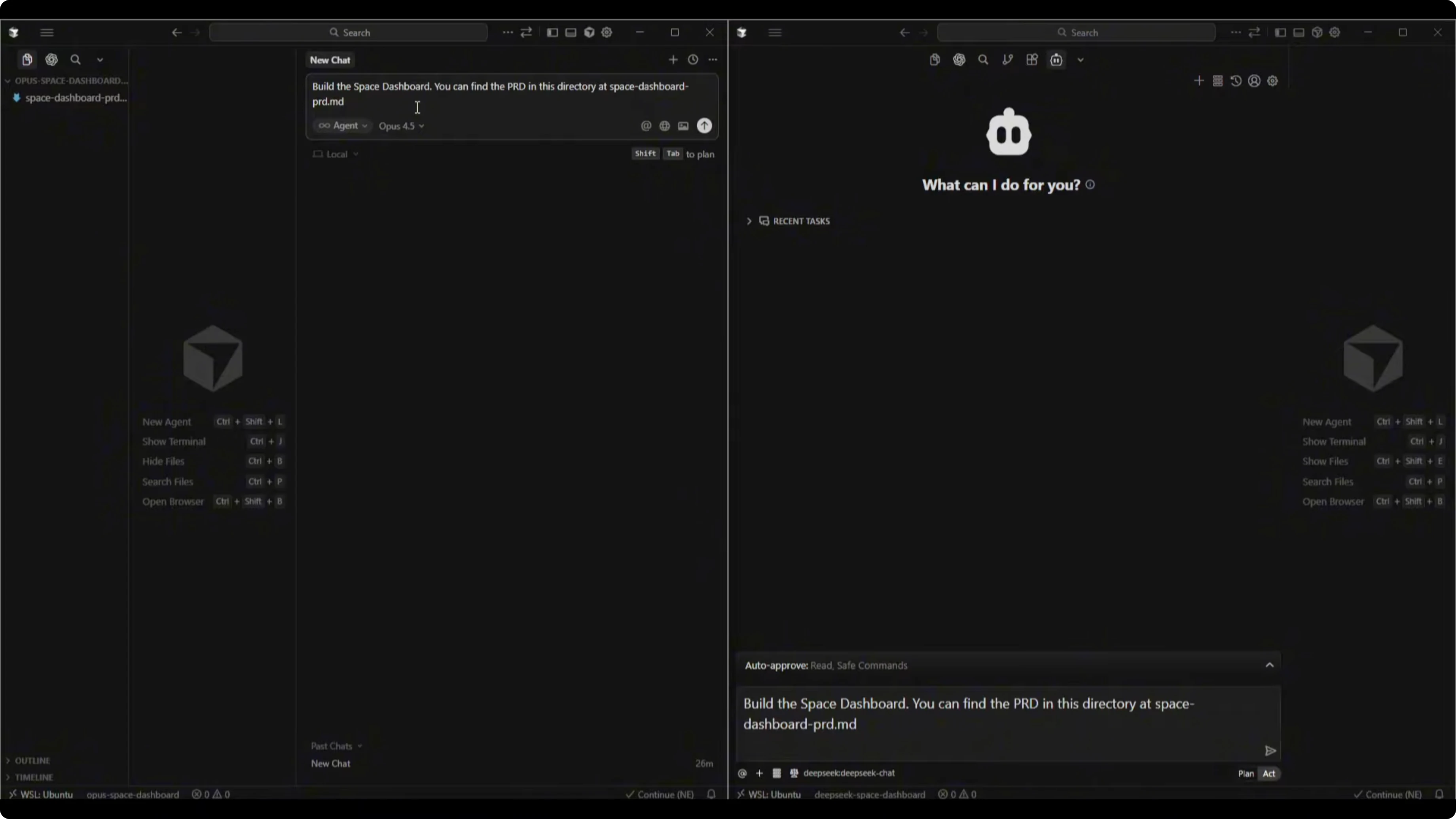

Setup in Cursor

I set up two workspaces in Cursor, one per model, each with that model's PRD uploaded. For DeepSeek I used the DeepSeek API with the V3.2 chat model, and for the other workspace I selected Opus 4.5. The build prompt was simple: "Build the space dashboard. You can find the PRD in this directory at space-dashboard.md."
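For readers who want to call the model outside Cursor, here is a minimal sketch of the request shape. It assumes DeepSeek's OpenAI-compatible chat completions endpoint at `https://api.deepseek.com/chat/completions`, the model name `deepseek-chat` for the V3.2 chat model, and an API key in a `DEEPSEEK_API_KEY` environment variable; the helper names are hypothetical.

```javascript
// Assumed endpoint and model name for DeepSeek's OpenAI-compatible API.
const DEEPSEEK_URL = "https://api.deepseek.com/chat/completions";

// Build the URL and fetch options for a single-turn chat request.
function buildDeepSeekRequest(prompt, apiKey, model = "deepseek-chat") {
  return {
    url: DEEPSEEK_URL,
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({
        model,
        messages: [{ role: "user", content: prompt }],
        stream: false,
      }),
    },
  };
}

// Send the request and return the assistant's reply text (Node 18+ global fetch).
async function askDeepSeek(prompt) {
  const { url, init } = buildDeepSeekRequest(prompt, process.env.DEEPSEEK_API_KEY);
  const res = await fetch(url, init);
  if (!res.ok) throw new Error(`DeepSeek API error: HTTP ${res.status}`);
  const data = await res.json();
  return data.choices[0].message.content;
}
```

For example, `askDeepSeek("Build the space dashboard. You can find the PRD in this directory at space-dashboard.md.").then(console.log)` sends the same build prompt. On its own, the API only returns text; it does not edit files the way an agent inside Cursor does.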

Both models completed the build. I had to reprompt both to fix minor issues that surfaced while running in dev mode and connecting to the APIs. Build times were similar, with Opus 4.5 finishing about five to eight minutes faster.

For a look at speed, cost, and quality tradeoffs across Opus and newer GPT versions in similar coding workflows, this breakdown is useful: speed, cost, and quality comparison for GPT-5.2 vs Opus 4.5.

DeepSeek build walkthrough

The DeepSeek V3.2 dashboard displayed the time and date in the top right. It included a live orbital map widget for the ISS with coordinates, velocity, and altitude, though the visualization was a circular orbital map rather than a world map. The Astronomy Picture of the Day widget displayed a title and description with a link to nasa.gov, but the image preview did not load and the preview content did not match the linked page.
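One plausible cause of a preview that does not match its link is pointing the link at `astropix.html`, which always shows the current picture, while the widget renders whatever date the API returned. Another common reason an APOD image fails to load is that some days are videos, whose `url` is typically an embed link that an `<img>` tag cannot display. A minimal sketch of two helpers, assuming the APOD API response fields `date` (`YYYY-MM-DD`), `media_type`, and `url`; the helper names are hypothetical:

```javascript
// Build the permanent APOD archive page for an API date ("YYYY-MM-DD"),
// e.g. "2024-01-15" -> https://apod.nasa.gov/apod/ap240115.html
function apodPageUrl(isoDate) {
  const [year, month, day] = isoDate.split("-");
  return `https://apod.nasa.gov/apod/ap${year.slice(2)}${month}${day}.html`;
}

// Decide what the preview should render. Only "image" entries get an <img>;
// anything else (such as a video embed) falls back to a plain link.
function apodPreview(apod) {
  const link = apodPageUrl(apod.date);
  if (apod.media_type === "image") {
    return { kind: "image", src: apod.url, link };
  }
  return { kind: "link", src: null, link };
}
```

Because both the preview and the link derive from the same `date` field, they cannot drift apart even if the API response is cached or a day old.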

The people in space widget displayed the current number of people in space and where they are, such as the International Space Station. The next launch widget showed a countdown that had already ended and did not roll over to a future launch automatically. The near-Earth object watch summarized three close approaches with distances and risk labels.
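The countdown issue comes down to selecting the launch once instead of re-selecting it as time passes. A minimal sketch of a roll-over, assuming each launch object carries a `name` and an ISO 8601 `net` (no-earlier-than) timestamp, as Launch Library 2 responses from The Space Devs do; the function names are hypothetical:

```javascript
// Return the soonest launch whose NET is still in the future, or null.
// Re-running this on every timer tick makes the countdown roll over
// to the next launch once the current one's NET passes.
function nextLaunch(launches, nowMs = Date.now()) {
  const upcoming = launches
    .map((l) => ({ ...l, netMs: new Date(l.net).getTime() }))
    .filter((l) => Number.isFinite(l.netMs) && l.netMs > nowMs)
    .sort((a, b) => a.netMs - b.netMs);
  return upcoming[0] ?? null;
}

// Format remaining milliseconds as "Dd HHh MMm SSs", clamping negatives to zero.
function formatCountdown(ms) {
  const total = Math.max(0, Math.floor(ms / 1000));
  const d = Math.floor(total / 86400);
  const h = Math.floor((total % 86400) / 3600);
  const m = Math.floor((total % 3600) / 60);
  const s = total % 60;
  const pad = (n) => String(n).padStart(2, "0");
  return `${d}d ${pad(h)}h ${pad(m)}m ${pad(s)}s`;
}
```

A widget would call `nextLaunch` and then `formatCountdown(launch.netMs - Date.now())` on each tick, showing a "no upcoming launches" state when `nextLaunch` returns `null`.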

A footer noted that data updates every 30 seconds and listed the public APIs from NASA, Open Notify, and The Space Devs. It nailed the brief and produced a functional space dashboard, with a couple of issues that would need fixes: the reminder button did nothing, and the APOD image preview did not render.
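A fixed 30-second refresh works out to 3,600,000 / 30,000 = 120 requests per hour for each source, which can exceed the free-tier limits of some public APIs. A minimal sketch for picking a per-source interval; the source names and hourly limits below are hypothetical placeholders, to be replaced with each provider's documented values:

```javascript
const MS_PER_HOUR = 60 * 60 * 1000;

// Requests per hour generated by polling every `intervalMs`.
function requestsPerHour(intervalMs) {
  return MS_PER_HOUR / intervalMs;
}

// Smallest polling interval that stays within `limitPerHour` requests.
function minPollIntervalMs(limitPerHour) {
  return Math.ceil(MS_PER_HOUR / limitPerHour);
}

// Use the desired interval unless it would exceed the source's limit.
function effectivePollIntervalMs(desiredMs, limitPerHour) {
  return Math.max(desiredMs, minPollIntervalMs(limitPerHour));
}

// Hypothetical hourly limits, for illustration only.
const sources = { fastSource: 1000, slowSource: 15 };
for (const [name, limit] of Object.entries(sources)) {
  // Prints "fastSource 30000", then "slowSource 240000" (4 minutes).
  console.log(name, effectivePollIntervalMs(30000, limit));
}
```

The design choice is simply to let slow, tightly limited feeds such as launch schedules refresh less often than fast-moving data like the ISS position, rather than forcing every widget onto one global interval.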

Opus build walkthrough

The Opus 4.5 dashboard felt more polished and modern in presentation. Astronomy Picture of the Day loaded correctly, with the image visible and a description of the Horsehead Nebula. The time and date were in the top right, and the dashboard name, SpaceDash Mission Control, appeared in the top left.

The ISS tracker rendered an actual world map with the station's position, which felt stronger from a UI standpoint than a circular orbital representation. Coordinates were displayed, and the interaction matched what you would expect from a tracker view. The humans in space widget showed a count and a clickable crew list with names, such as Tracy Caldwell Dyson, and role details.
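The difference between the two ISS widgets is largely a projection choice. A minimal sketch of placing the station on a flat world map, assuming an equirectangular map image (longitudes -180 to 180 left to right, latitudes 90 to -90 top to bottom) and Open Notify's `iss_position` object, whose `latitude` and `longitude` are strings; the function names are hypothetical:

```javascript
// Map latitude/longitude (degrees) to pixel coordinates on an
// equirectangular world map image of the given width and height.
function projectToMap(latDeg, lonDeg, width, height) {
  return {
    x: ((lonDeg + 180) / 360) * width,
    y: ((90 - latDeg) / 180) * height,
  };
}

// Open Notify returns coordinates as strings, so parse them before projecting.
function issMarker(issPosition, width, height) {
  return projectToMap(
    parseFloat(issPosition.latitude),
    parseFloat(issPosition.longitude),
    width,
    height,
  );
}
```

The returned `x` and `y` can position an absolutely placed marker over the map image, updated each time the position is refreshed.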

The near-Earth object monitor tracked three objects and included size descriptors such as bus-sized or plane-sized. Space weather displayed the Kp index and solar wind data. Upcoming launches appeared outdated, listing past launches such as Crew-5 from October 6, 2022, which suggests the API feed or the query parameters for recency filtering need adjustment.
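Both near-Earth object widgets boil down to sorting close approaches by miss distance and attaching a label. A minimal sketch against NASA's NeoWs feed endpoint (`https://api.nasa.gov/neo/rest/v1/feed`), assuming its usual response shape: `near_earth_objects` keyed by date, with `estimated_diameter.meters`, `is_potentially_hazardous_asteroid`, and `close_approach_data[].miss_distance.kilometers` as a string. The size thresholds behind labels like "bus-sized" are hypothetical, not what either model used.

```javascript
// Hypothetical size buckets (upper bound in meters, label).
const SIZE_LABELS = [
  [15, "bus-sized"],
  [70, "plane-sized"],
  [300, "stadium-sized"],
];

function sizeLabel(diameterM) {
  for (const [maxM, label] of SIZE_LABELS) {
    if (diameterM <= maxM) return label;
  }
  return "larger than a stadium";
}

// Flatten a NeoWs feed response and return the `count` closest approaches.
function closestApproaches(feed, count = 3) {
  return Object.values(feed.near_earth_objects)
    .flat()
    .filter((neo) => neo.close_approach_data.length > 0)
    .map((neo) => {
      const approach = neo.close_approach_data[0];
      const d = neo.estimated_diameter.meters;
      const avgDiameterM = (d.estimated_diameter_min + d.estimated_diameter_max) / 2;
      return {
        name: neo.name,
        missKm: parseFloat(approach.miss_distance.kilometers),
        hazardous: neo.is_potentially_hazardous_asteroid,
        size: sizeLabel(avgDiameterM),
      };
    })
    .sort((a, b) => a.missKm - b.missKm)
    .slice(0, count);
}
```

The `hazardous` flag comes straight from NASA's data, so a risk label built on it reflects the API rather than a threshold the dashboard invents.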

Build verdict

Both dashboards worked after a couple of small fixes prompted in Cursor. Opus 4.5 finished a bit faster and delivered a more professional UI with correct APOD rendering and a richer ISS map experience. DeepSeek V3.2 met the brief and was functional, but a few elements needed refinement to match the polish of Opus.

For teams comparing multiple frontier models for app builds like this, you may also find this broader matchup helpful: GLM-4.7 vs Opus 4.5 vs GPT-5.2 overview.

DeepSeek V3.2 vs Claude Opus 4.5: comparison overview

| Area | DeepSeek V3.2 | Claude Opus 4.5 |
|---|---|---|
| PRD structure | Clear and concise with personas, features, UI theme, and tech stack | Very detailed with executive summary, goals, prioritized MVP, diagrams, and rationale |
| Prioritization | Not present across features | Present with P0 flags and phased breakdown |
| Technical depth | Solid framework-level picks and data sources | API-by-API details with endpoints, rate limits, refresh cadence, and architecture diagram |
| Risk and metrics | Missing risk assessment and success metrics | Includes risks, mitigations, performance targets, and analytics |
| Roadmap | Vague future roadmap without build phases | Full development roadmap with phases, tasks, and time estimates |
| Build speed | Similar total time, finished slightly slower | Finished 5-8 minutes faster in this run |
| UI quality | Functional widgets with a basic orbital map | More polished design with an interactive world map and correct APOD rendering |
| Data accuracy | A few mismatches like APOD preview and ended launch countdown | Mostly accurate but outdated launches list due to feed or query issues |
| Overall outcome | Solid starting point and workable dashboard | Production-ready PRD and more professional UI in the first pass |

Use cases

DeepSeek V3.2 fits fast ideation and coding where you want a concise plan and a working prototype. It also suits scenarios where reasoning interleaved with tool use during code generation can catch errors and adjust the plan mid-build. For broader context on coding performance, see this code-focused matchup: GPT-5.2 Codex vs Opus 4.5 for coding.

Opus 4.5 fits production-grade planning where you need a PRD that is detailed enough to hand to a team. It also excels when polished UI, clear prioritization, and a well-specified architecture are required on the first attempt. If you want a single page that collates multiple head-to-heads across these families, this is a handy index: model comparisons featuring DeepSeek V3.2 and Opus.

DeepSeek V3.2: pros and cons

Pros include a clear foundation with personas, a solid feature list, and specific UI and tech recommendations. It showed effective tool-aware reasoning during the build and produced a functional dashboard that matched the brief. The approach is quick to iterate on when you plan to refine details later.

Cons include missing prioritization, no risk assessment, and a vague roadmap. The build surfaced a couple of UX gaps such as a non-functional reminder button and a missing APOD image preview. It also made a minor accuracy slip with the date in the PRD.

Claude Opus 4.5: pros and cons

Pros include a production-ready PRD with prioritization, risks, metrics, and a full roadmap. It listed API details with endpoints and rate limits and provided a clean architecture diagram with rationale. The build felt more polished, with an interactive map for the ISS and correct APOD rendering.

Cons include outdated launch data in the final dashboard, which needs query or source adjustments. It also required a couple of reprompts to resolve minor connection or caching issues during the build, though it still wrapped up faster than the DeepSeek run in this test.

For more on how Opus versions compare head-to-head, this focused look is useful reading: how Opus 4.6 compares to Opus 4.5.

Step-by-step: reproducing the build

Create two workspaces in Cursor, one for DeepSeek V3.2 and one for Opus 4.5.

Upload each model’s PRD into its respective project directory, saved as space-dashboard.md.

Configure the chat model for each workspace: V3.2 through the DeepSeek API in one, Opus 4.5 in the other.

Open a new chat in each workspace and issue the prompt: "Build the space dashboard. You can find the PRD in this directory at space-dashboard.md."

Allow the model to scaffold the project, install dependencies, and start the dev server.

Test the dashboard locally and capture any errors that surface during render or API calls.

Reprompt the model to fix specific issues such as dependency mismatches, CORS errors, outdated endpoints, or failing image loads.

Iterate until all widgets render correctly and data updates at the desired interval.
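Capturing API errors during testing is easier when every widget surfaces HTTP failures explicitly instead of trying to render an error body as data. A minimal sketch of a shared helper; the name `fetchJson`, the 10-second default timeout, and the injectable `fetchImpl` parameter are assumptions for illustration, not code from either build:

```javascript
// Fetch JSON and fail loudly on HTTP errors (for example 429 rate limiting
// or 403 from a bad API key). Aborts the request after `timeoutMs`.
// `fetchImpl` defaults to the global fetch (browsers, Node 18+) and can be
// swapped for a stub in tests.
async function fetchJson(url, { timeoutMs = 10000, fetchImpl = fetch } = {}) {
  const controller = new AbortController();
  const timer = setTimeout(() => controller.abort(), timeoutMs);
  try {
    const res = await fetchImpl(url, { signal: controller.signal });
    if (!res.ok) {
      throw new Error(`${url} responded with HTTP ${res.status}`);
    }
    return await res.json();
  } finally {
    // Always clear the timer so it does not fire after the request settles.
    clearTimeout(timer);
  }
}
```

Each widget can call it inside its `useEffect` and store the caught error in component state, so a failing source shows a message instead of a blank card, and the message text is what you paste into the reprompt.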

Code examples

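Fetch Astronomy Picture of the Day in React:

```jsx
import { useEffect, useState } from "react";

// Assumes Next.js-style public env vars (in Vite, use import.meta.env instead).
// NASA's shared DEMO_KEY works for light testing.
const API_KEY = process.env.NEXT_PUBLIC_NASA_KEY || "DEMO_KEY";

export function ApodCard() {
  const [apod, setApod] = useState(null);
  const [error, setError] = useState(null);

  useEffect(() => {
    fetch(`https://api.nasa.gov/planetary/apod?api_key=${API_KEY}`)
      .then((r) => {
        if (!r.ok) throw new Error(`APOD request failed: HTTP ${r.status}`);
        return r.json();
      })
      .then(setApod)
      .catch(setError);
  }, []);

  if (error) return <div>Could not load APOD: {error.message}</div>;
  if (!apod) return <div>Loading APOD...</div>;

  // Link to the dated archive page (apYYMMDD.html) so it matches the preview.
  const [y, m, d] = apod.date.split("-");
  const pageUrl = `https://apod.nasa.gov/apod/ap${y.slice(2)}${m}${d}.html`;

  return (
    <div>
      <h3>{apod.title}</h3>
      {/* Video days return an embed URL, which an <img> cannot render */}
      {apod.media_type === "image" && <img src={apod.url} alt={apod.title} />}
      <p>{apod.explanation}</p>
      <a href={pageUrl}>View on nasa.gov</a>
    </div>
  );
}
```

Fetch ISS position: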

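```jsx
import { useEffect, useState } from "react";

export function IssTracker() {
  const [pos, setPos] = useState(null);

  useEffect(() => {
    // Open Notify is served over plain HTTP, so browsers may block this call
    // as mixed content when the dashboard itself is served over HTTPS.
    const load = () =>
      fetch("http://api.open-notify.org/iss-now.json")
        .then((r) => r.json())
        .then((data) => setPos(data.iss_position))
        .catch(console.error);

    load();
    // Refresh every 30 seconds and stop polling when the component unmounts.
    const id = setInterval(load, 30000);
    return () => clearInterval(id);
  }, []);

  if (!pos) return <div>Tracking ISS...</div>;

  return (
    <div>
      <p>Latitude: {pos.latitude}</p>
      <p>Longitude: {pos.longitude}</p>
    </div>
  );
}
```

Fetch people in space: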

```jsx
import { useEffect, useState } from "react";

export function PeopleInSpace() {
  const [data, setData] = useState(null);

  useEffect(() => {
    // Open Notify is plain HTTP; browsers may block it on an HTTPS page.
    fetch("http://api.open-notify.org/astros.json")
      .then((r) => r.json())
      .then(setData)
      .catch(console.error);
  }, []);

  if (!data) return <div>Loading crew...</div>;

  return (
    <div>
      <p>Humans in space: {data.number}</p>
      {/* Each entry has a name and the craft they are aboard */}
      {data.people?.map((p) => (
        <div key={p.name}>
          {p.name} - {p.craft}
        </div>
      ))}
    </div>
  );
}
```

Fetch upcoming launches with The Space Devs:

```jsx
import { useEffect, useState } from "react";

const LAUNCHES_URL =
  "https://ll.thespacedevs.com/2.2.0/launch/upcoming/" +
  "?mode=detailed&limit=5&hide_recent_previous=true&ordering=net";

export function UpcomingLaunches() {
  const [launches, setLaunches] = useState([]);

  useEffect(() => {
    fetch(LAUNCHES_URL)
      .then((r) => r.json())
      .then((data) => {
        // Fallback: drop any launch whose NET has already passed.
        const now = Date.now();
        setLaunches((data.results || []).filter((l) => new Date(l.net).getTime() >= now));
      })
      .catch(console.error);
  }, []);

  return (
    <div>
      <h3>Upcoming Launches</h3>
      {launches.map((l) => (
        <div key={l.id}>
          <strong>{l.name}</strong>
          <div>{new Date(l.net).toLocaleString()}</div>
          <div>{l.pad?.location?.name}</div>
        </div>
      ))}
    </div>
  );
}
```

The query uses the upcoming endpoint, hides recent past launches, and orders results by NET to avoid outdated entries. The client-side filter on new Date(l.net) >= Date.now() is a fallback in case the feed still returns older entries.

Final thoughts

Opus 4.5 took the points on both tests. It produced a detailed, production-ready PRD you could hand to a developer, and its dashboard looked more polished with the interactive ISS map and correct APOD rendering. DeepSeek V3.2 delivered a solid foundation and a working dashboard, but it felt like a first draft on the planning side and needed a couple more iterations to match Opus on completeness and clarity.

If your priority is speed to a functional prototype with tool-aware reasoning during code generation, DeepSeek V3.2 will get you there. If your priority is a plan you can execute immediately and a higher polish level on the first pass, Opus 4.5 is the safer pick. For additional matchups that include GPT-5.2 and others in this tier, start here: speed, cost, and quality insights for GPT-5.2 vs Opus 4.5.

