Table of Contents

- How LocoOperator-4B Surpassed Local AI Models? purpose

- How LocoOperator-4B Surpassed Local AI Models? local setup

- How LocoOperator-4B Surpassed Local AI Models? install

- How LocoOperator-4B Surpassed Local AI Models? structured output

- How LocoOperator-4B Surpassed Local AI Models? fastapi test

- How LocoOperator-4B Surpassed Local AI Models? driver code

- How LocoOperator-4B Surpassed Local AI Models? run flow

- How LocoOperator-4B Surpassed Local AI Models? results

- Final thoughts

How LocoOperator-4B Surpassed Local AI Models?

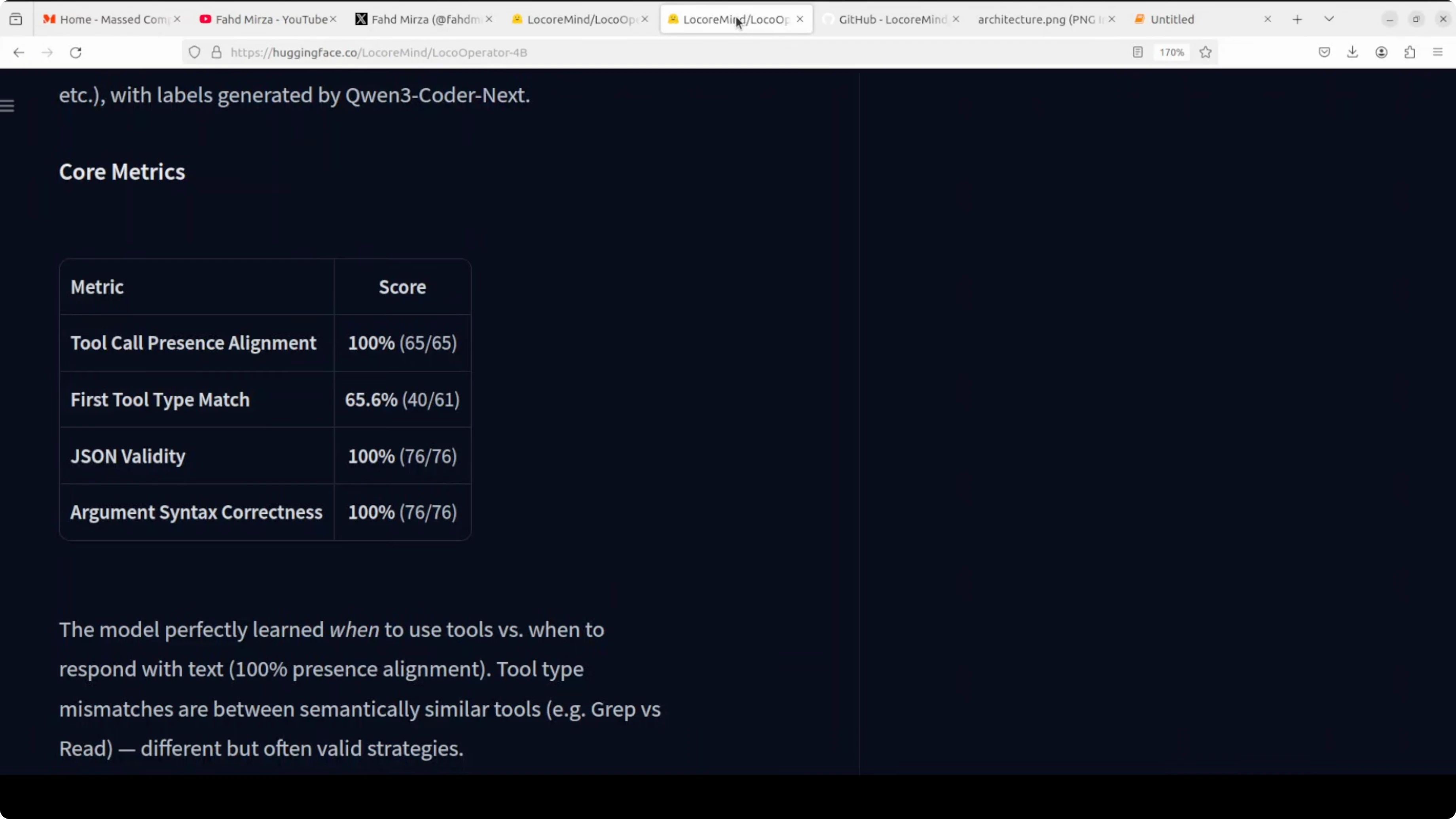

A 4 billion parameter model just outperformed its own teacher on structured output. The teacher is Qwen 3 Coder Next, a strong coding model. The student, LocoOperator-4B, hit 100 percent on argument syntax correctness.

The teacher had 11 tool calls with completely empty arguments. The student had zero. That is the student beating the teacher.

How LocoOperator-4B Surpassed Local AI Models? purpose

This model has one job, and only one: exploring codebases. It reads files, searches code, navigates project structures, and generates structured tool call JSON. You can think of it as a local sub agent that sits inside a bigger agentic loop.

The big cloud model running on OpenRouter handles the thinking and planning. Whenever it needs to dig into a code base, it hands that off to LocoOperator-4B. That is a hypothetical scenario, but it captures the intended split of work.

If you want the bigger picture of agent loops, see agentic basics.

How LocoOperator-4B Surpassed Local AI Models? local setup

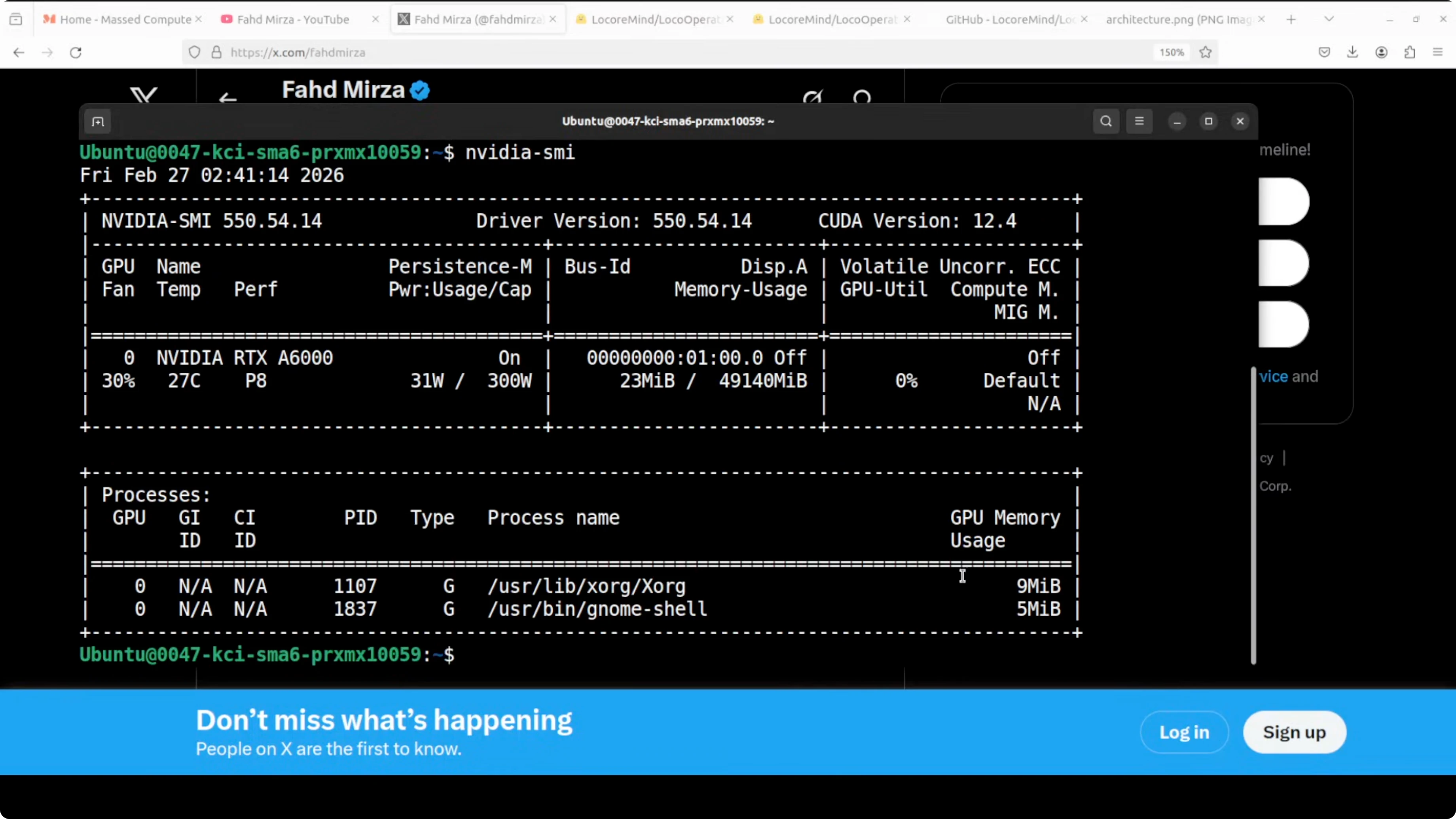

I am installing and running it on an Ubuntu system with one Nvidia RTX 6000 GPU and 48 GB of VRAM. That is far more than this model needs, and a GGUF variant is also available if you have less VRAM.

You can run the model via llama.cpp or Transformers. It runs locally and offline, so there is no API cost. It is surprisingly good at its one job.
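For the llama.cpp route, one option is to serve the GGUF file with `llama-server`, which exposes an OpenAI-style chat endpoint, and call it over HTTP. The sketch below is a minimal illustration under assumptions: the server is already running on the default local port 8080, the GGUF path is a placeholder you fill in yourself, and the helper names `build_chat_payload` and `post_chat` are made up for this example.

```python
import json
import urllib.request

# Assumption: llama-server was started separately, for example
#   llama-server -m /path/to/model.gguf --port 8080
LLAMA_SERVER_URL = "http://127.0.0.1:8080/v1/chat/completions"


def build_chat_payload(system: str, user: str, max_tokens: int = 400) -> dict:
    """Build an OpenAI-style chat request body with greedy decoding."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
        ],
        "temperature": 0.0,  # mirrors do_sample=False in the Transformers route
        "max_tokens": max_tokens,
    }


def post_chat(payload: dict, url: str = LLAMA_SERVER_URL, timeout: float = 120.0) -> str:
    """POST the payload to the local server and return the assistant text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]
```

Calling `post_chat(build_chat_payload(system, user))` only works while the server is up, so the payload builder is kept separate and can be checked on its own.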

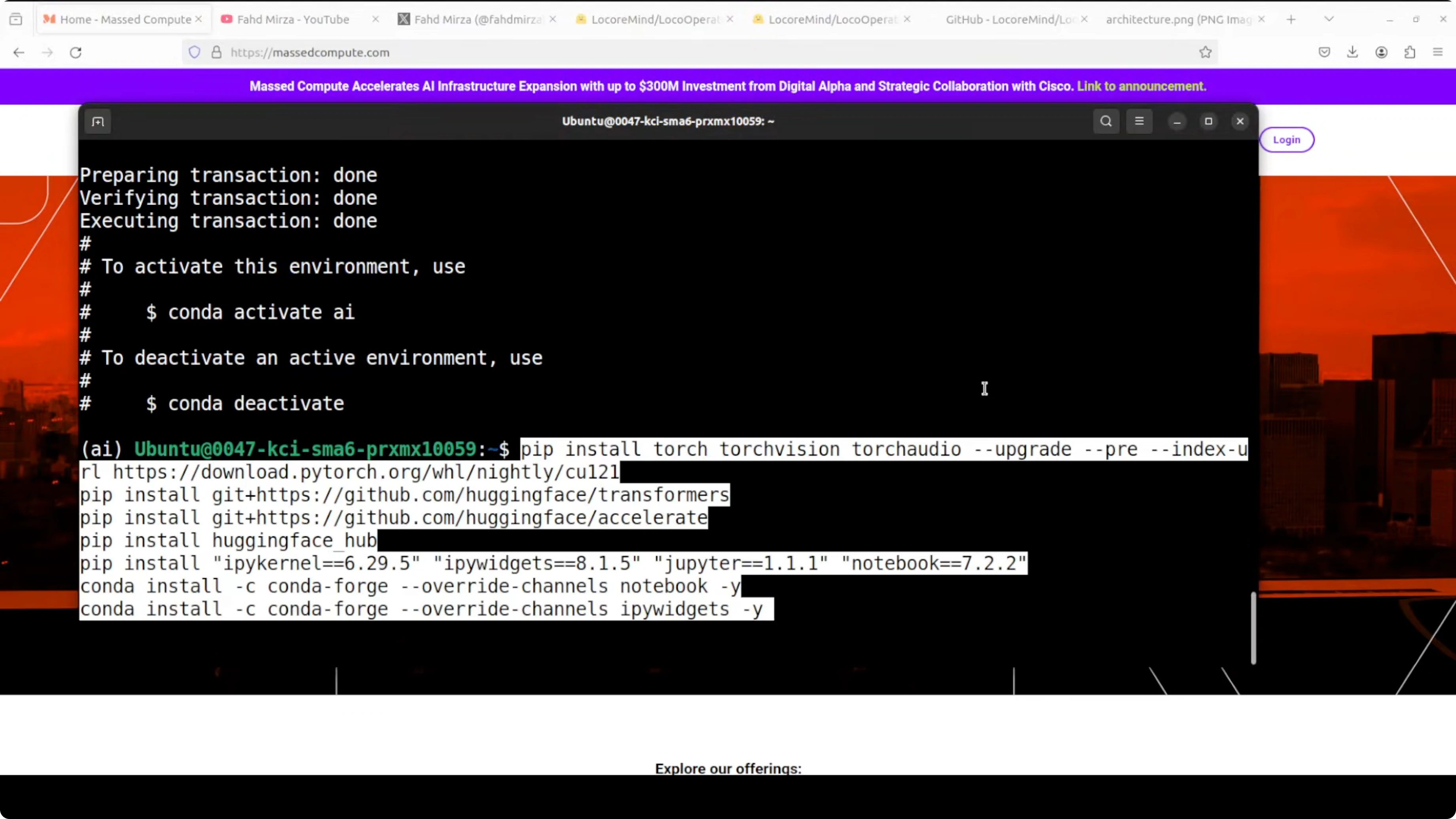

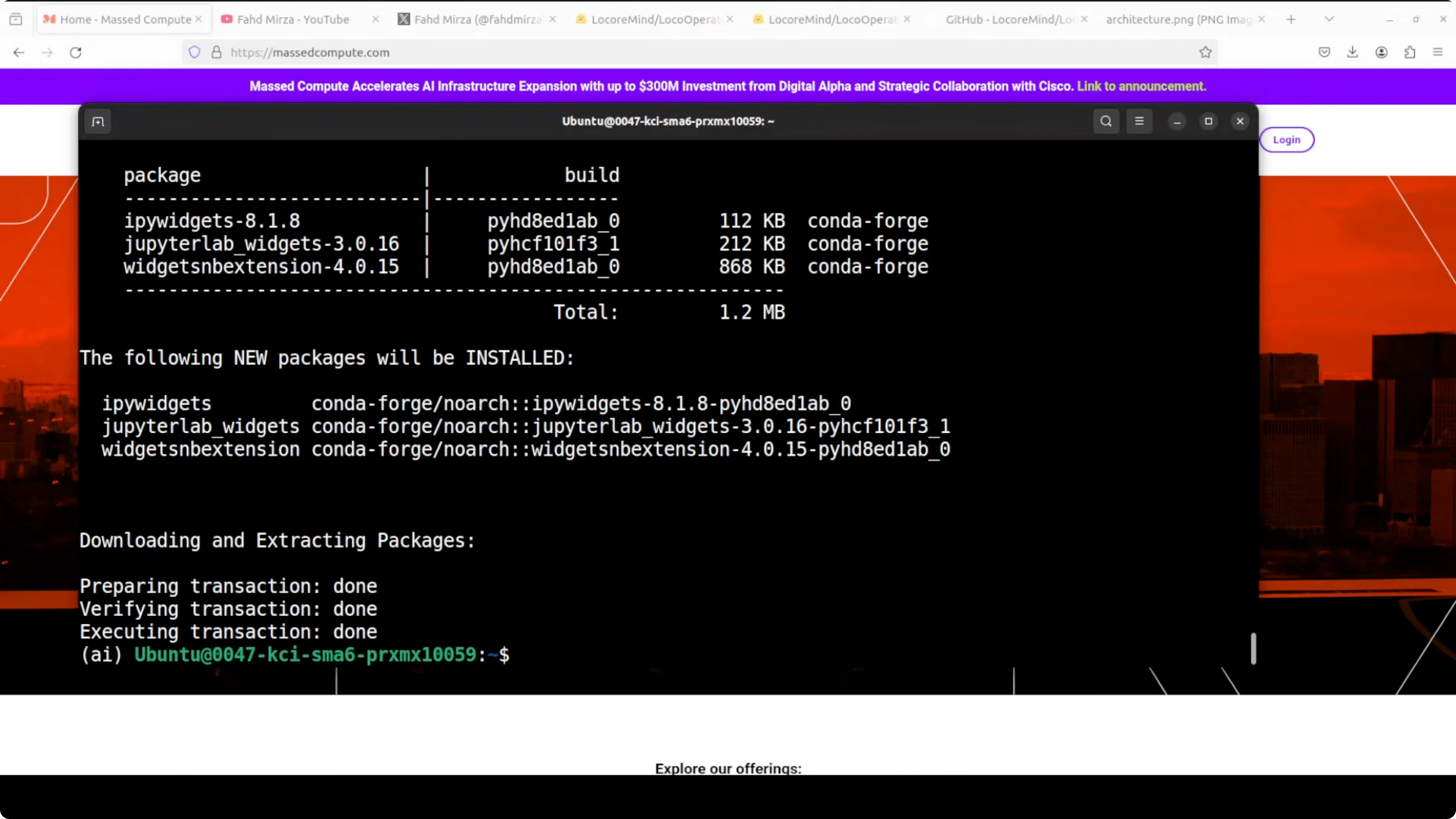

How LocoOperator-4B Surpassed Local AI Models? install

Step 1: Install prerequisites.

```bash
python -m venv .venv
source .venv/bin/activate
pip install --upgrade pip
pip install torch transformers accelerate sentencepiece safetensors
pip install jupyter
```

Step 2: Launch Jupyter.

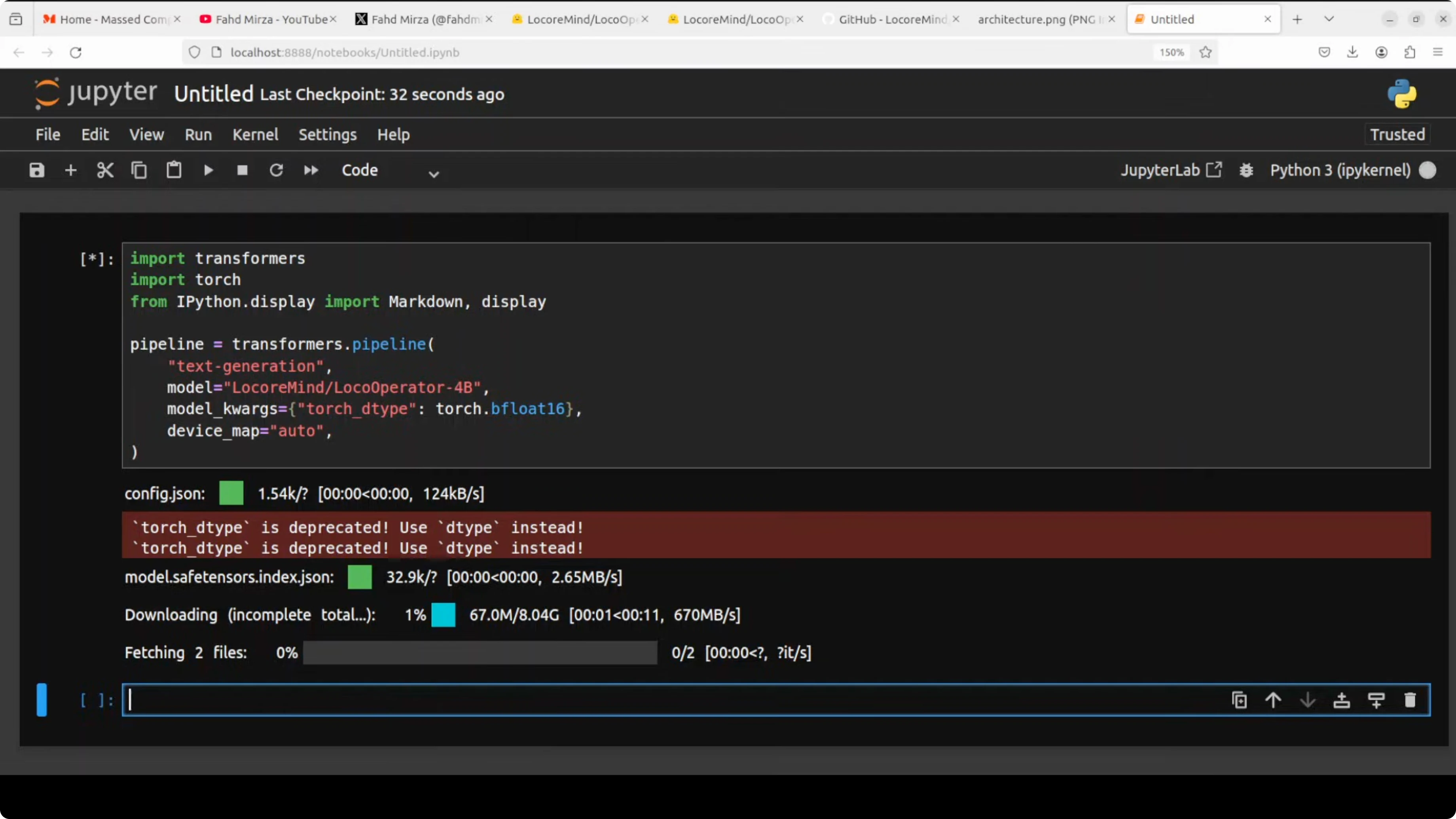

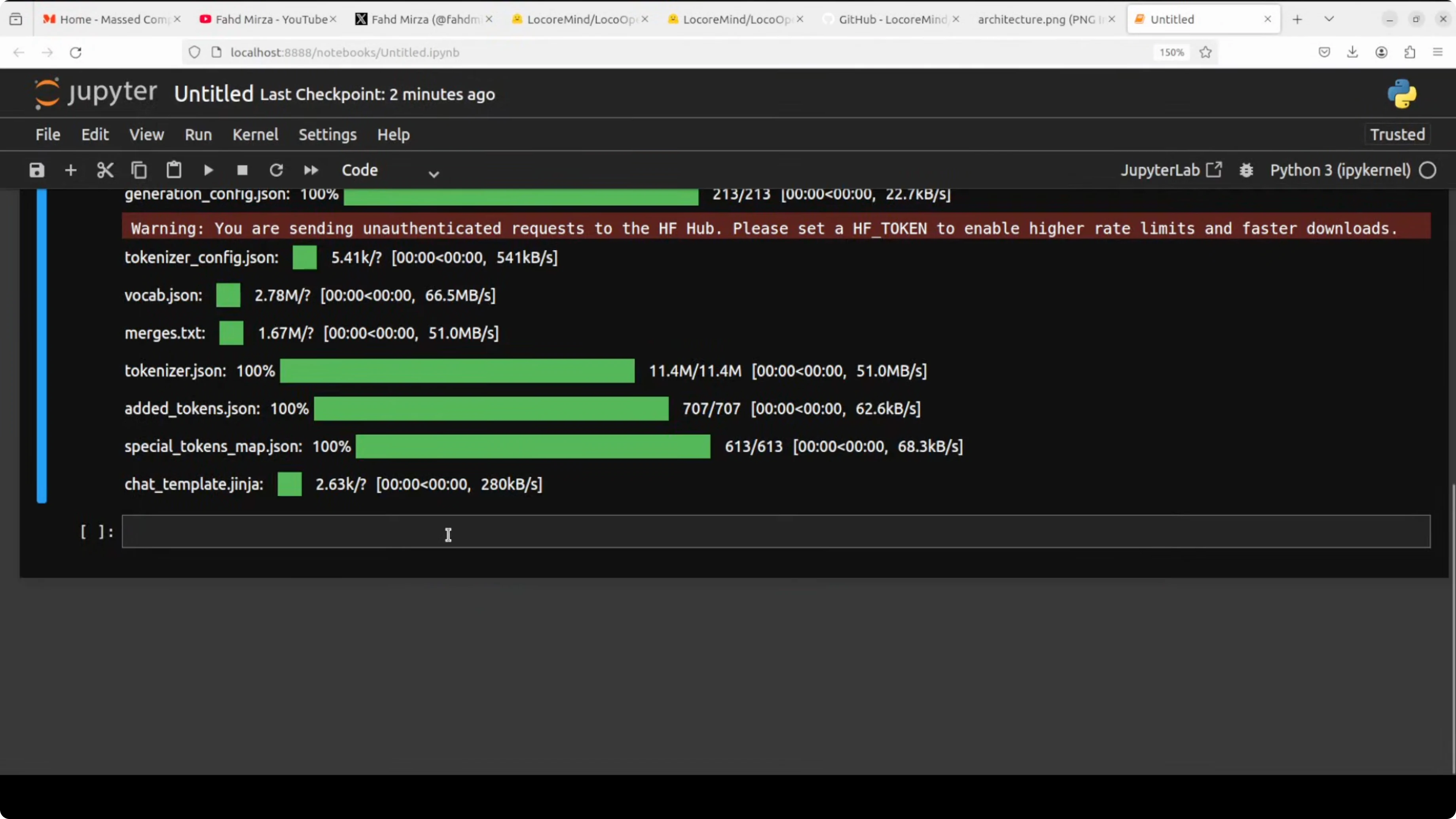

```bash
jupyter notebook
```

Step 3: Download the model via Transformers and place it on the GPU.

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
import torch

model_id = "locooperator-4b"  # replace with the exact repo id

tokenizer = AutoTokenizer.from_pretrained(model_id, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    torch_dtype=torch.float16,
    device_map="auto",
    trust_remote_code=True,
)
```

The model download is a touch over 8 GB in size. After loading, VRAM consumption is close to 8 GB. You can check VRAM from Python or the shell.

```python
allocated_gb = torch.cuda.memory_allocated() / 1e9
print(f"VRAM allocated: {allocated_gb:.2f} GB")
```

```bash
nvidia-smi
```
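The roughly 8 GB figure lines up with simple arithmetic: float16 stores 2 bytes per parameter, so about 4 billion parameters come to about 8 GB of weights. The sketch below is a back-of-the-envelope estimate only. It assumes exactly 4e9 parameters, ignores activations and the KV cache, and the 4-bit line is an idealized number, not the size of any particular GGUF file.

```python
def estimate_weight_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate weight footprint in GB, using 1 GB = 1e9 bytes."""
    return num_params * bytes_per_param / 1e9


# Assumption: a round 4e9 parameters; the real count will differ slightly.
fp16_gb = estimate_weight_gb(4e9, 2)    # float16: 2 bytes per parameter
q4_gb = estimate_weight_gb(4e9, 0.5)    # idealized 4-bit: half a byte per parameter

print(f"float16 weights: ~{fp16_gb:.1f} GB")
print(f"idealized 4-bit weights: ~{q4_gb:.1f} GB")
```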

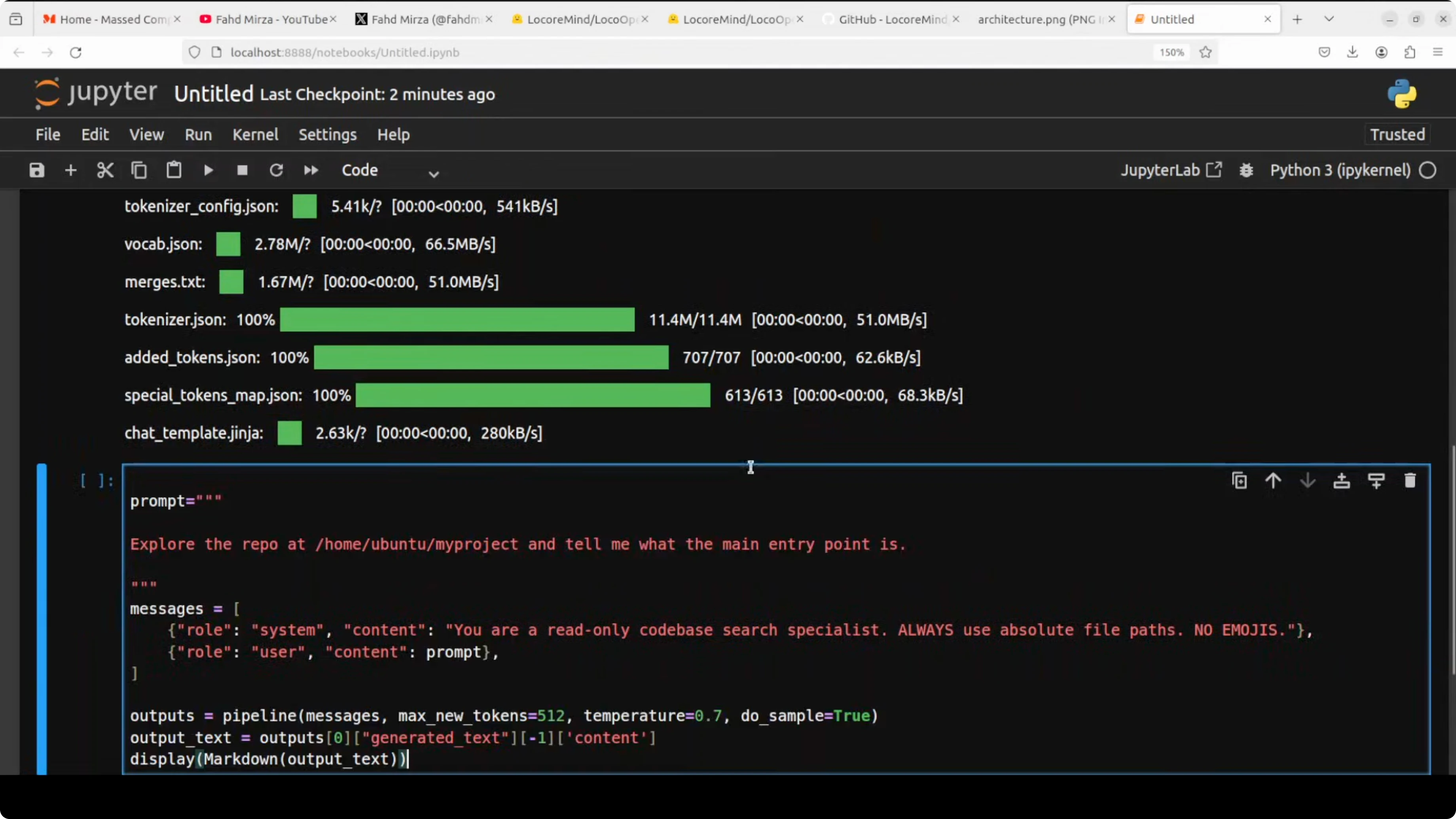

How LocoOperator-4B Surpassed Local AI Models? structured output

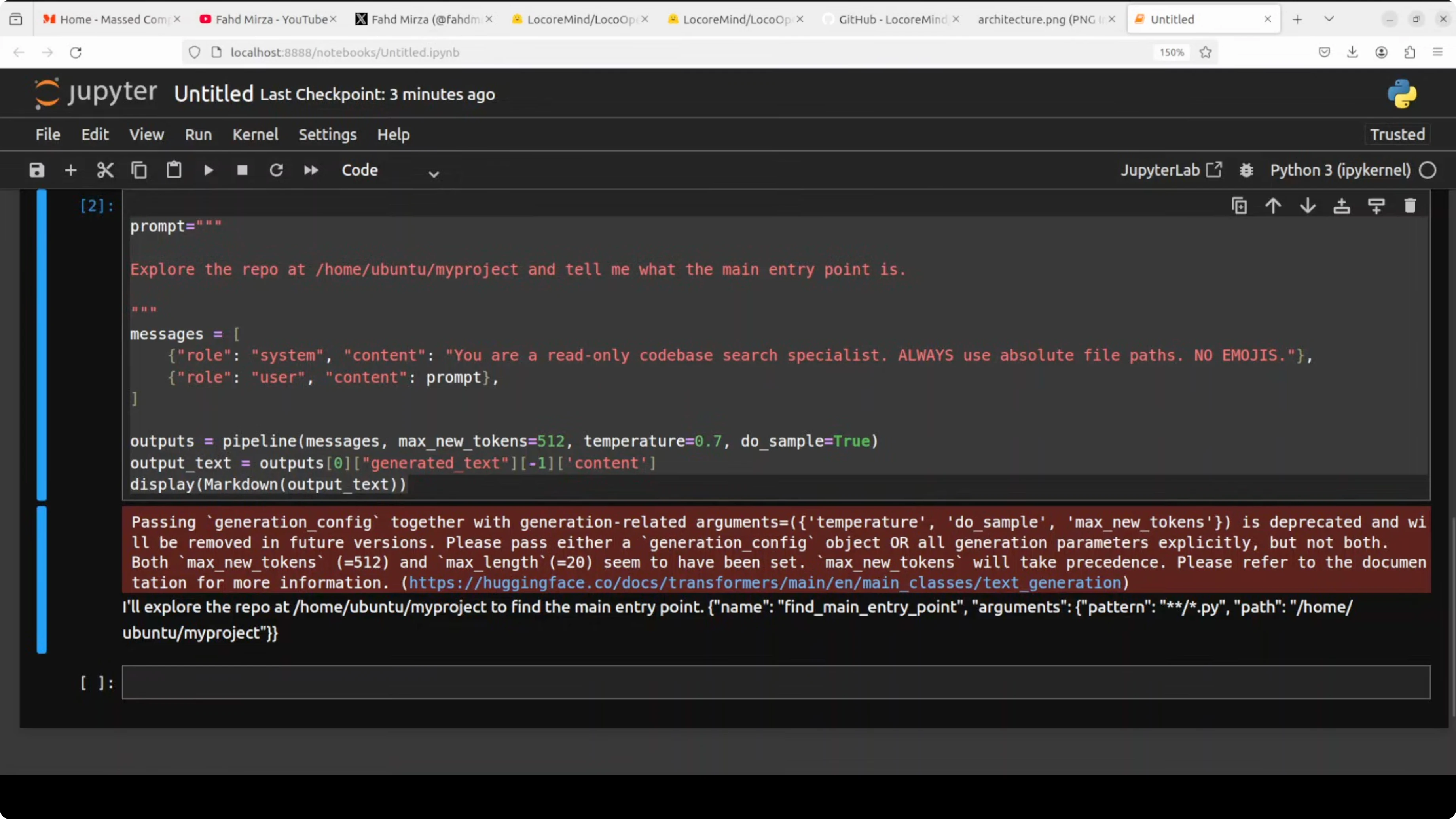

The use case is simple: ask it to explore a repo and tell you exactly how it would issue a function or tool call. It outputs a structured tool call JSON instead of plain text. In a real agent loop, another process would execute that tool call against your file system.

Here is a minimal example prompt to explore a local path and find the main entry point.

```python
system = (
    "You are LocoOperator-4B. Your only job is to propose a single tool call as JSON. "
    "Respond with one JSON object with keys: name, arguments. No prose."
)
user = (
    "Explore the repo at /home/user/my_project and tell me what the main entry point is. "
    "Prefer Python files that start the app."
)

# Hand-written chat markers; the checkpoint's own chat template may differ.
template = f"<|system|>\n{system}\n<|user|>\n{user}\n<|assistant|>\n"
inputs = tokenizer(template, return_tensors="pt").to(model.device)

# Greedy decoding keeps the JSON output deterministic.
out = model.generate(
    **inputs,
    max_new_tokens=400,
    do_sample=False,
    eos_token_id=tokenizer.eos_token_id,
    pad_token_id=tokenizer.eos_token_id,
)

# Decode only the newly generated tokens, not the prompt.
raw = tokenizer.decode(out[0][inputs.input_ids.shape[-1]:], skip_special_tokens=True).strip()
print(raw)
```

A representative output looks like this.

```json
{
  "name": "search_repo",
  "arguments": {
    "path": "/home/user/my_project",
    "query": "main entry point",
    "globs": ["**/*.py"],
    "max_results": 100
  }
}
```

Its role is purely exploratory. It generates the tool call, then you can hand the actual analysis to a more powerful model like Anthropic Claude, Sonnet 4.7, or GLM 4.7. All the token-heavy scanning work moves to this tiny specialist.
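The prompt above builds the chat markers by hand. Whether `<|system|>`-style markers match what this checkpoint was trained on is not confirmed here, so a safer sketch is to prefer the chat template bundled with the tokenizer and fall back to the hand-written format only when none is present. The helper name `build_prompt` is hypothetical. `apply_chat_template` and the `chat_template` attribute are standard on Transformers tokenizers.

```python
def build_prompt(tokenizer, system: str, user: str) -> str:
    """Format a system/user pair, preferring the tokenizer's own chat template."""
    messages = [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]
    # Use the template that ships with the checkpoint when one is defined.
    if getattr(tokenizer, "chat_template", None):
        return tokenizer.apply_chat_template(
            messages, tokenize=False, add_generation_prompt=True
        )
    # Fallback: the hand-written format used in this article.
    return f"<|system|>\n{system}\n<|user|>\n{user}\n<|assistant|>\n"
```

The returned string can be passed to `tokenizer(..., return_tensors="pt")` exactly like `template` in the example above.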


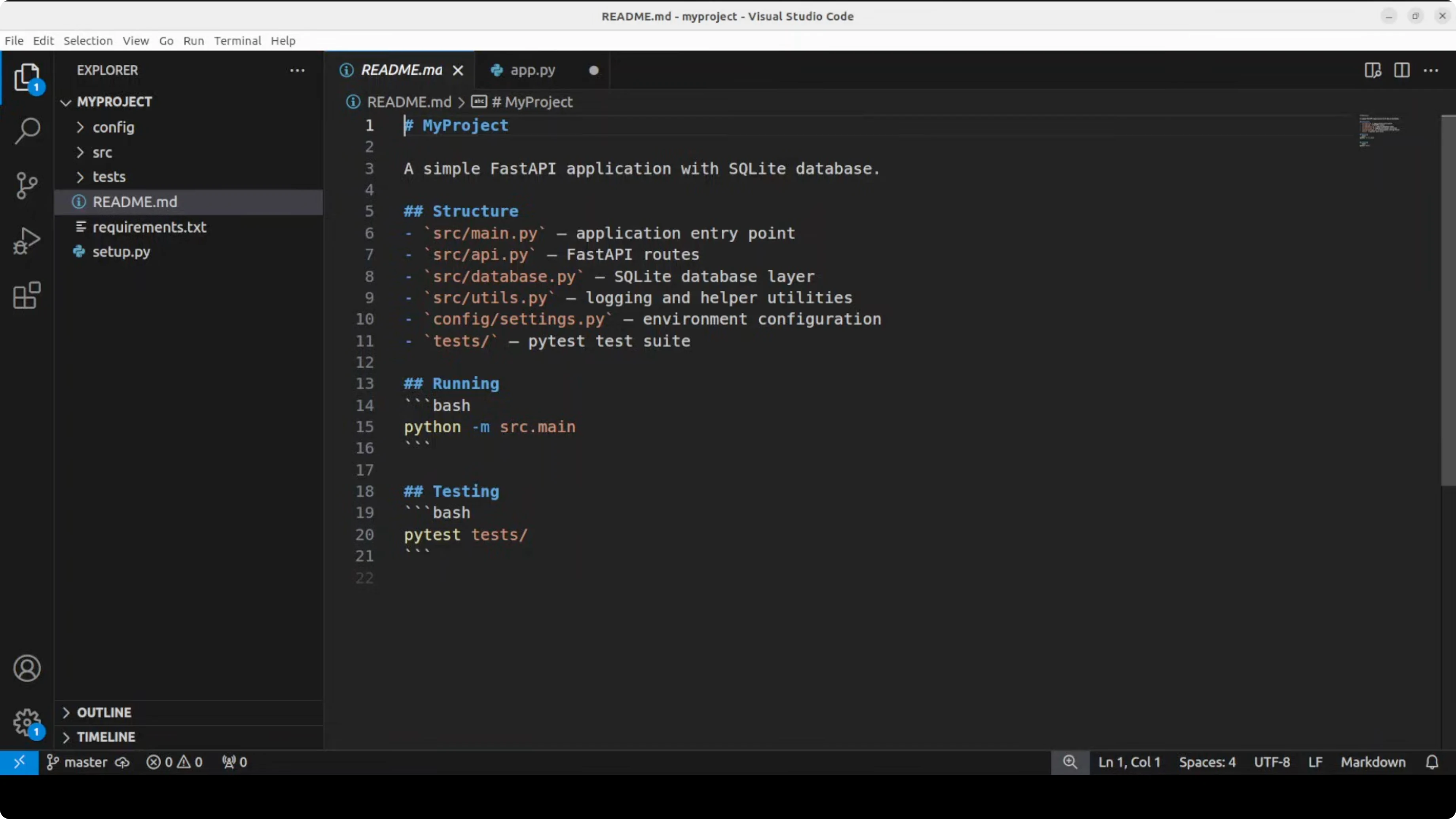

How LocoOperator-4B Surpassed Local AI Models? fastapi test

I created a simple FastAPI project with a database layer, API routes, tests, and a main entry point. The repo contains realistic code across sources, configs, and tests. There is also a requirements file.
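If you do not have a comparable project at hand, a throwaway fixture is enough to try the flow. The sketch below writes a tiny FastAPI-shaped tree into a temporary directory. Every file name and file body in it is hypothetical and chosen only for illustration; it is not the repo used in this article.

```python
import tempfile
from pathlib import Path

# Hypothetical layout and contents, for illustration only.
FIXTURE_FILES = {
    "requirements.txt": "fastapi\nuvicorn\n",
    "app/__init__.py": "",
    "app/db.py": "def get_connection():\n    return None\n",
    "app/routes.py": "from fastapi import APIRouter\n\nrouter = APIRouter()\n",
    "app/main.py": (
        "import uvicorn\n"
        "from fastapi import FastAPI\n"
        "from app.routes import router\n\n"
        "app = FastAPI()\n"
        "app.include_router(router)\n\n"
        'if __name__ == "__main__":\n'
        "    uvicorn.run(app)\n"
    ),
    "tests/test_routes.py": "def test_placeholder():\n    assert True\n",
}


def create_fixture_repo(root: Path) -> list[Path]:
    """Write the fixture files under root and return the paths written."""
    written = []
    for relative, content in FIXTURE_FILES.items():
        target = root / relative
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content, encoding="utf-8")
        written.append(target)
    return written


repo_root = Path(tempfile.mkdtemp(prefix="fastapi_fixture_"))
paths = create_fixture_repo(repo_root)
print(f"Wrote {len(paths)} files under {repo_root}")
```

Pointing the project path at `repo_root` gives the model something small and predictable to explore.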

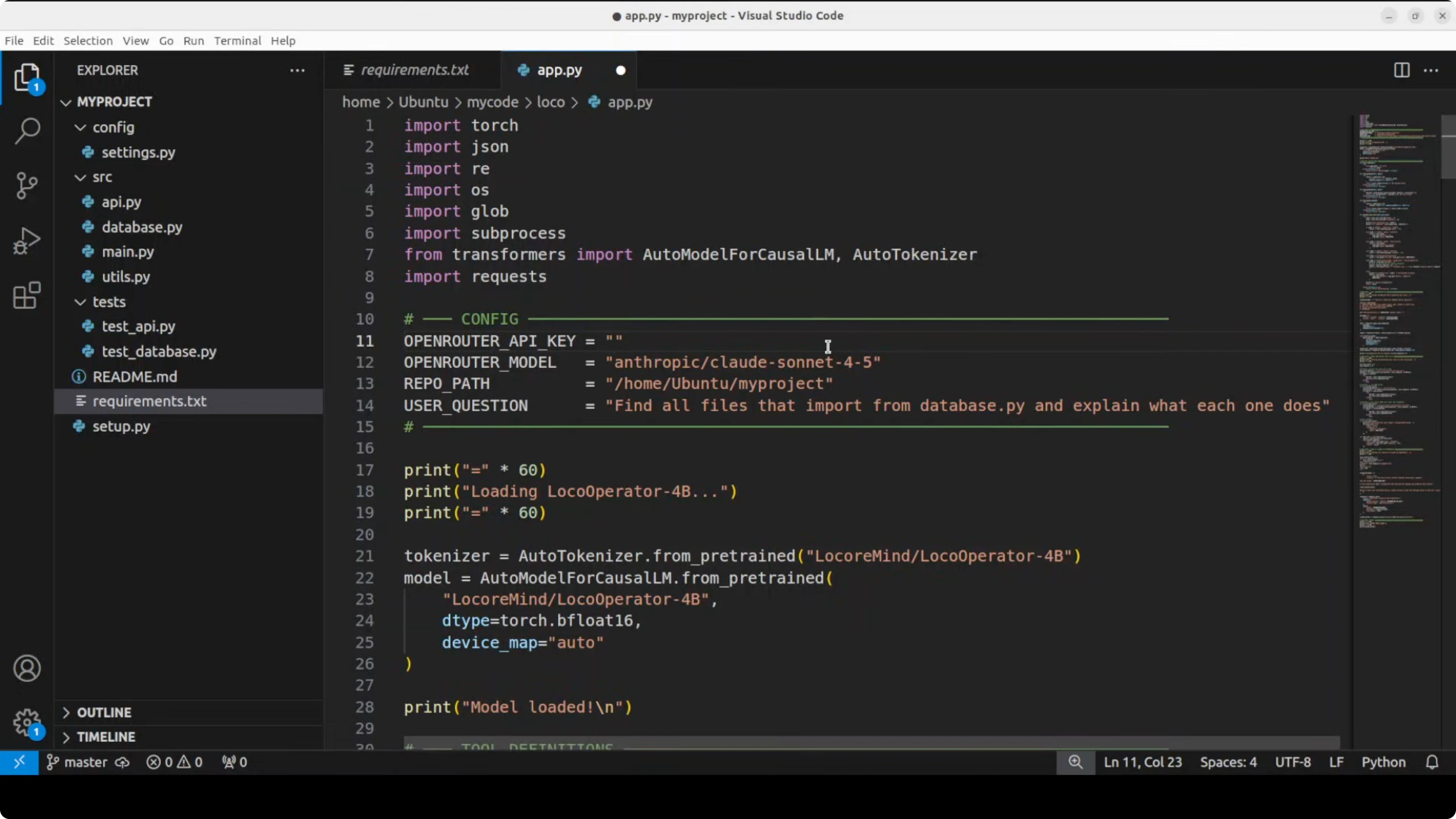

In the driver code, I load the model locally and ask it to explore, and it responds with a structured tool call JSON. The script executes that tool call on the real file system, collects the matching Python files, and sends the results to Claude via OpenRouter for final analysis. Generating that tool call is exactly what the model was trained to do.
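Because the script executes whatever the model emits, it can help to parse and validate the output before acting on it. A bare `json.loads` fails as soon as the model adds a stray word or wraps the object in other text. The sketch below is one possible guard, not part of the model's tooling: the function name `extract_tool_call` is hypothetical, and the rule that `arguments` must be a non-empty object is an assumption based on the `name`/`arguments` shape shown earlier.

```python
import json


def extract_tool_call(raw: str) -> dict:
    """Return the first JSON object found in raw, checked as a tool call.

    Raises ValueError if no object is found or the required keys are unusable.
    """
    decoder = json.JSONDecoder()
    start = raw.find("{")
    while start != -1:
        try:
            # raw_decode parses one JSON value starting at index `start`
            # and tolerates trailing text after it.
            candidate, _ = decoder.raw_decode(raw, start)
        except json.JSONDecodeError:
            start = raw.find("{", start + 1)
            continue
        name = candidate.get("name")
        arguments = candidate.get("arguments")
        if not isinstance(name, str) or not name:
            raise ValueError("tool call is missing a 'name' string")
        if not isinstance(arguments, dict) or not arguments:
            raise ValueError("tool call has empty or non-object 'arguments'")
        return candidate
    raise ValueError("no JSON object found in model output")
```

Rejecting empty arguments up front means a malformed call is caught before anything touches the file system.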

How LocoOperator-4B Surpassed Local AI Models? driver code

Below is a minimal driver that executes the tool call locally and forwards the result to a cloud model. Replace the model id and path to match your setup.

```python
import glob
import json
import os
from pathlib import Path

import requests
import torch  # needed for torch.float16 below
from transformers import AutoModelForCausalLM, AutoTokenizer

MODEL_ID = "locooperator-4b"  # replace with the exact repo id
PROJECT_PATH = "/home/user/my_project"
OPENROUTER_API_KEY = os.environ.get("OPENROUTER_API_KEY")

tokenizer = AutoTokenizer.from_pretrained(MODEL_ID, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained(
    MODEL_ID,
    torch_dtype=torch.float16,
    device_map="auto",
    trust_remote_code=True,
)


def ask_for_tool_call(path: str, query: str) -> dict:
    """Ask the local model for a single tool call and parse it as JSON."""
    system = (
        "You are LocoOperator-4B. Output exactly one JSON object with keys: name, arguments. "
        "No extra text."
    )
    user = f"Explore the repo at {path} and find the main entry point. Query: {query}"
    prompt = f"<|system|>\n{system}\n<|user|>\n{user}\n<|assistant|>\n"
    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    out = model.generate(
        **inputs,
        max_new_tokens=400,
        do_sample=False,
        eos_token_id=tokenizer.eos_token_id,
        pad_token_id=tokenizer.eos_token_id,
    )
    # Decode only the newly generated tokens, not the prompt.
    raw = tokenizer.decode(out[0][inputs.input_ids.shape[-1]:], skip_special_tokens=True).strip()
    # Raises json.JSONDecodeError if the output is not a single JSON object.
    return json.loads(raw)


def execute_tool_call(tool_call: dict) -> dict:
    """Run the proposed search against the local file system."""
    args = tool_call.get("arguments", {})
    path = args.get("path", PROJECT_PATH)
    query = args.get("query", "main")
    globs = args.get("globs", ["**/*.py"])

    files = []
    for pattern in globs:
        files.extend(glob.glob(str(Path(path) / pattern), recursive=True))

    # Match either quoting style of the __main__ guard, plus uvicorn launches.
    entry_markers = ("if __name__ == '__main__'", 'if __name__ == "__main__"', "uvicorn.run")
    matches = []
    for f in files:
        try:
            text = Path(f).read_text(encoding="utf-8", errors="ignore")
        except Exception:
            continue  # skip unreadable paths
        if any(marker in text for marker in entry_markers) or query in text:
            matches.append({"file": f, "snippet": text[:2000]})
    return {"query": query, "matches": matches[:10]}


def send_to_claude(context: dict) -> str:
    """Forward the local search results to a cloud model via OpenRouter."""
    if not OPENROUTER_API_KEY:
        return "OpenRouter key not set. Skipping."
    headers = {
        "Authorization": f"Bearer {OPENROUTER_API_KEY}",
        "Content-Type": "application/json",
    }
    payload = {
        "model": "anthropic/claude-3.5-sonnet",
        "messages": [
            {"role": "system", "content": "You are a senior code reviewer."},
            {"role": "user", "content": f"Given these file matches, identify the main entry point:\n{json.dumps(context)}"},
        ],
    }
    r = requests.post("https://openrouter.ai/api/v1/chat/completions", headers=headers, json=payload, timeout=120)
    r.raise_for_status()
    data = r.json()
    return data["choices"][0]["message"]["content"]


tool_call = ask_for_tool_call(PROJECT_PATH, "main entry point")
fs_result = execute_tool_call(tool_call)
analysis = send_to_claude(fs_result)

print("Tool call:", json.dumps(tool_call, indent=2))
print("Local FS result keys:", list(fs_result.keys()))
print("Claude analysis:", analysis[:800])
```

How LocoOperator-4B Surpassed Local AI Models? run flow

Load the model. Ask a question about your codebase.

Let LocoOperator-4B generate a structured tool call. Execute that tool call against your actual project on the file system.

Forward the concrete results to a large model on OpenRouter. Receive a deep analysis grounded in real files, not hallucination.

You get real file system results and a local 4 billion parameter model doing all the exploration work at zero API cost. A cloud hosted model like Claude or GPT can handle the higher level reasoning on top. You can also integrate with Claude Code, OpenClaw agent, or similar stacks.
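Since the executed tool call takes its arguments from model output, one precaution when running against a real file system is to confine every path to the project root. The sketch below is a minimal guard under assumptions: the name `resolve_inside` is hypothetical, and it relies on `Path.is_relative_to`, which needs Python 3.9 or newer.

```python
from pathlib import Path


def resolve_inside(root: str, requested: str) -> Path:
    """Resolve requested against root and refuse anything outside root."""
    root_path = Path(root).resolve()
    # An absolute `requested` replaces root_path here, so it is checked too.
    candidate = (root_path / requested).resolve()
    if not candidate.is_relative_to(root_path):
        raise PermissionError(f"{requested!r} escapes {root_path}")
    return candidate
```

Calling this on the `path` argument before any glob or read means a tool call such as `../../etc/passwd` raises an error instead of being executed.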


How LocoOperator-4B Surpassed Local AI Models? results

This little student model actually outperformed its own teacher on structured output. It scored 100 percent versus 87.6 percent for the teacher on argument syntax correctness. It also avoided empty argument tool calls entirely, with zero compared to 11 from the teacher.

That is the whole point of LocoOperator-4B. You get a tiny model running completely offline that takes on the expensive codebase exploration work for free. Any cloud model just handles the thinking on top.
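The article does not spell out how the two numbers above were measured. One plausible reading, sketched below purely as an assumption, is that a call is syntax-correct when the output parses as JSON with an object under `arguments`, and that empty-argument calls are the ones where that object is `{}`. The function name `score_tool_calls` and the sample strings are invented for illustration and do not reproduce the reported scores.

```python
import json


def score_tool_calls(raw_outputs: list[str]) -> dict:
    """Count parseable tool calls and calls whose arguments object is empty."""
    valid = 0
    empty_arguments = 0
    for raw in raw_outputs:
        try:
            call = json.loads(raw)
        except json.JSONDecodeError:
            continue  # unparseable output counts against syntax correctness
        if not isinstance(call, dict) or not isinstance(call.get("arguments"), dict):
            continue
        valid += 1
        if not call["arguments"]:
            empty_arguments += 1
    total = len(raw_outputs)
    return {
        "total": total,
        "syntax_correct_pct": 100.0 * valid / total if total else 0.0,
        "empty_argument_calls": empty_arguments,
    }


# Made-up samples: one good call, one empty call, two broken outputs.
samples = [
    '{"name": "read_file", "arguments": {"path": "a.py"}}',
    '{"name": "read_file", "arguments": {}}',
    '{"name": "read_file", "arguments": {"path": ',
    "not json",
]
print(score_tool_calls(samples))
```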


Final thoughts

LocoOperator-4B is a focused sub agent that reads, searches, and navigates code while emitting structured tool call JSON. It pairs well with a cloud model through OpenRouter to deliver grounded analysis on real files. For broader agent workflows and orchestration ideas, see agentic basics and explore options like OpenClaw agent.
