GameWorld: Advancing Standardized Multimodal Game Agent Evaluation

Table of Contents

- What is GameWorld: Advancing Standardized Multimodal Game Agent Evaluation

- GameWorld: Advancing Standardized Multimodal Game Agent Evaluation Overview

- GameWorld: Advancing Standardized Multimodal Game Agent Evaluation Key Features

- GameWorld: Advancing Standardized Multimodal Game Agent Evaluation Use Cases

- How GameWorld Works

- Five Genres, Many Skills

- Performance & Showcases

- Game Suite at a Glance

- The Technology Behind It

- Practical Tips for Using GameWorld Results

- FAQ

- How is performance scored?

- What is the verifiable evaluation?

- Which agent interfaces does it compare?

- What kinds of games are included?

- How do human players compare to agents?

What is GameWorld: Advancing Standardized Multimodal Game Agent Evaluation

GameWorld is a large test suite that checks how AI game agents think and act inside real browser games. It covers many skills like timing, control, navigation, and planning, then scores progress in a clear and fair way.

The goal is simple. Put different types of agents in the same setup, let them play the same tasks, then measure how far they get based on the true state of the game, not a guess.

GameWorld: Advancing Standardized Multimodal Game Agent Evaluation Overview

GameWorld runs inside a browser sandbox and compares two agent interfaces: a generalist multimodal agent that acts through higher-level plans, and a computer-use agent that issues low-level keyboard and mouse actions like a user. It includes 34 games and 170 tasks across five classic game genres.

Here is a quick summary you can scan.

| Item | Details |
|---|---|
| Type | Standardized benchmark and evaluation suite for multimodal game agents |
| Purpose | Fair and repeatable scoring of agent progress and success inside browser games |
| Scope | 34 games and 170 tasks across five genres |
| Agent interfaces | Computer use agents and generalist multimodal agents, tested in one browser setup |
| Core skills tested | Timing, reactive control, spatial navigation, symbolic reasoning, long horizon planning and coordination |
| How it scores | Outcome based and state verifiable scoring that reads true game state variables |
| Environment | Shared browser sandbox with controlled action interfaces and a shared runtime |
| Human baselines | Expert and novice player baselines for clear context |
| Authors | National University of Singapore and University of Oxford team |
| Best for | Labs, builders, and researchers who need clear apples to apples agent comparison |

GameWorld: Advancing Standardized Multimodal Game Agent Evaluation Key Features

- Two agent styles in one place: You can compare a generalist multimodal agent with a computer use agent under the same rules. This shows how high level plans differ from low level key presses and mouse moves.

- Clear, fair scoring: GameWorld reads the true game state to judge progress and success. No tricks and no judge model are needed.

- Big and varied game suite: The suite spans five genres across 34 games and 170 tasks. It stresses fast reaction, clean timing, maze like movement, puzzle logic, and long horizon planning.

- Shared sandbox and actions: A browser sandbox and controlled action interfaces keep runs fair and repeatable. This also makes side by side tests easier to set up.

- Progress and success as two signals: Agents often make good progress but still fail to finish. You get both numbers so you can study where agents stall.

GameWorld: Advancing Standardized Multimodal Game Agent Evaluation Use Cases

- Compare agent designs: Test the same backbone with two control styles and see where each style helps or hurts.

- Build better training data: Use progress and failure spots to guide data collection for timing, navigation, or planning.

- Set fair internal checks: Run the same game tasks after each model update and track steady gains or drops.

- Teach and demo: Use simple browser games to teach core ideas like closed loop control and long horizon planning.
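The internal-check use case can be sketched as a small regression filter. This is only an illustration: the function name `find_regressions`, the `tolerance` parameter, and the task ids are hypothetical and not part of GameWorld.

```python
def find_regressions(previous: dict, current: dict, tolerance: float = 0.05) -> list:
    """Return task ids whose progress dropped by more than `tolerance`.

    `previous` and `current` map a task id to a progress value in [0, 1]
    (an assumed scale for this sketch).
    """
    regressions = []
    for task_id, old_progress in previous.items():
        new_progress = current.get(task_id)
        if new_progress is None:
            continue  # task missing from the new run; report it separately
        if old_progress - new_progress > tolerance:
            regressions.append(task_id)
    return sorted(regressions)


# Made-up numbers for two runs of the same tasks.
before_update = {"task-a": 0.5, "task-b": 0.75, "task-c": 0.25}
after_update = {"task-a": 0.5, "task-b": 0.5, "task-c": 0.375}
regressed = find_regressions(before_update, after_update)
```

Running the same task list after every model update and diffing the results this way keeps the check tied to identical tasks rather than to an overall average that can hide per-task drops.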

How GameWorld Works

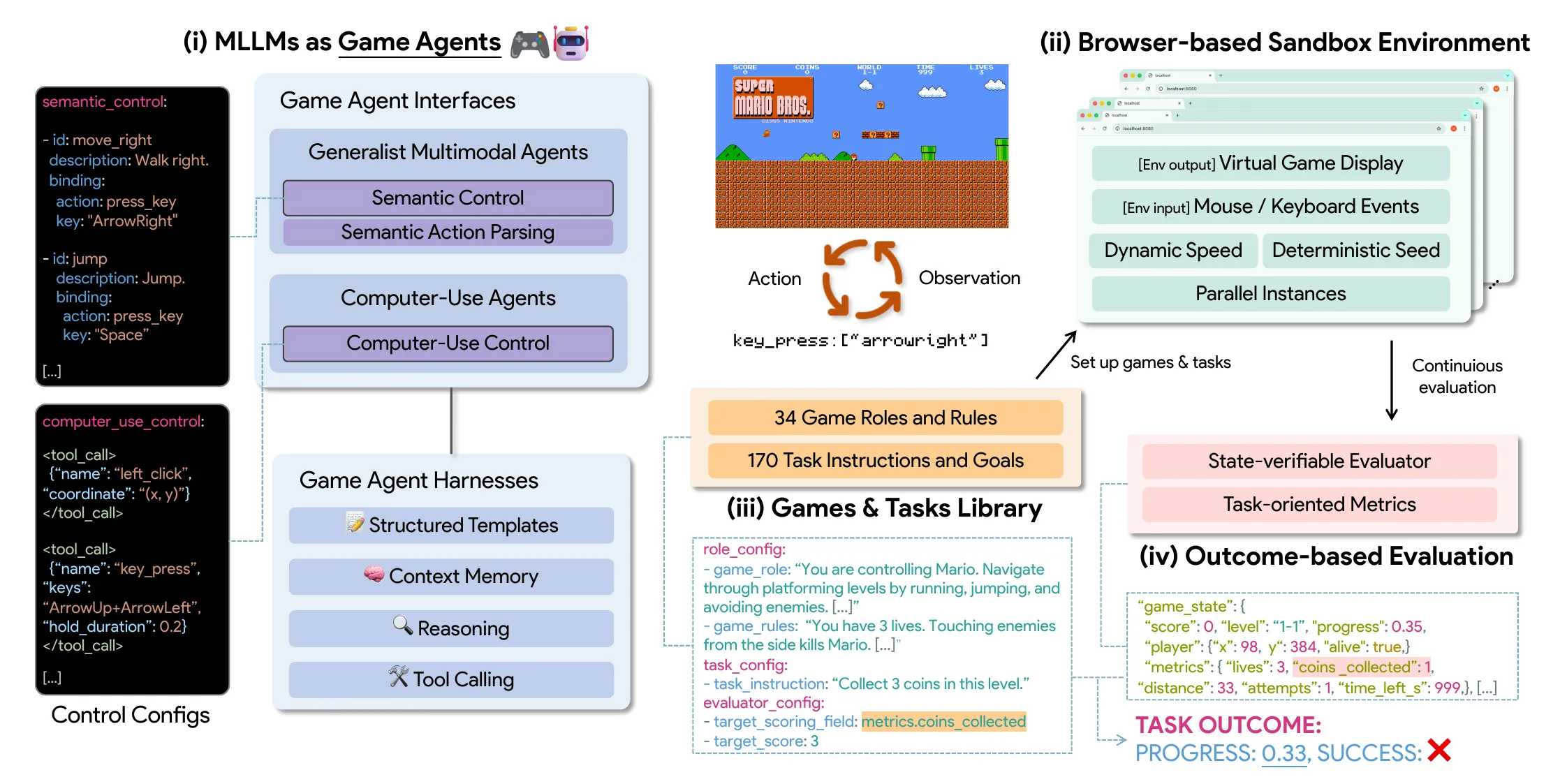

GameWorld closes a loop across three steps. The agent observes the game, takes an action, and then the system verifies progress from the true game state.

It includes four parts that work together. There is the agent brain, the browser sandbox, the games and tasks library, and the outcome based evaluator.
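To make the loop concrete, here is a minimal sketch with a toy game standing in for a browser game. Every name in it (`ToyRunnerGame`, `always_right_agent`, `verify`, `run_episode`) is a hypothetical placeholder, and the progress formula is an assumption, not GameWorld's actual code.

```python
from dataclasses import dataclass


@dataclass
class ToyRunnerGame:
    """Hypothetical stand-in for a sandboxed browser game."""
    position: int = 0
    goal: int = 5
    steps: int = 0

    def observe(self) -> dict:
        # A real agent would receive a screenshot; here it gets a tiny dict.
        return {"position": self.position}

    def act(self, action: str) -> None:
        # Only "right" moves the player forward in this toy game.
        if action == "right":
            self.position += 1
        self.steps += 1

    def serialize_state(self) -> dict:
        # The evaluator reads this state, not rendered frames.
        return {"position": self.position, "goal": self.goal}


def always_right_agent(observation: dict) -> str:
    """Trivial placeholder policy that always moves right."""
    return "right"


def verify(state: dict) -> tuple[float, bool]:
    """Assumed scoring: progress is the clamped fraction of the goal reached."""
    progress = min(state["position"] / state["goal"], 1.0)
    return progress, state["position"] >= state["goal"]


def run_episode(game, agent, step_budget: int) -> tuple[float, bool]:
    progress, success = verify(game.serialize_state())
    for _ in range(step_budget):
        if success:
            break
        action = agent(game.observe())                       # 1. observe
        game.act(action)                                     # 2. act
        progress, success = verify(game.serialize_state())   # 3. verify
    return progress, success
```

With a budget of 3 steps the toy agent stops partway to the goal, and with a budget of 10 it finishes, which mirrors how a step budget can separate progress from success.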

The key idea is trust in the score. By reading serialized game state, GameWorld can compute success and progress from task variables, not from frames or a judge.
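As a rough illustration of scoring from serialized state, the sketch below parses a JSON snapshot and derives both metrics from one task variable. The function name, the JSON layout, and the linear progress formula are all assumptions made for this example; the benchmark may define progress differently.

```python
import json


def score_from_state(serialized_state: str, variable: str,
                     start: float, target: float) -> dict:
    """Compute progress and success from one task variable.

    Assumptions for this sketch: `target` is greater than `start`, and
    progress is the clamped linear fraction of the way from `start` to
    `target`.
    """
    state = json.loads(serialized_state)
    value = float(state[variable])
    fraction = (value - start) / (target - start)
    progress = max(0.0, min(1.0, fraction))
    return {"progress": progress, "success": value >= target}


# Hypothetical snapshot of a game where the task is to collect 4 coins.
snapshot = '{"coins": 3, "lives": 2}'
result = score_from_state(snapshot, "coins", start=0, target=4)
```

Because the score depends only on the variable's value, two runs that end in the same state get the same score no matter how the frames looked.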

Five Genres, Many Skills

GameWorld covers five big genres to test a wide set of skills. Each genre has several games and tasks.

Runner games test fast timing and precise jumps to dodge hazards. The agent must react quickly and stay on track.

Puzzle games focus on logic and planning. The agent must explore a discrete state space, follow rules, and avoid traps.

Platformer, arcade, and simulation genres round out the suite. They bring dynamic tracking, item collection, multi step plans, and open world coordination.

Performance & Showcases

Across the full suite, today's top agents make solid partial progress but still fall short of human players. The best models reach a progress score of about 41, while the novice human baseline sits near 64 under the same budget.

Success rates remain lower than progress rates, which means agents often start well but do not close the task. Scores tend to be higher on reactive control and puzzle logic, and drop on tight timing, spatial navigation, and open world coordination.

Showcase 1: Standardized and state verifiable overview across 34 games and 170 tasks. GameWorld is a standardized, state verifiable benchmark for multimodal game agents in browser environments, covering 34 games, 170 tasks, and two agent interfaces. This video gives a quick sense of scope and how agents are tested under one clear protocol.

Showcase 2: Mario Game representative trajectory preview. You will see how the same backbone behaves when action is done through semantic plans versus low level key presses on the same task.

Game Suite at a Glance

The library lists 34 browser games that span well-known titles and styles. Examples include Chrome Dino, Flappy Bird, Pac-Man, Minesweeper, Tetris, and a Minecraft-like sandbox.

Each task pairs a plain language goal with a target metric and a verifiable evaluator. This makes every run measurable and easy to compare.
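One way to picture such a task record is a small immutable structure. The field names, the two example tasks, and the `reached_target` evaluator below are hypothetical and chosen only to show the pairing of goal, metric, and evaluator.

```python
from dataclasses import dataclass
from typing import Callable

State = dict[str, float]


@dataclass(frozen=True)
class Task:
    game: str
    goal: str        # plain-language instruction shown to the agent
    metric: str      # name of the state variable being tracked
    target: float    # value of `metric` that counts as success
    evaluator: Callable[[State, "Task"], bool]


def reached_target(state: State, task: Task) -> bool:
    """Succeeds once the tracked variable reaches the task's target."""
    return state.get(task.metric, 0.0) >= task.target


# Made-up tasks for illustration; not taken from the GameWorld library.
TASKS = [
    Task("toy-runner", "Pass 10 obstacles without crashing.",
         "obstacles_passed", 10, reached_target),
    Task("toy-puzzle", "Clear 4 lines.", "lines_cleared", 4, reached_target),
]
```

Keeping the evaluator as a field lets each task carry its own check while the runner code stays the same for every game.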

The Technology Behind It

- Browser based sandbox: Runs all agents in a controlled browser setup with fixed inputs and outputs. This keeps the playing field even.

- Two action interfaces: Compare a general multimodal agent that plans in words with a computer use agent that clicks and types. You can isolate the effect of the interface.

- State level evaluation: The scorer reads serialized game variables to output success and progress. This avoids noisy frame based guessing.

Practical Tips for Using GameWorld Results

Start by grouping tasks by skill. Compare timing, navigation, and logic tasks to see where your agent shines or slows down.

Use both progress and success to guide next steps. Rising progress shows the agent is getting further into a task, while a gap between progress and success shows it struggles to close the task out.
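The two tips above can be sketched as a per-skill summary. The result rows, skill labels, and the `close_out_gap` name are invented for this example, and progress is assumed to lie in [0, 1].

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-task results; the numbers are made up.
results = [
    {"task": "runner-1", "skill": "timing", "progress": 0.25, "success": False},
    {"task": "runner-2", "skill": "timing", "progress": 0.5, "success": False},
    {"task": "maze-1", "skill": "navigation", "progress": 0.25, "success": False},
    {"task": "puzzle-1", "skill": "logic", "progress": 0.75, "success": True},
    {"task": "puzzle-2", "skill": "logic", "progress": 0.25, "success": False},
]


def summarize_by_skill(rows):
    """Group rows by skill and report mean progress, success rate, and their gap."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["skill"]].append(row)
    summary = {}
    for skill, group in grouped.items():
        mean_progress = mean(r["progress"] for r in group)
        success_rate = mean(1.0 if r["success"] else 0.0 for r in group)
        summary[skill] = {
            "mean_progress": mean_progress,
            "success_rate": success_rate,
            # A large positive gap suggests the agent starts well but does not finish.
            "close_out_gap": mean_progress - success_rate,
        }
    return summary


summary = summarize_by_skill(results)
```

Sorting skills by `close_out_gap` is one simple way to decide where to look first.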

When you test two interfaces on the same backbone, match tasks and step budgets. This gives fair, clean comparisons you can trust.
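A small sketch of that matching step follows. It compares only runs that share the same task and step budget and reports the rest as skipped. The record layout and the function name `paired_progress_delta` are assumptions for illustration.

```python
def paired_progress_delta(runs_a, runs_b):
    """Mean progress difference (A minus B) over matched (task, step_budget) pairs.

    Returns the mean difference (or None if nothing matched) and the list
    of keys from `runs_a` that had no counterpart in `runs_b`.
    """
    index_b = {(r["task"], r["step_budget"]): r["progress"] for r in runs_b}
    deltas = []
    skipped = []
    for r in runs_a:
        key = (r["task"], r["step_budget"])
        if key in index_b:
            deltas.append(r["progress"] - index_b[key])
        else:
            skipped.append(key)
    mean_delta = sum(deltas) / len(deltas) if deltas else None
    return mean_delta, skipped


# Made-up runs of one backbone under two interfaces.
semantic_runs = [
    {"task": "t1", "step_budget": 100, "progress": 0.75},
    {"task": "t2", "step_budget": 100, "progress": 0.5},
    {"task": "t3", "step_budget": 200, "progress": 1.0},
]
low_level_runs = [
    {"task": "t1", "step_budget": 100, "progress": 0.5},
    {"task": "t2", "step_budget": 100, "progress": 0.25},
    {"task": "t3", "step_budget": 100, "progress": 0.5},
]
mean_delta, skipped = paired_progress_delta(semantic_runs, low_level_runs)
```

Here the third task is excluded because the two runs used different step budgets, so it cannot inflate the comparison.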

FAQ

How is performance scored?

GameWorld reports two metrics per task. Progress tells you how far the agent moved toward the goal and success tells you if it finished.

What is the verifiable evaluation?

The scorer reads the true game state at each step. It then computes success and progress from task variables instead of using image checks or a judge model.

Which agent interfaces does it compare?

It runs both computer use agents and generalist multimodal agents in the same browser setup. This makes it easy to see how planning in words compares to direct keyboard and mouse control.

What kinds of games are included?

There are five genres across 34 browser games and 170 tasks. You get runner, arcade, platformer, puzzle, and simulation games.

How do human players compare to agents?

Expert and novice baselines are reported. Today’s top agents still trail humans by a large margin on both progress and success.

Image source: GameWorld: Advancing Standardized Multimodal Game Agent Evaluation
